Authenticating Azure AKS Kubernetes Clusters with Okta SSO

How to authenticate Azure AKS Kubernetes clusters with Okta SSO to consolidate access and provide an audit log of that access.


In this article on securing access to Kubernetes, we will explore how Teleport adds a layer of security to clusters running on Azure Kubernetes Service (AKS), the managed Kubernetes service from Microsoft Azure.

Before proceeding further, make sure you have configured the Teleport authentication server to work with Okta SSO. Refer to the previous guide and the Teleport documentation for the setup.

This guide uses an existing Okta directory configured for the cloudnativelabs.in domain, while the Teleport proxy and authentication server are associated with the proxy.teleport-demo.in domain.

Step 1 - Provisioning an Azure Kubernetes Service (AKS) cluster

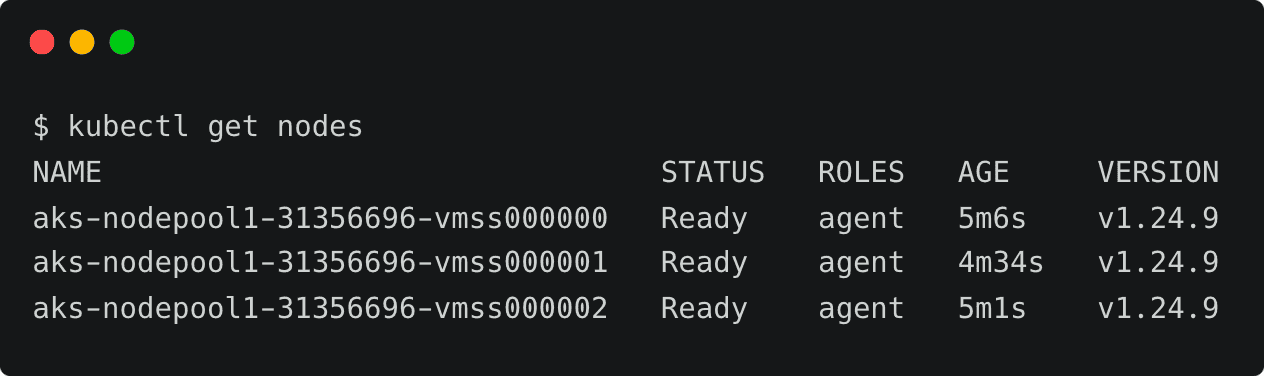

This tutorial launches an AKS cluster with three Ubuntu nodes running the default Kubernetes version offered by AKS. You can change parameters such as the Azure region and the number of nodes. To follow along, install the Azure CLI and sign in with az login.

```bash
# Keep this cluster's credentials in a dedicated kubeconfig file
touch aks-config
export KUBECONFIG="$PWD/aks-config"
export AZ_REGION=southindia

az group create \
  --name teleport-demo \
  --location $AZ_REGION

az aks create \
  --resource-group teleport-demo \
  --name cluster1 \
  --node-count 3 \
  --generate-ssh-keys
```

When provisioning completes, fetch the cluster credentials. The --file flag writes them to the aks-config file in the current directory (the file KUBECONFIG points to) instead of the default ~/.kube/config.

```bash
az aks get-credentials \
  --resource-group teleport-demo \
  --name cluster1 \
  --file "$KUBECONFIG"
```

You can now list the nodes and verify access to the cluster.

```bash
kubectl get nodes
```

```
NAME                                STATUS   ROLES   AGE     VERSION
aks-nodepool1-31356696-vmss000000   Ready    agent   5m6s    v1.24.9
aks-nodepool1-31356696-vmss000001   Ready    agent   4m34s   v1.24.9
aks-nodepool1-31356696-vmss000002   Ready    agent   5m1s    v1.24.9
```
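The Step 1 commands can also be collected into a reusable function so that the resource group, cluster name, region, and node count become parameters, together with a quick check that the nodes report Ready. This is a minimal sketch that assumes the Azure CLI is installed and signed in and that KUBECONFIG is exported as above; provision_aks and count_ready_nodes are hypothetical helper names, not part of any tool.

```bash
# Hypothetical helper: the Step 1 commands with the resource group, cluster
# name, region, and node count passed in as parameters.
provision_aks() {
  local rg="$1" name="$2" region="$3" nodes="$4"
  az group create --name "$rg" --location "$region" || return 1
  az aks create \
    --resource-group "$rg" \
    --name "$name" \
    --node-count "$nodes" \
    --generate-ssh-keys || return 1
  # Write the credentials to the file KUBECONFIG points to (aks-config above).
  az aks get-credentials --resource-group "$rg" --name "$name" --file "$KUBECONFIG"
}

# Hypothetical helper: count nodes whose STATUS column (second field of
# `kubectl get nodes --no-headers`) is exactly "Ready".
count_ready_nodes() {
  kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}
```

For example, running provision_aks teleport-demo cluster1 southindia 3 and then count_ready_nodes should print 3 once all nodes are up. Nodes with a STATUS such as Ready,SchedulingDisabled are deliberately not counted.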

Step 2 - Registering AKS cluster with Teleport

As with other resources such as servers, Teleport expects an agent to run inside the target cluster. You install this agent with a Helm chart, pointing it at the Teleport proxy server endpoint.

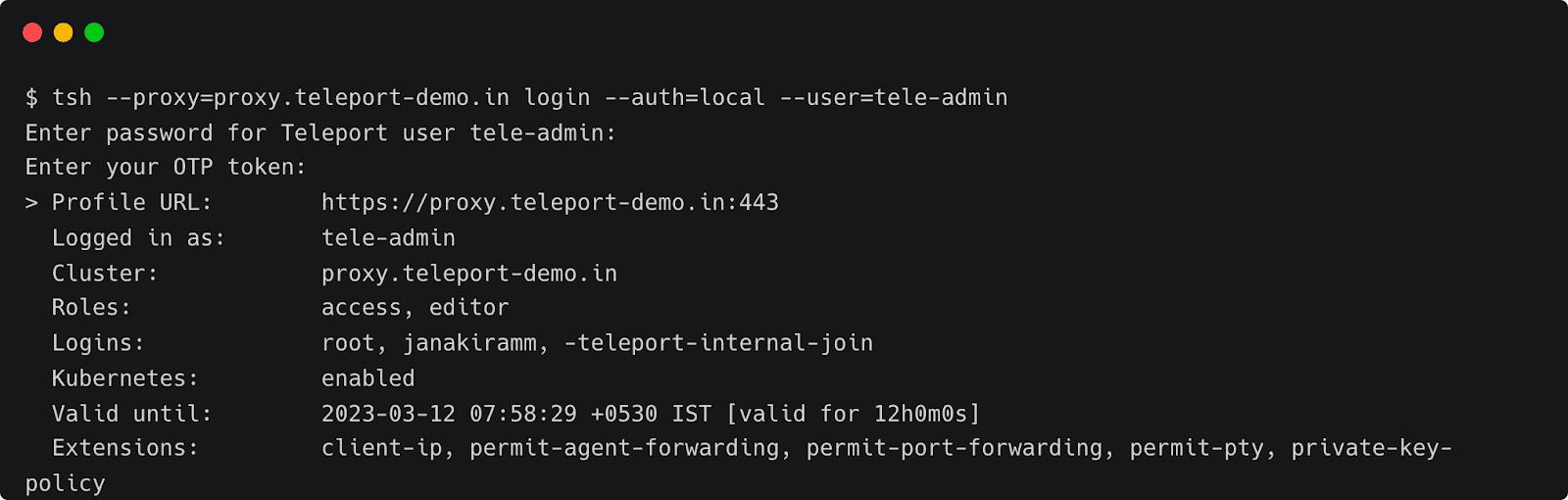
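The agent installation in this step can be summarized as one function that refuses to run without a usable join token and then installs the chart with the same chart, values, namespace, and version as the helm install command below. In that command, ${TOKEN?} aborts only when TOKEN is unset; an empty string, or the literal null that jq -r prints when the first element is missing, would still reach the chart. This is a sketch: install_teleport_agent and wait_for_agent are hypothetical names, and the 180-second timeout is an arbitrary choice.

```bash
# Hypothetical helper: install the teleport-kube-agent chart only when a
# plausible join token is available.
install_teleport_agent() {
  local cluster="$1" proxy="$2" token="$3"
  # Reject empty tokens and the literal "null" that `jq -r` emits when the
  # requested element is missing.
  if [ -z "$token" ] || [ "$token" = "null" ]; then
    echo "install_teleport_agent: missing join token" >&2
    return 1
  fi
  helm install teleport-agent teleport/teleport-kube-agent \
    --set kubeClusterName="$cluster" \
    --set proxyAddr="$proxy" \
    --set authToken="$token" \
    --create-namespace \
    --namespace=teleport-agent \
    --version 12.1.0
}

# Hypothetical helper: block until the agent pod reports Ready or 180s pass.
wait_for_agent() {
  kubectl wait --for=condition=Ready pod/teleport-agent-0 \
    -n teleport-agent --timeout=180s
}
```

Once TOKEN is set, usage would be install_teleport_agent aks-cluster proxy.teleport-demo.in:443 "$TOKEN" && wait_for_agent, which replaces the manual "wait a few minutes" check with an explicit readiness wait.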

Before proceeding further, we need a join token that the agent uses to register with Teleport. Log in to Teleport as a user that holds the editor and access roles, then create a token for the kube role.

```bash
tsh --proxy=proxy.teleport-demo.in login --auth=local --user=tele-admin
```

```
Enter password for Teleport user tele-admin:
Enter your OTP token:
> Profile URL: https://proxy.teleport-demo.in:443
Logged in as: tele-admin
Cluster: proxy.teleport-demo.in
Roles: access, editor
Logins: root, janakiramm, -teleport-internal-join
Kubernetes: enabled
Valid until: 2023-03-12 07:58:29 +0530 IST [valid for 12h0m0s]
Extensions: client-ip, permit-agent-forwarding, permit-port-forwarding, permit-pty, private-key-policy
```

With the session active, create the token and store it in the TOKEN variable (jq extracts the token from tctl's JSON output):

```bash
TOKEN=$(tctl nodes add --roles=kube --ttl=10000h --format=json | jq -r '.[0]')
```

Next, add Teleport's Helm repository and refresh the local chart index so the chart becomes available.

helm repo add teleport https://charts.releases.teleport.dev

helm repo updateThe below environment variables contain key parameters needed by the Helm chart.

PROXY_ADDR=proxy.teleport-demo.in:443

CLUSTER=aks-cluster

helm install teleport-agent teleport/teleport-kube-agent \

--set kubeClusterName=${CLUSTER?} \

--set proxyAddr=${PROXY_ADDR?} \

--set authToken=${TOKEN?} \

--create-namespace \

--namespace=teleport-agent \

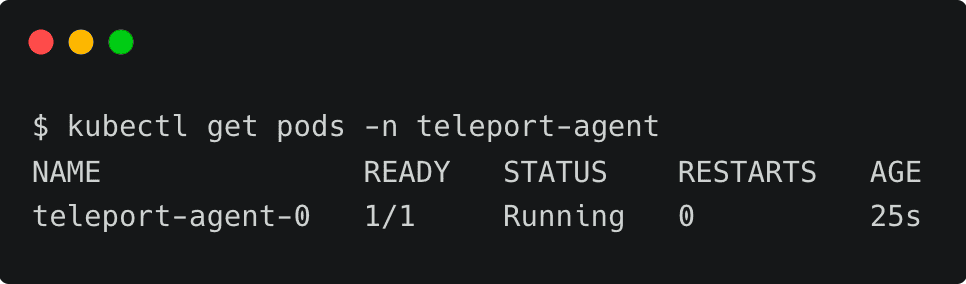

--version 12.1.0 Wait for a few minutes and check if the agent pod is up and running within the teleport-agent namespace.

```bash
kubectl get pods -n teleport-agent
```

```
NAME               READY   STATUS    RESTARTS   AGE
teleport-agent-0   1/1     Running   0          25s
```

You can also check the agent's logs with the following command:

```bash
kubectl logs teleport-agent-0 -n teleport-agent
```

Step 3 - Configuring Okta as SSO provider for AKS cluster

kubectl authenticates to the cluster with an identity stored in the kubeconfig file. We need to add that identity to the kubernetes_users list of a Teleport role so that users who hold the role can act as that Kubernetes user.

First, let's get the current user from the kubeconfig file.

```bash
kubectl config view -o jsonpath="{.contexts[?(@.name==\"$(kubectl config current-context)\")].context.user}"
```

By default, AKS names this user in the format clusterUser_RESOURCE_GROUP_CLUSTERNAME. Let's tell Teleport that users with the new role act as this Kubernetes user. The role below allows access to Kubernetes clusters with any label, places users in the viewers Kubernetes group, and lists the AKS user under kubernetes_users. Save it as kube-access.yaml and create it with tctl:

kind: role

metadata:

name: kube-access

version: v5

spec:

allow:

kubernetes_labels:

'*': '*'

kubernetes_groups:

- viewers

kubernetes_users:

- clusterUser_teleport-demo_cluster1

deny: {}tctl create -f kube-access.yaml

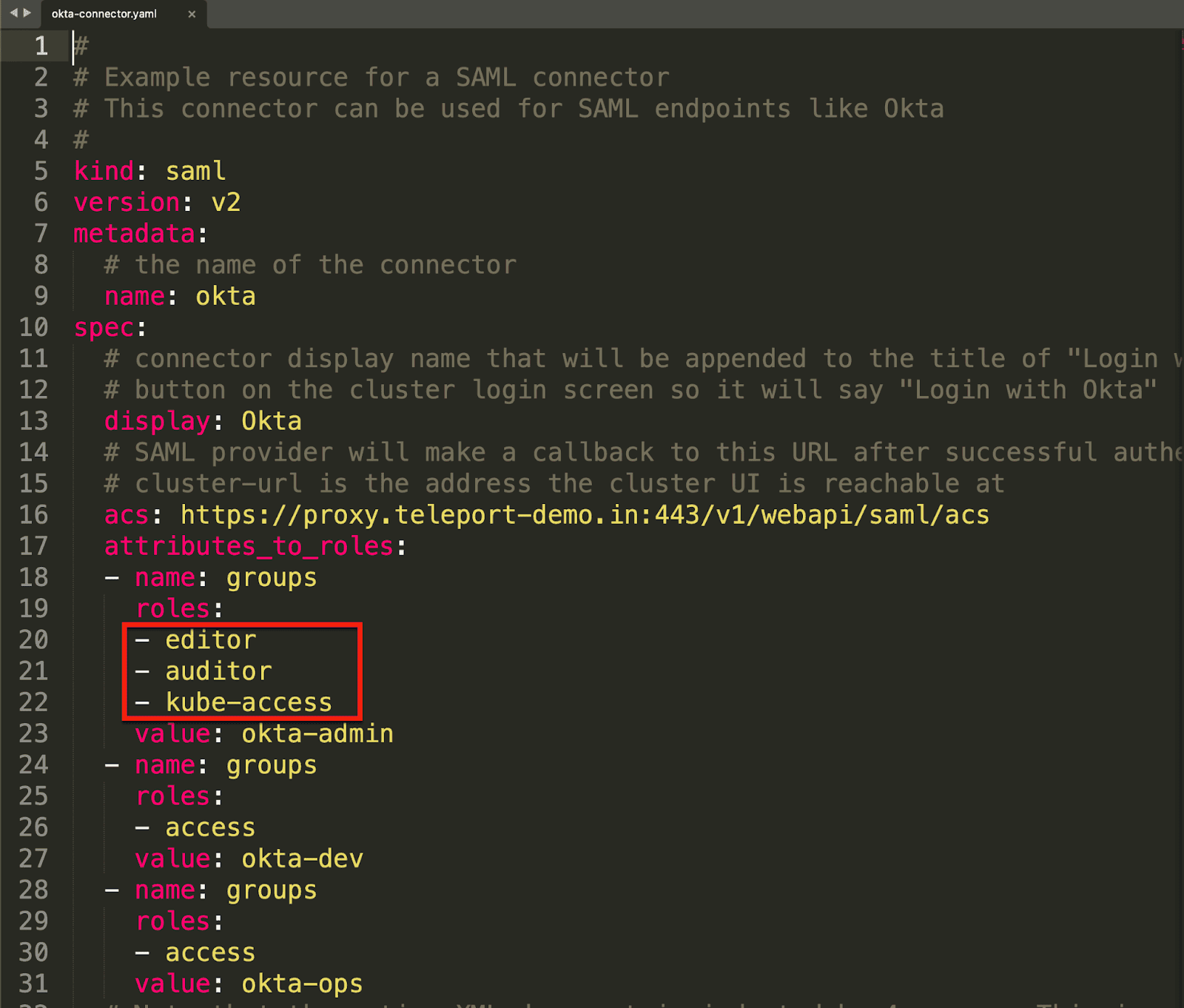

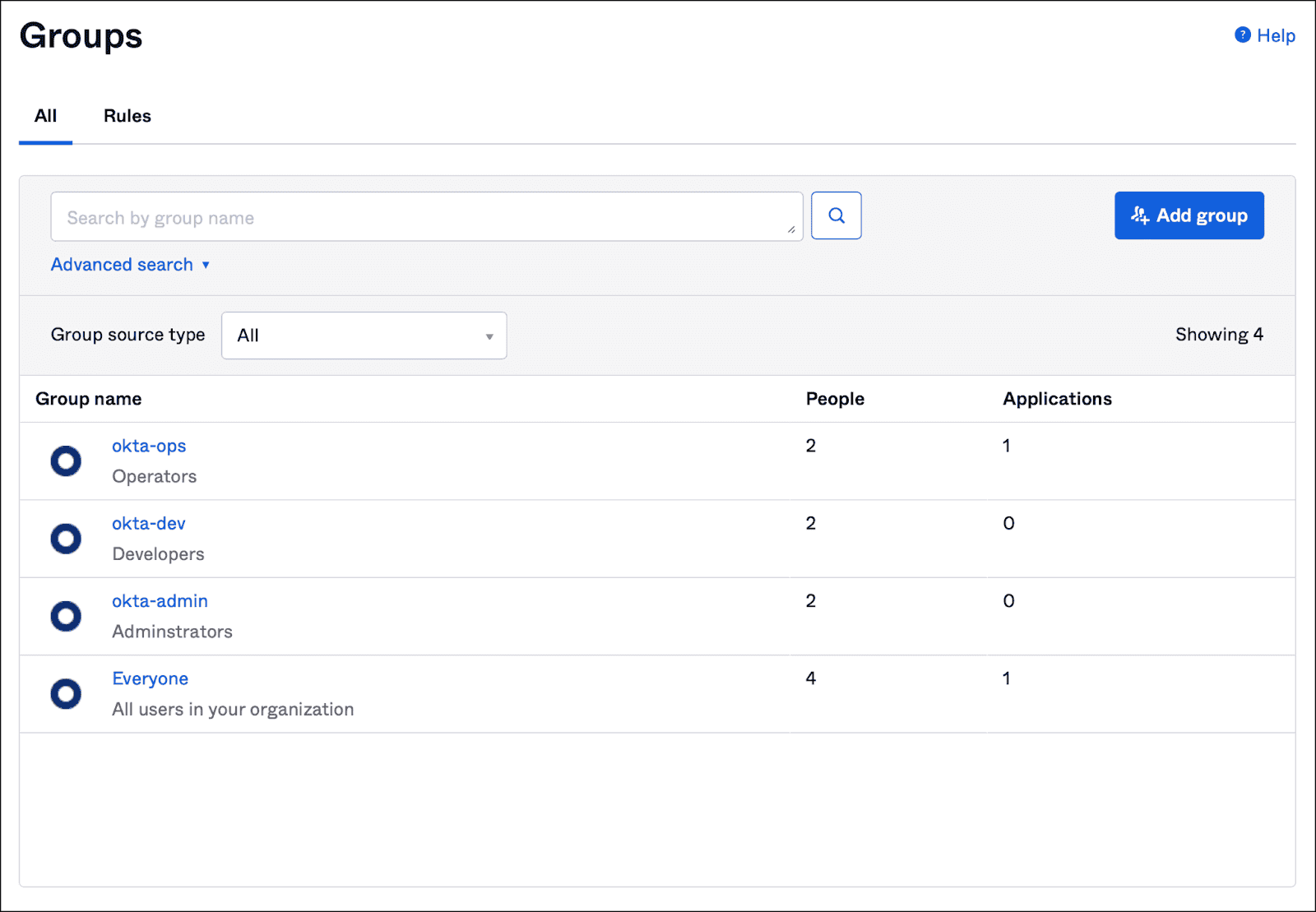

role 'kube-access' has been createdAfter this, we must update the ODIC connector created in the previous tutorial. This step ensures that users belonging to specific groups within the Okta directory can gain access to the cluster.

Update the Okta connector definition to add the auditor and kube-access roles to the mapping for the okta-admin group.

Notice how the okta-admin group configured in Okta is mapped to the Teleport roles.

Overwrite the connector configuration by applying it again. The -f (force) flag tells tctl create to overwrite an existing resource with the same name.

```bash
tctl create -f okta-sso.yaml
```

```
authentication connector 'okta' has been created
```

Finally, we need a cluster-wide role binding in Kubernetes that binds the groups defined in Teleport to local roles. Since the Teleport role lists only viewers under kubernetes_groups, bind that group to the built-in view ClusterRole. Save the following as viewers-bind.yaml:

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: viewers-crb
subjects:
- kind: Group
  name: viewers
  apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: view
  apiGroup: rbac.authorization.k8s.io
```

```bash
kubectl apply -f viewers-bind.yaml
```

This step closes the loop by mapping Teleport roles to Kubernetes cluster roles.
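Before testing through Teleport, the binding can be checked from the original AKS context with Kubernetes user impersonation (kubectl's --as and --as-group flags). Teleport's Kubernetes service forwards requests by impersonating the configured kubernetes_users and kubernetes_groups, so this roughly approximates what the Okta user will be allowed to do. The sketch assumes the AKS credentials are permitted to impersonate (cluster-admin-level credentials are); aks_cluster_user and check_viewers_binding are hypothetical names.

```bash
# Hypothetical helper: the kubeconfig user name AKS generates, following the
# clusterUser_<resource group>_<cluster name> pattern described above.
aks_cluster_user() {
  printf 'clusterUser_%s_%s\n' "$1" "$2"
}

# Hypothetical helper: impersonate the user as a member of "viewers" and check
# that the binding grants read access but not write access.
# `kubectl auth can-i --quiet` exits 0 for "yes" and non-zero for "no".
check_viewers_binding() {
  local user="$1"
  # Expected "yes": the built-in view ClusterRole allows listing pods.
  kubectl auth can-i list pods -n kube-system --as="$user" --as-group=viewers --quiet || {
    echo "viewers cannot list pods" >&2
    return 1
  }
  # Expected "no": view does not allow creating namespaces.
  if kubectl auth can-i create namespaces --as="$user" --as-group=viewers --quiet; then
    echo "viewers can unexpectedly create namespaces" >&2
    return 1
  fi
  echo "viewers binding OK for $user"
}
```

Usage: check_viewers_binding "$(aks_cluster_user teleport-demo cluster1)".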

Step 4 - Accessing Kubernetes clusters through Okta Identity

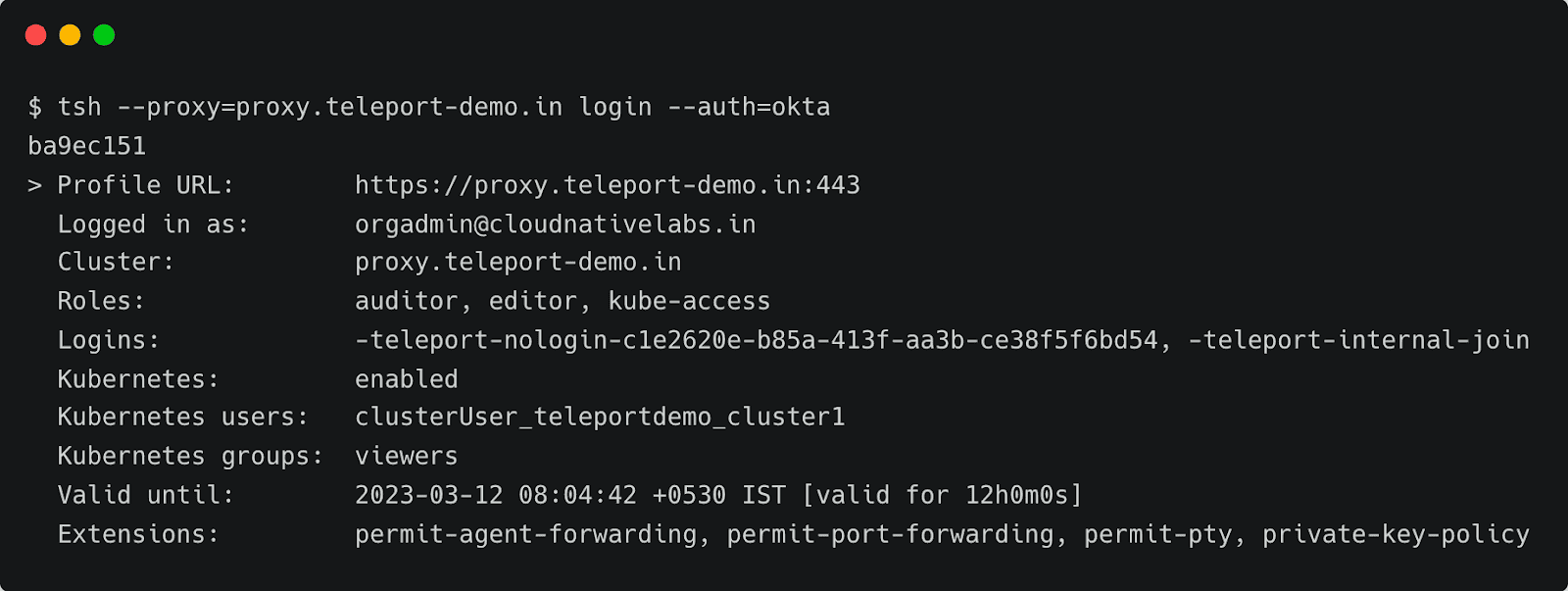

It’s time to test the configuration by signing into Teleport as an Okta user and then using the tsh CLI to list the registered clusters.

```bash
tsh --proxy=proxy.teleport-demo.in login --auth=okta
```

This opens a browser window where you enter your Okta credentials. Once you log in, the CLI confirms the identity:

```
> Profile URL: https://proxy.teleport-demo.in:443
Logged in as: <Okta user email>
Cluster: proxy.teleport-demo.in
Roles: auditor, editor, kube-access
Logins: -teleport-nologin-c1e2620e-b85a-413f-aa3b-ce38f5f6bd54, -teleport-internal-join
Kubernetes: enabled
Kubernetes users: clusterUser_teleportdemo_cluster1
Kubernetes groups: viewers
Valid until: 2023-03-12 08:04:42 +0530 IST [valid for 12h0m0s]
Extensions: permit-agent-forwarding, permit-port-forwarding, permit-pty, private-key-policy
```
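To check from a script that the Okta session picked up the expected Kubernetes groups, the profile fields shown above can be read from tsh status, which prints a similar profile summary. The parsing below assumes the "Field: value" layout shown in this output and a comma-separated group list; it is a sketch rather than a stable interface, and profile_field and require_kube_group are hypothetical names.

```bash
# Hypothetical helper: print the value of a "Field: value" line from tsh status.
profile_field() {
  tsh status | awk -v key="$1" '
    {
      line = $0
      sub(/^[ >]*/, "", line)              # strip leading spaces and the ">" marker
      if (index(line, key ":") == 1) {
        value = substr(line, length(key) + 2)
        sub(/^[ \t]+/, "", value)          # trim whitespace after the colon
        print value
        exit
      }
    }'
}

# Hypothetical helper: succeed only if the session's Kubernetes groups include $1.
require_kube_group() {
  profile_field "Kubernetes groups" | tr ',' '\n' | sed 's/^ *//; s/ *$//' | grep -qx "$1"
}
```

For this session, require_kube_group viewers should succeed, while any group not listed in the profile makes it fail.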

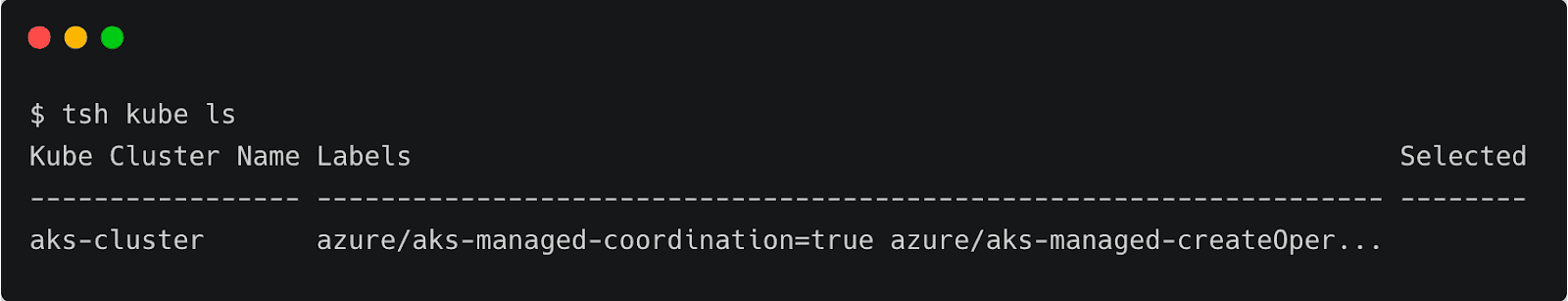

Notice that you are now logged in as the Okta user, with the roles listed above. Now, let's list the registered Kubernetes clusters and log in to the AKS cluster running in Azure.

tsh kube ls

Kube Cluster Name Labels Selected

----------------- ------------------------------------------------------------------- --------

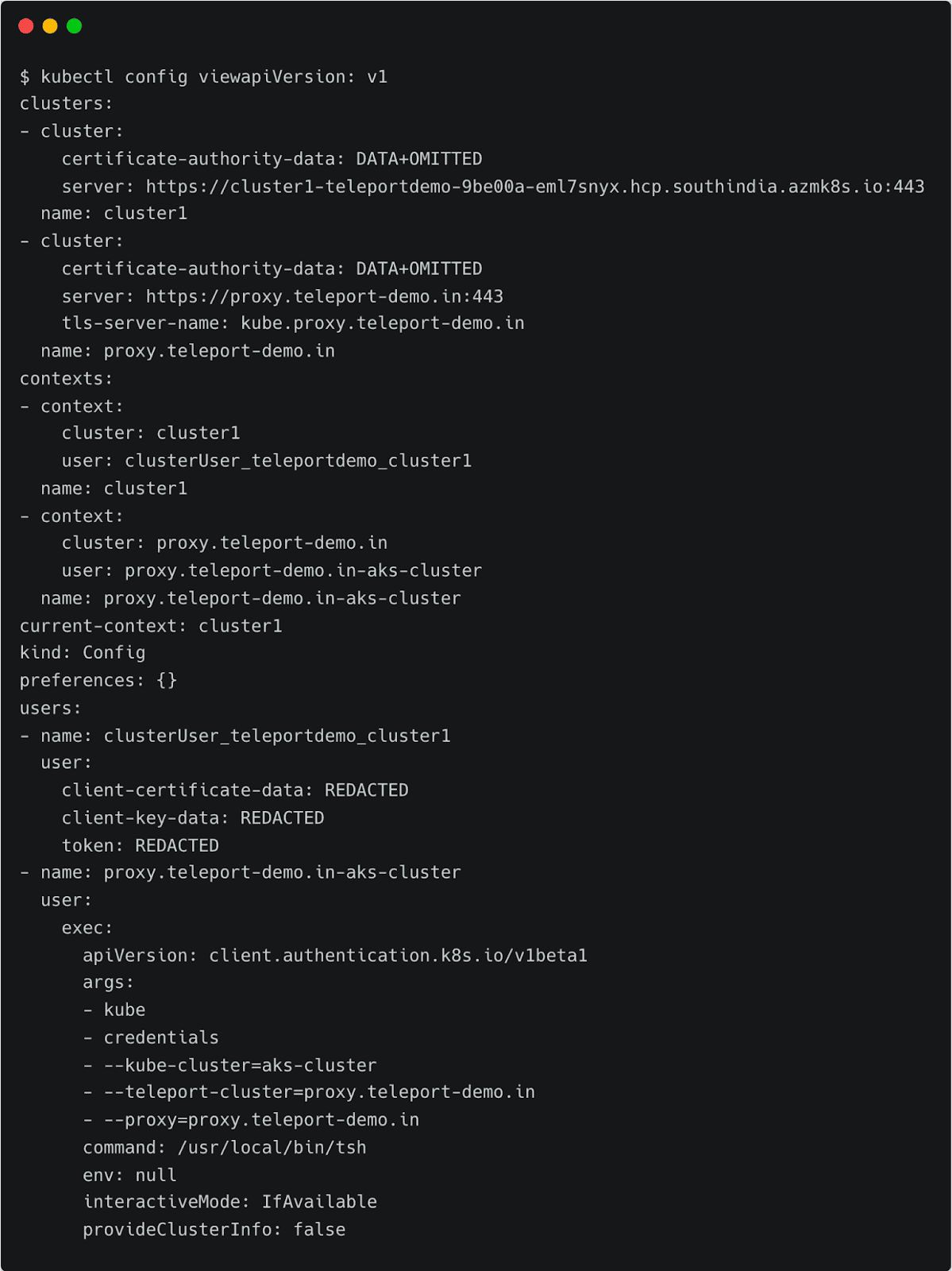

aks-cluster azure/aks-managed-coordination=true azure/aks-managed-createOper...At this point, Teleport client has added another context into the original kubeconfig file. You can verify it by running the following command:

kubectl config view

Notice that the current context now points to proxy.teleport-demo.in-aks-cluster. You can keep using kubectl, the standard Kubernetes CLI, to access the cluster transparently through Teleport's proxy server.
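A script that runs kubectl after this point can guard against accidentally using the direct AKS credentials by checking the current context first. The sketch below assumes the "<Teleport cluster>-<Kubernetes cluster>" naming seen in the context name above; teleport_kube_context and require_teleport_context are hypothetical helpers.

```bash
# Hypothetical helper: build the context name tsh is assumed to use,
# e.g. proxy.teleport-demo.in + aks-cluster -> proxy.teleport-demo.in-aks-cluster.
teleport_kube_context() {
  printf '%s-%s\n' "$1" "$2"
}

# Fail unless kubectl currently points at the Teleport-managed context.
require_teleport_context() {
  local expected current
  expected="$(teleport_kube_context "$1" "$2")"
  current="$(kubectl config current-context)" || return 1
  if [ "$current" != "$expected" ]; then
    echo "current context is $current, expected $expected" >&2
    return 1
  fi
}
```

Usage: require_teleport_context proxy.teleport-demo.in aks-cluster && kubectl get pods -n kube-system.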

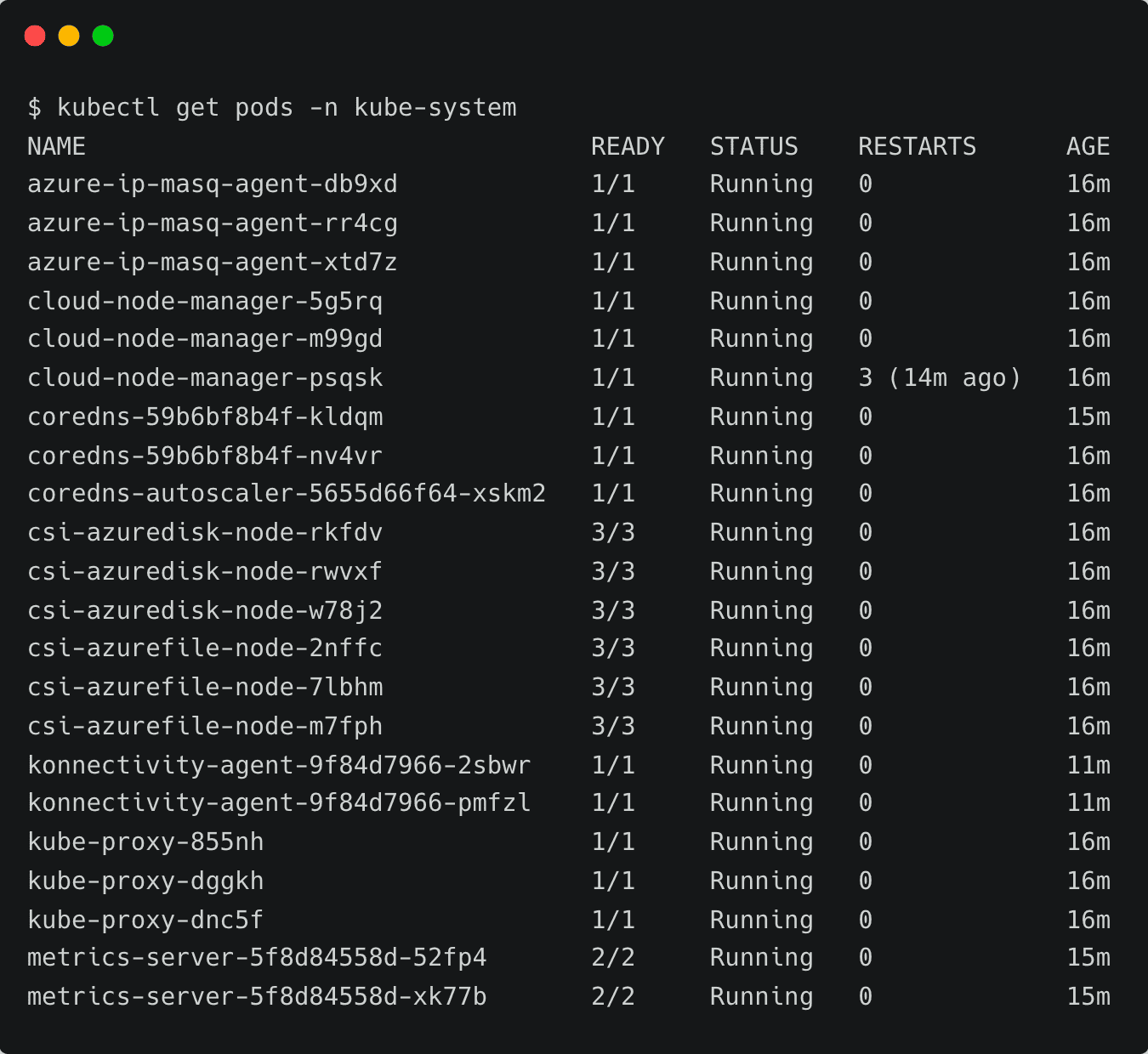

Let’s try to list all the pods running in the kube-system namespace.

```bash
kubectl get pods -n kube-system
```

```
NAME                                  READY   STATUS    RESTARTS      AGE
azure-ip-masq-agent-db9xd             1/1     Running   0             16m
azure-ip-masq-agent-rr4cg             1/1     Running   0             16m
azure-ip-masq-agent-xtd7z             1/1     Running   0             16m
cloud-node-manager-5g5rq              1/1     Running   0             16m
cloud-node-manager-m99gd              1/1     Running   0             16m
cloud-node-manager-psqsk              1/1     Running   3 (14m ago)   16m
coredns-59b6bf8b4f-kldqm              1/1     Running   0             15m
coredns-59b6bf8b4f-nv4vr              1/1     Running   0             16m
coredns-autoscaler-5655d66f64-xskm2   1/1     Running   0             16m
csi-azuredisk-node-rkfdv              3/3     Running   0             16m
csi-azuredisk-node-rwvxf              3/3     Running   0             16m
csi-azuredisk-node-w78j2              3/3     Running   0             16m
csi-azurefile-node-2nffc              3/3     Running   0             16m
csi-azurefile-node-7lbhm              3/3     Running   0             16m
csi-azurefile-node-m7fph              3/3     Running   0             16m
konnectivity-agent-9f84d7966-2sbwr    1/1     Running   0             11m
konnectivity-agent-9f84d7966-pmfzl    1/1     Running   0             11m
kube-proxy-855nh                      1/1     Running   0             16m
kube-proxy-dggkh                      1/1     Running   0             16m
kube-proxy-dnc5f                      1/1     Running   0             16m
metrics-server-5f8d84558d-52fp4       2/2     Running   0             15m
metrics-server-5f8d84558d-xk77b       2/2     Running   0             15m
```

Since the viewers group is bound to the view ClusterRole, we can list the pods. To verify the policy, let's try to create a namespace.

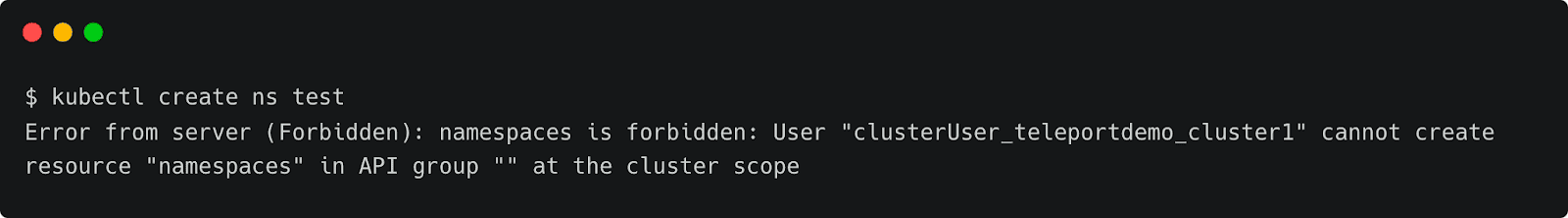

```bash
kubectl create ns test
```

```
Error from server (Forbidden): namespaces is forbidden: User "clusterUser_teleportdemo_cluster1" cannot create resource "namespaces" in API group "" at the cluster scope
```

The request fails because the view ClusterRole does not grant permission to create resources.
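Instead of probing with a write that fails, kubectl auth can-i asks the API server whether the current identity may perform an action. Run through the Teleport context, it should report what the Okta user's mapped identity can do, assuming Teleport forwards this access review like any other API request. The helper name permission_report is hypothetical, and the verb and resource pairs are only examples.

```bash
# Hypothetical helper: report which actions the current kubectl identity may
# perform. Each argument is "VERB RESOURCE"; `kubectl auth can-i --quiet`
# exits 0 for "yes" and non-zero for "no".
permission_report() {
  local check
  for check in "$@"; do
    # Intentionally unquoted so "create namespaces" splits into verb and resource.
    if kubectl auth can-i $check --quiet; then
      echo "allowed: $check"
    else
      echo "denied:  $check"
    fi
  done
}
```

With the view ClusterRole bound as above, permission_report "list pods" "get deployments" "create namespaces" "delete pods" would be expected to allow the two read actions and deny the two write actions.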

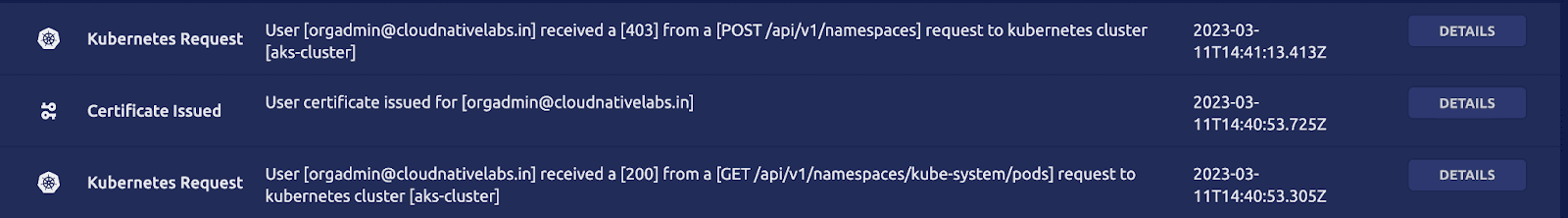

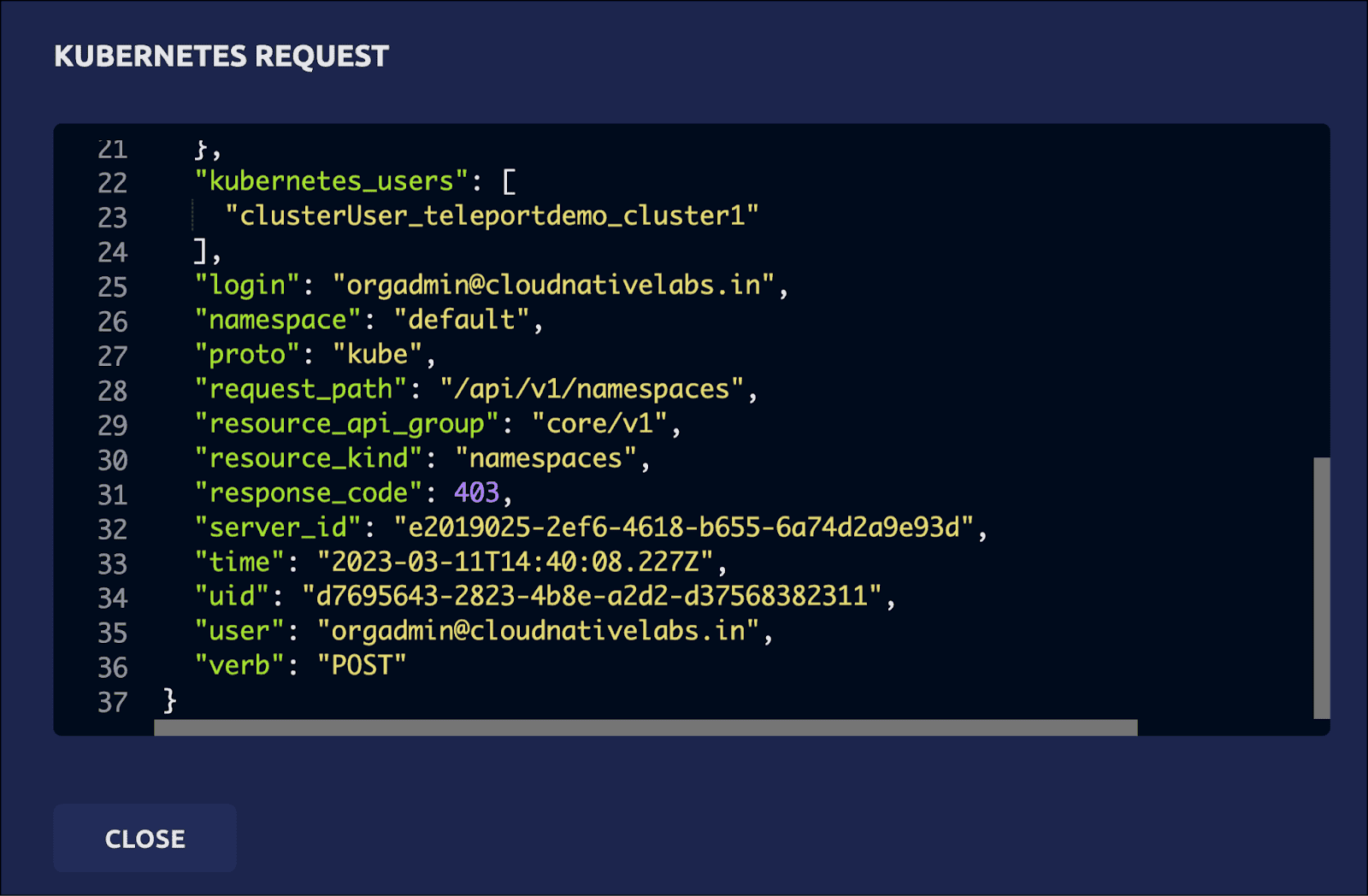

Because the request violates the access policy defined by RBAC, it also shows up in the Teleport audit log, and any kubectl exec sessions are recorded as well.

The event details show that a POST request was made to the Kubernetes API server to create the namespace and that the request was denied.

We can define fine-grained RBAC policies that map Okta groups to Teleport roles, and Teleport roles to Kubernetes users and groups. This gives cluster administrators and DevOps teams precise control over who can access which Kubernetes resources.

In this tutorial, we learned how to use Okta as an SSO provider for Teleport to define access policies for a cluster running on Azure Kubernetes Service (AKS).

Try using Teleport to connect your Okta directory to your AKS cluster by signing up for our 14-day Teleport Cloud trial.