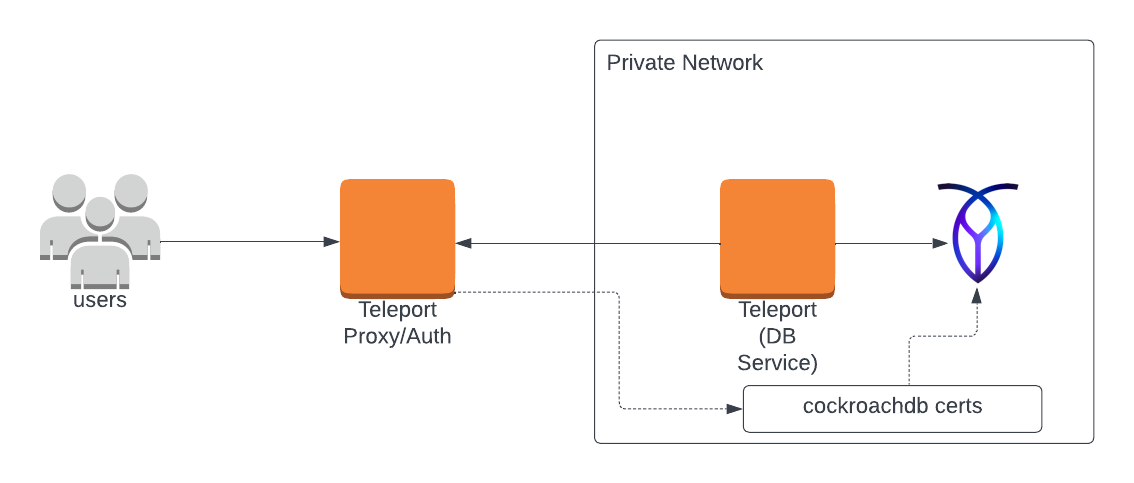

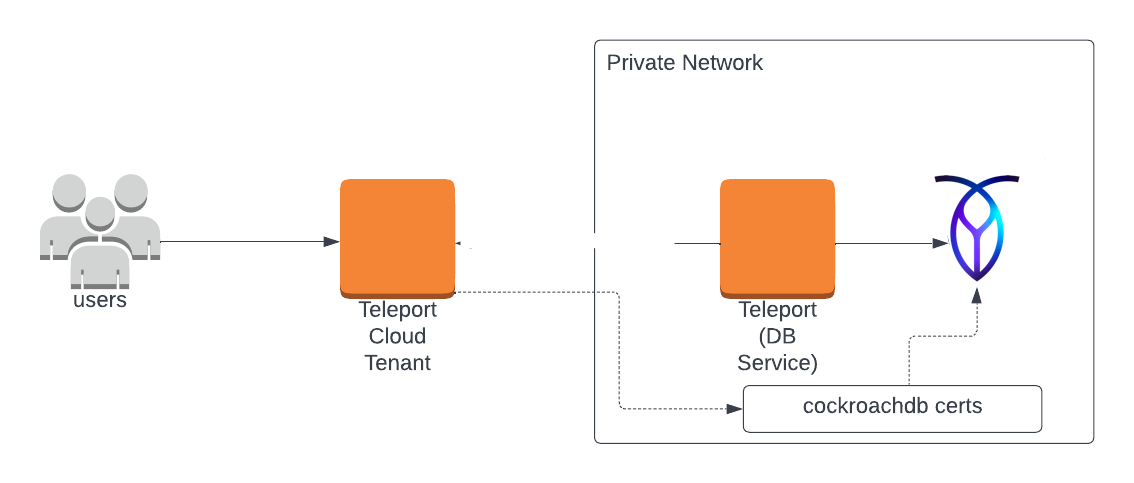

Database Access with Self-Hosted CockroachDB

Teleport can provide secure access to CockroachDB via the Teleport Database Service. This allows for fine-grained access control through Teleport's RBAC.

In this guide, you will:

- Configure a CockroachDB database with mutual TLS authentication.

- Join the CockroachDB database to your Teleport cluster.

- Connect to the CockroachDB database via the Teleport Database Service.


Prerequisites

- A running Teleport cluster version 14.3.33 or above. If you want to get started with Teleport, sign up for a free trial or set up a demo environment.

- The tctl admin tool and tsh client tool. Visit Installation for instructions on downloading tctl and tsh.

- A CockroachDB cluster.

- A host, e.g., an Amazon EC2 instance, where you will run the Teleport Database Service.

- To check that you can connect to your Teleport cluster, sign in with tsh login, then verify that you can run tctl commands using your current credentials. tctl is supported on macOS and Linux machines. For example:

$ tsh login --proxy=teleport.example.com [email protected]

$ tctl status

# Cluster teleport.example.com

# Version 14.3.33

# CA pin sha256:abdc1245efgh5678abdc1245efgh5678abdc1245efgh5678abdc1245efgh5678

If you can connect to the cluster and run the tctl status command, you can use your current credentials to run subsequent tctl commands from your workstation. If you host your own Teleport cluster, you can also run tctl commands on the computer that hosts the Teleport Auth Service for full permissions.

- A certificate authority to issue CockroachDB certificates for nodes in your CockroachDB cluster.

Why do I need my own CA?

Distributed databases like CockroachDB use mTLS for node-to-node communication. Teleport requires that you have your own CA to issue certificates for node-to-node mTLS communication.

Teleport uses a split-CA architecture for database access. The Teleport db CA issues server certificates and the db_client CA issues client certificates.

Databases are configured to trust the Teleport db_client CA for client authentication, but not the db CA. Additionally, Teleport only issues ephemeral db_client CA certificates.

When a CockroachDB node connects to another CockroachDB node, it must present a certificate that the other node trusts for client authentication. Since Teleport does not issue long-lived db_client certificates, the node needs to have a long-lived certificate issued by another CA that its peer node trusts.

The split db and db_client CA architecture was introduced as a security fix in Teleport versions 13.4.17, 14.3.7, and 15.

See Database CA Migrations for more information.

Step 1/4. Set up the Teleport Database Service

The Database Service requires a valid join token to join your Teleport cluster.

Run the following tctl command and save the token output in /tmp/token on the server that will run the Database Service:

$ tctl tokens add --type=db --format=text

abcd123-insecure-do-not-use-this
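Because the join token is a secret, it is worth storing it in a file that only the current user can read. The sketch below is one way to do that; the function name save_join_token is hypothetical and not part of Teleport, and the demo uses the placeholder token from the sample output above rather than a real one.

```shell
# Hypothetical helper (not a Teleport command): read a join token from stdin
# and store it in a file readable only by the current user.
save_join_token() {
  local token_file="$1"
  (
    umask 077                # files created in this subshell get mode 0600
    cat > "$token_file"
  )
  chmod 600 "$token_file"    # also tighten the mode if the file already existed
}

# Demo with the placeholder token from the sample output (not a real token).
demo_token_file="$(mktemp)"
printf 'abcd123-insecure-do-not-use-this\n' | save_join_token "$demo_token_file"
cat "$demo_token_file"
```

On the Database Service host you could then pipe the real command into the helper, for example `tctl tokens add --type=db --format=text | save_join_token /tmp/token`. This assumes the command prints only the token, as the sample output above suggests.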

Install and configure Teleport where you will run the Teleport Database Service:

- Linux Server

- Kubernetes Cluster

Select an edition, then follow the instructions for that edition to install Teleport.

- Teleport Community Edition

- Teleport Enterprise

- Teleport Enterprise Cloud

For Teleport Community Edition, the following command updates the repository for the package manager on the local operating system and installs the provided Teleport version:

$ curl https://cdn.teleport.dev/install-v14.3.33.sh | bash -s 14.3.33

For Teleport Enterprise, follow the instructions for your platform:

- Debian 9+/Ubuntu 16.04+ (apt)

- Amazon Linux 2/RHEL 7 (yum)

- Amazon Linux 2/RHEL 7 (zypper)

- Amazon Linux 2023/RHEL 8+ (dnf)

- SLES 12 SP5+ and 15 SP5+ (zypper)

- Tarball

# Download Teleport's PGP public key

$ sudo curl https://apt.releases.teleport.dev/gpg \

-o /usr/share/keyrings/teleport-archive-keyring.asc

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport APT repository for v14. You'll need to update this

# file for each major release of Teleport.

$ echo "deb [signed-by=/usr/share/keyrings/teleport-archive-keyring.asc] \

https://apt.releases.teleport.dev/${ID?} ${VERSION_CODENAME?} stable/v14" \

| sudo tee /etc/apt/sources.list.d/teleport.list > /dev/null

$ sudo apt-get update

$ sudo apt-get install teleport-ent

For FedRAMP/FIPS-compliant installations, install the teleport-ent-fips package instead:

$ sudo apt-get install teleport-ent-fips

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport YUM repository for v14. You'll need to update this

# file for each major release of Teleport.

# First, get the major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

$ sudo yum install -y yum-utils

$ sudo yum-config-manager --add-repo "$(rpm --eval "https://yum.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/v14/teleport.repo")"

$ sudo yum install teleport-ent

#

# Tip: Add /usr/local/bin to path used by sudo (so 'sudo tctl users add' will work as per the docs)

# echo "Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin" > /etc/sudoers.d/secure_path

For FedRAMP/FIPS-compliant installations, install the teleport-ent-fips package instead:

$ sudo yum install teleport-ent-fips

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport Zypper repository for v14. You'll need to update this

# file for each major release of Teleport.

# First, get the OS major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

# Use zypper to add the teleport RPM repo

$ sudo zypper addrepo --refresh --repo $(rpm --eval "https://zypper.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/v14/teleport-zypper.repo")

$ sudo zypper install teleport-ent

#

# Tip: Add /usr/local/bin to path used by sudo (so 'sudo tctl users add' will work as per the docs)

# echo "Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin" > /etc/sudoers.d/secure_path

For FedRAMP/FIPS-compliant installations, install the teleport-ent-fips package instead:

$ sudo zypper install teleport-ent-fips

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport YUM repository for v14. You'll need to update this

# file for each major release of Teleport.

# First, get the major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

# Use the dnf config manager plugin to add the teleport RPM repo

$ sudo dnf config-manager --add-repo "$(rpm --eval "https://yum.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/v14/teleport.repo")"

# Install teleport

$ sudo dnf install teleport-ent

# Tip: Add /usr/local/bin to path used by sudo (so 'sudo tctl users add' will work as per the docs)

# echo "Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin" > /etc/sudoers.d/secure_path

For FedRAMP/FIPS-compliant installations, install the teleport-ent-fips package instead:

$ sudo dnf install teleport-ent-fips

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport Zypper repository.

# First, get the OS major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

# Use Zypper to add the teleport RPM repo

$ sudo zypper addrepo --refresh --repo $(rpm --eval "https://zypper.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/v14/teleport-zypper.repo")

# Install teleport

$ sudo zypper install teleport-ent

For FedRAMP/FIPS-compliant installations, install the teleport-ent-fips package instead:

$ sudo zypper install teleport-ent-fips
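Several of the repository steps above reduce $VERSION_ID to its major component before building the repository URL. The following sketch isolates that one line so you can see what it does to typical values. The function name os_major_version is hypothetical, and the sample version strings are illustrative rather than read from a real /etc/os-release.

```shell
# Hypothetical helper wrapping the same grep expression used in the
# repository steps: keep only the leading run of digits.
os_major_version() {
  echo "$1" | grep -Eo "^[0-9]+"
}

os_major_version "7.9"    # prints 7
os_major_version "15.5"   # prints 15
os_major_version "2023"   # prints 2023
```

A value that has no minor component passes through unchanged, so the same line is safe to run on any of the distributions listed.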

In the example commands below, replace $SYSTEM_ARCH with the appropriate value (amd64, arm64, or arm).

$ curl https://cdn.teleport.dev/teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-bin.tar.gz.sha256

# <checksum> <filename>

$ curl -O https://cdn.teleport.dev/teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-bin.tar.gz

$ shasum -a 256 teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-bin.tar.gz

# Verify that the checksums match

$ tar -xvf teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-bin.tar.gz

$ cd teleport-ent

$ sudo ./install

For FedRAMP/FIPS-compliant installations of Teleport Enterprise, package URLs will be slightly different:

$ curl https://cdn.teleport.dev/teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-fips-bin.tar.gz.sha256

# <checksum> <filename>

$ curl -O https://cdn.teleport.dev/teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-fips-bin.tar.gz

$ shasum -a 256 teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-fips-bin.tar.gz

# Verify that the checksums match

$ tar -xvf teleport-ent-v14.3.33-linux-$SYSTEM_ARCH-fips-bin.tar.gz

$ cd teleport-ent

$ sudo ./install
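The tarball steps ask you to compare the published checksum with the one you compute locally. If you would rather not compare the two strings by eye, a small helper can do it. This is a sketch under assumptions: the function name verify_sha256 is hypothetical, and it expects the published line in the "<checksum> <filename>" form shown above.

```shell
# Hypothetical helper: succeed only if the file's SHA-256 digest equals the
# first field of a "<checksum> <filename>" line.
verify_sha256() {
  local file="$1" expected_line="$2"
  local expected actual
  expected="${expected_line%% *}"    # keep everything before the first space
  if command -v sha256sum >/dev/null 2>&1; then
    actual="$(sha256sum "$file" | awk '{print $1}')"
  else
    actual="$(shasum -a 256 "$file" | awk '{print $1}')"
  fi
  [ "$expected" = "$actual" ]
}

# Demo on an empty temporary file, whose SHA-256 digest is well known.
demo_archive="$(mktemp)"
verify_sha256 "$demo_archive" \
  "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855  demo" \
  && echo "checksum matches"
```

For the real archive, pass the downloaded file name as the first argument and the output of the curl command that fetches the .sha256 file as the second, and only run tar and ./install if the function succeeds.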

OS repository channels

The following channels are available for APT, YUM, and Zypper repos. They may be used in place of stable/v14 anywhere in the Teleport documentation.

| Channel name | Description |
|---|---|
| stable/<major> | Receives releases for the specified major release line, e.g. v14 |
| stable/cloud | Rolling channel that receives releases compatible with current Cloud version |
| stable/rolling | Rolling channel that receives all published Teleport releases |

For Teleport Enterprise Cloud, follow the instructions for your platform:

- Debian 9+/Ubuntu 16.04+ (apt)

- Amazon Linux 2/RHEL 7/CentOS 7 (yum)

- Amazon Linux 2023/RHEL 8+ (dnf)

- SLES 12 SP5+ and 15 SP5+ (zypper)

Add the Teleport repository to your repository list:

# Download Teleport's PGP public key

$ sudo curl https://apt.releases.teleport.dev/gpg \

-o /usr/share/keyrings/teleport-archive-keyring.asc

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport APT repository for cloud.

$ echo "deb [signed-by=/usr/share/keyrings/teleport-archive-keyring.asc] \

https://apt.releases.teleport.dev/${ID?} ${VERSION_CODENAME?} stable/cloud" \

| sudo tee /etc/apt/sources.list.d/teleport.list > /dev/null

# Provide your Teleport domain to query the latest compatible Teleport version

$ export TELEPORT_DOMAIN=example.teleport.com

$ export TELEPORT_VERSION="$(curl https://$TELEPORT_DOMAIN/v1/webapi/automaticupgrades/channel/default/version | sed 's/v//')"

# Update the repo and install Teleport and the Teleport updater

$ sudo apt-get update

$ sudo apt-get install "teleport-ent=$TELEPORT_VERSION" teleport-ent-updater

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport YUM repository for cloud.

# First, get the OS major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

$ sudo yum install -y yum-utils

$ sudo yum-config-manager --add-repo "$(rpm --eval "https://yum.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/cloud/teleport-yum.repo")"

# Provide your Teleport domain to query the latest compatible Teleport version

$ export TELEPORT_DOMAIN=example.teleport.com

$ export TELEPORT_VERSION="$(curl https://$TELEPORT_DOMAIN/v1/webapi/automaticupgrades/channel/default/version | sed 's/v//')"

# Install Teleport and the Teleport updater

$ sudo yum install "teleport-ent-$TELEPORT_VERSION" teleport-ent-updater

# Tip: Add /usr/local/bin to path used by sudo (so 'sudo tctl users add' will work as per the docs)

# echo "Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin" > /etc/sudoers.d/secure_path

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport YUM repository for cloud.

# First, get the OS major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

# Use the dnf config manager plugin to add the teleport RPM repo

$ sudo dnf config-manager --add-repo "$(rpm --eval "https://yum.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/cloud/teleport-yum.repo")"

# Provide your Teleport domain to query the latest compatible Teleport version

$ export TELEPORT_DOMAIN=example.teleport.com

$ export TELEPORT_VERSION="$(curl https://$TELEPORT_DOMAIN/v1/webapi/automaticupgrades/channel/default/version | sed 's/v//')"

# Install Teleport and the Teleport updater

$ sudo dnf install "teleport-ent-$TELEPORT_VERSION" teleport-ent-updater

# Tip: Add /usr/local/bin to path used by sudo (so 'sudo tctl users add' will work as per the docs)

# echo "Defaults secure_path = /sbin:/bin:/usr/sbin:/usr/bin:/usr/local/bin" > /etc/sudoers.d/secure_path

# Source variables about OS version

$ source /etc/os-release

# Add the Teleport Zypper repository for cloud.

# First, get the OS major version from $VERSION_ID so this fetches the correct

# package version.

$ VERSION_ID=$(echo $VERSION_ID | grep -Eo "^[0-9]+")

# Use Zypper to add the teleport RPM repo

$ sudo zypper addrepo --refresh --repo $(rpm --eval "https://zypper.releases.teleport.dev/$ID/$VERSION_ID/Teleport/%{_arch}/stable/cloud/teleport-zypper.repo")

# Provide your Teleport domain to query the latest compatible Teleport version

$ export TELEPORT_DOMAIN=example.teleport.com

$ export TELEPORT_VERSION="$(curl https://$TELEPORT_DOMAIN/v1/webapi/automaticupgrades/channel/default/version | sed 's/v//')"

# Install Teleport and the Teleport updater

$ sudo zypper install "teleport-ent-$TELEPORT_VERSION" teleport-ent-updater


Is my Teleport instance compatible with Teleport Enterprise Cloud?

Before installing a teleport binary with a version besides v16,

read our compatibility rules to ensure that the binary is compatible with

Teleport Enterprise Cloud.

Teleport uses Semantic Versioning. Version numbers

include a major version, minor version, and patch version, separated by dots.

When running multiple teleport binaries within a cluster, the following rules

apply:

- Patch and minor versions are always compatible, for example, any 8.0.1 component will work with any 8.0.3 component and any 8.1.0 component will work with any 8.3.0 component.

- Servers support clients that are one major version behind, but do not support clients that are on a newer major version. For example, an 8.x.x Proxy Service instance is compatible with 7.x.x agents and 7.x.x tsh, but we don't guarantee that a 9.x.x agent will work with an 8.x.x Proxy Service instance. This also means you must not attempt to upgrade from 6.x.x straight to 8.x.x. You must upgrade to 7.x.x first.

- Proxy Service instances and agents do not support Auth Service instances that are on an older major version, and will fail to connect to older Auth Service instances by default. You can override version checks by passing --skip-version-check when starting agents and Proxy Service instances.
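The server and client rule above can be sketched as a small check on major versions. This is an illustration of the rule as stated in this guide, not a Teleport tool: the function names major_of and client_supported are hypothetical, and the check treats "not guaranteed" combinations as unsupported.

```shell
# Hypothetical helpers illustrating the compatibility rule described above.

# Print the major component of a version such as 14.3.33 or v14.3.33.
major_of() {
  local v="${1#v}"
  echo "${v%%.*}"
}

# Succeed if a client (agent or tsh) is on the same major version as the
# server, or exactly one major version behind it.
client_supported() {
  local server_major client_major diff
  server_major="$(major_of "$1")"
  client_major="$(major_of "$2")"
  diff=$(( server_major - client_major ))
  [ "$diff" -eq 0 ] || [ "$diff" -eq 1 ]
}

client_supported 8.1.0 7.3.2 && echo "supported"        # prints "supported"
client_supported 8.1.0 9.0.0 || echo "not guaranteed"   # prints "not guaranteed"
```

The first argument is the server version (for example, the Proxy Service) and the second is the client version. Minor and patch components are ignored because they are always compatible within a major version.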

On the host where you will run the Teleport Database Service, start Teleport with the appropriate configuration.

Note that a single Teleport process can run multiple different services, for

example multiple Database Service agents as well as the SSH Service or Application

Service. The step below will overwrite an existing configuration file, so if

you're running multiple services add --output=stdout to print the config in

your terminal, and manually adjust /etc/teleport.yaml.

Generate a configuration file at /etc/teleport.yaml for the Database Service:

- Teleport Enterprise/Enterprise Cloud

- Teleport Community Edition

$ sudo teleport db configure create \

-o file \

--token=/tmp/token \

--proxy=teleport.example.com:443 \

--name=roach \

--protocol=cockroachdb \

--uri=roach.example.com:26257 \

--labels=env=dev

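For a Teleport Enterprise Cloud tenant such as mytenant.teleport.sh, pass the tenant address as the proxy instead:

$ sudo teleport db configure create \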

-o file \

--token=/tmp/token \

--proxy=mytenant.teleport.sh:443 \

--name=roach \

--protocol=cockroachdb \

--uri=roach.example.com:26257 \

--labels=env=dev

Configure the Teleport Database Service to start automatically when the host boots up by creating a systemd service for it. The instructions depend on how you installed the Teleport Database Service.

- Package Manager

- TAR Archive

On the host where you will run the Teleport Database Service, enable and start Teleport:

$ sudo systemctl enable teleport

$ sudo systemctl start teleport

On the host where you will run the Teleport Database Service, create a systemd service configuration for Teleport, enable the Teleport service, and start Teleport:

$ sudo teleport install systemd -o /etc/systemd/system/teleport.service

$ sudo systemctl enable teleport

$ sudo systemctl start teleport

You can check the status of the Teleport Database Service with systemctl status teleport

and view its logs with journalctl -fu teleport.

Teleport provides Helm charts for installing the Teleport Database Service in Kubernetes Clusters.

Set up the Teleport Helm repository.

Allow Helm to install charts that are hosted in the Teleport Helm repository:

$ helm repo add teleport https://charts.releases.teleport.dev

Update the cache of charts from the remote repository so you can upgrade to all available releases:

$ helm repo update

- Self-Hosted

- Cloud-Hosted

Install the Teleport Kube Agent into your Kubernetes Cluster with the Teleport Database Service configuration.

$ JOIN_TOKEN=$(cat /tmp/token)

$ helm install teleport-kube-agent teleport/teleport-kube-agent \

--create-namespace \

--namespace teleport-agent \

--set roles=db \

--set proxyAddr=teleport.example.com:443 \

--set authToken=${JOIN_TOKEN?} \

--set "databases[0].name=roach" \

--set "databases[0].uri=roach.example.com:26257" \

--set "databases[0].protocol=cockroachdb" \

--set "databases[0].static_labels.env=dev" \

--version 14.3.33

For a Cloud-hosted cluster, install the Teleport Kube Agent into your Kubernetes Cluster with the Teleport Database Service configuration, using your tenant address as proxyAddr.

$ JOIN_TOKEN=$(cat /tmp/token)

$ helm install teleport-kube-agent teleport/teleport-kube-agent \

--create-namespace \

--namespace teleport-agent \

--set roles=db \

--set proxyAddr=mytenant.teleport.sh:443 \

--set authToken=${JOIN_TOKEN?} \

--set "databases[0].name=roach" \

--set "databases[0].uri=roach.example.com:26257" \

--set "databases[0].protocol=cockroachdb" \

--set "databases[0].static_labels.env=dev" \

--version 16.4.3

Make sure that the Teleport agent pod is running. You should see one

teleport-kube-agent pod with a single ready container:

$ kubectl -n teleport-agent get pods

NAME READY STATUS RESTARTS AGE

teleport-kube-agent-0 1/1 Running 0 32s

A single Teleport process can run multiple services, for example multiple Database Service instances as well as other services such as the SSH Service or Application Service.

Step 2/4. Create a Teleport user

To modify an existing user to provide access to the Database Service, see Database Access Controls.

- Teleport Community Edition

- Teleport Enterprise/Enterprise Cloud

Create a local Teleport user with the built-in access role:

$ tctl users add \

--roles=access \

--db-users="*" \

--db-names="*" \

alice

Create a local Teleport user with the built-in access and requester roles:

$ tctl users add \

--roles=access,requester \

--db-users="*" \

--db-names="*" \

alice

| Flag | Description |

|---|---|

--roles | List of roles to assign to the user. The builtin access role allows them to connect to any database server registered with Teleport. |

--db-users | List of database usernames the user will be allowed to use when connecting to the databases. A wildcard allows any user. |

--db-names | List of logical databases (aka schemas) the user will be allowed to connect to within a database server. A wildcard allows any database. |

Database names are only enforced for PostgreSQL and MongoDB databases.

For more detailed information about database access controls and how to restrict access see RBAC documentation.

Step 3/4. Configure CockroachDB

Create a CockroachDB user

Teleport uses mutual TLS authentication with CockroachDB. Client certificate authentication is available to all CockroachDB users. If you don't have a CockroachDB user yet, connect to your CockroachDB cluster and create one:

CREATE USER alice WITH PASSWORD NULL;

The WITH PASSWORD NULL clause prevents the user from using password auth and

mandates client certificate auth.

Make sure to assign the user proper permissions within the database cluster. Refer to Create User in the CockroachDB documentation for more information.

Set up mutual TLS

To set up mutual TLS authentication, you need to make sure that:

- Teleport trusts certificates presented by CockroachDB nodes.

- CockroachDB nodes trust client certificates signed by both your CockroachDB CA

and your Teleport cluster's

db_clientCA.

CockroachDB nodes need to trust the Teleport db_client CA so that Teleport

users can authenticate to your CockroachDB cluster as clients.

The CockroachDB CA needs to be trusted by each CockroachDB node so that nodes can authenticate themselves as clients to other nodes in the CockroachDB cluster. This is because CockroachDB uses mTLS for node-to-node communication.

- Nodes serving your CockroachDB CA certs

- Nodes serving your Teleport CA certs

In the first configuration (nodes serving your CockroachDB CA certs), your CockroachDB CA is used to issue the server cert node.crt for each CockroachDB node.

This configuration is simpler to set up, because an existing CockroachDB cluster

already has node.crt issued for each node and you only need to configure the

CockroachDB nodes to trust your Teleport db_client CA.

Another benefit is that your CockroachDB nodes will continue to serve the same

CockroachDB CA-issued cert, rather than serving a new cert signed by Teleport's

db CA, so you don't have to configure other clients to trust a new CA.

Copy your CockroachDB CA cert to ca-client.crt in the certs directory of

each CockroachDB node:

$ CERTS_DIR=/path/to/cockroachdb/certs/dir

$ cp "${CERTS_DIR}/ca.crt" "${CERTS_DIR}/ca-client.crt"

Next, for each CockroachDB node, export Teleport's db_client CA using tctl

(or export it once and copy it to each node) and append the certificate to

ca-client.crt:

$ tctl auth export --type=db-client >> /path/to/cockroachdb/certs/dir/ca-client.crt
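After appending, ca-client.crt should hold your CockroachDB CA cert followed by at least one Teleport db_client CA cert. A quick sanity check is to count the PEM blocks in the file. The sketch below assumes a hypothetical helper named count_pem_certs, and its demo file contains placeholder PEM markers rather than real certificates.

```shell
# Hypothetical helper: count the certificates in a PEM bundle by counting
# their BEGIN markers.
count_pem_certs() {
  grep -c -e '-----BEGIN CERTIFICATE-----' "$1"
}

# Demo bundle with two placeholder blocks (not real certificates).
demo_bundle="$(mktemp)"
cat > "$demo_bundle" <<'EOF'
-----BEGIN CERTIFICATE-----
placeholder-for-cockroachdb-ca
-----END CERTIFICATE-----
-----BEGIN CERTIFICATE-----
placeholder-for-teleport-db-client-ca
-----END CERTIFICATE-----
EOF
count_pem_certs "$demo_bundle"   # prints 2
```

Running the helper against /path/to/cockroachdb/certs/dir/ca-client.crt on a node should report two or more. A count of one suggests that either the copy or the append step did not take effect.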

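Modify the Teleport Database Service configuration to trust your CockroachDB CA. Set ca_cert_file to the path of your CockroachDB CA cert (ca.crt) on the Database Service host:

databases:
- name: "example-cockroachdb"
  protocol: "cockroachdb"
  uri: "cockroachdb.example.com:26257"
  static_labels:
    "env": "example"
  tls:
    ca_cert_file: "/path/to/cockroachdb/certs/dir/ca.crt"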

Now the Teleport Database Service will trust certificates presented by your CockroachDB.

In the second configuration (nodes serving your Teleport CA certs), Teleport's db CA is used to issue the server cert, node.crt, and your own custom CA is used to issue the client certificate, client.node.crt, for each CockroachDB node.

Teleport uses mutual TLS authentication with self-hosted databases. These databases must be configured with Teleport's certificate authority to be able to verify client certificates. They also need a certificate/key pair that Teleport can verify.

If you are using Teleport Cloud, your Teleport user must be allowed to

impersonate the system role Db in order to be able to generate the database

certificate.

Include the following allow rule in your Teleport Cloud user's role:

allow:
  impersonate:
    users: ["Db"]
    roles: ["Db"]

Generate secrets for a CockroachDB node using tctl:

$ tctl auth sign \

--format=cockroachdb \

--host=roach.example.com \

--out=/path/to/cockroachdb/certs/dir \

--ttl=2190h

We recommend using a shorter TTL, but keep in mind that you'll need to update the database server certificate before it expires so that you don't lose the ability to connect. Pick the TTL value that best fits your use case.

The command will produce 4 files:

- ca.crt with Teleport's db certificate authority

- ca-client.crt with Teleport's db_client certificate authority

- node.crt / node.key with the node's certificate and key.

You can specify multiple comma-separated addresses e.g.

--host=roach,node-1,192.168.1.1.

However, you must include the hostname that Teleport will use to connect to the

database.

Do not rename these files as this is how CockroachDB expects them to be named. See Node key and certificates for details.

Prepend your CockroachDB CA's certificate to ca-client.crt.
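One way to do the prepend is to write the combined content to a temporary file and then copy it back over the bundle. The sketch below assumes a hypothetical helper named prepend_cert, and the demo uses short placeholder files instead of real certificates. It also assumes the CA cert file ends with a newline, as PEM files normally do.

```shell
# Hypothetical helper: put the contents of a CA cert file in front of an
# existing PEM bundle.
prepend_cert() {
  local ca_cert="$1" bundle="$2" tmp rc
  tmp="$(mktemp)" || return 1
  # Build the combined file first, then rewrite the bundle in place so it
  # keeps its original owner and mode.
  cat "$ca_cert" "$bundle" > "$tmp" && cat "$tmp" > "$bundle"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Demo with placeholder files (not real certificates).
demo_ca="$(mktemp)"
demo_bundle="$(mktemp)"
printf 'cockroachdb-ca-placeholder\n' > "$demo_ca"
printf 'teleport-db-client-ca-placeholder\n' > "$demo_bundle"
prepend_cert "$demo_ca" "$demo_bundle"
head -n 1 "$demo_bundle"   # prints cockroachdb-ca-placeholder
```

On a real node, the first argument would be the path to your CockroachDB CA certificate and the second would be /path/to/cockroachdb/certs/dir/ca-client.crt.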

Now issue a client certificate for the node using your CockroachDB CA:

$ cockroach cert create-client node \

--certs-dir=/path/to/cockroachdb/certs/dir \

--ca-key=ca-secrets/ca-client.key

Seeing an error message about TLS key mismatch?

If you see an error message like: tls: private key does not match public key,

it likely means you did not prepend your CockroachDB CA cert to ca-client.crt

earlier.

cockroach cert create-client expects the first certificate in ca-client.crt

(in the --certs-dir specified) to be the certificate signed by --ca-key.

Ensure that your CockroachDB CA certificate is the first certificate in

ca-client.crt.

Now copy /path/to/cockroachdb/certs/dir to the CockroachDB node and repeat these steps for all of your other CockroachDB nodes.

Restart your CockroachDB nodes, passing them the directory with generated secrets

via the --certs-dir flag:

$ cockroach start \

--certs-dir=/path/to/cockroachdb/certs/dir \

# other flags...

Alternatively, if the nodes were already started with --certs-dir=/path/to/cockroachdb/certs/dir, you can send a SIGHUP signal to the cockroach process to reload certificates without restarting the node. You must send SIGHUP as the same user that started the cockroach process:

$ pkill -SIGHUP -x cockroach

Step 4/4. Connect

Log in to your Teleport cluster. Your CockroachDB cluster should appear in the list of available databases:

- Self-Hosted

- Cloud

$ tsh login --proxy=teleport.example.com --user=alice

$ tsh db ls

# Name Description Labels

# ----- ------------------- -------

# roach Example CockroachDB env=dev

$ tsh login --proxy=mytenant.teleport.sh --user=alice

$ tsh db ls

# Name Description Labels

# ----- ------------------- -------

# roach Example CockroachDB env=dev

To retrieve credentials for a database and connect to it:

$ tsh db connect roach

You can optionally specify the database user to use by default when connecting to the database server (a database name can be set the same way with --db-name):

$ tsh db connect --db-user=alice roach

Either the cockroach or psql command-line client should be available in PATH

in order to be able to connect.

To log out of the database and remove credentials:

$ tsh db logout roach

Troubleshooting

Unimplemented client encoding error

You may encounter the unimplemented client encoding: "sqlascii" error when

connecting to your CockroachDB database if your psql uses SQL_ASCII encoding.

CockroachDB supports only UTF8 client encoding. To enforce the encoding, set

the following environment variable in the shell running tsh db connect:

export PGCLIENTENCODING='utf-8'

If you are connecting the CockroachDB database with Teleport Connect, add the environment variable to your shell startup scripts and restart the Teleport Connect app.

Unable to cancel a query

If you use a PostgreSQL CLI client like psql and you try to cancel a query with ctrl+c, but it doesn't cancel the query, then you need to connect using a tsh local proxy instead.

When psql cancels a query, it establishes a new connection without TLS certificates. However, Teleport requires TLS certificates not only for authentication, but also to route database connections.

If you enable TLS Routing in Teleport, then tsh db connect will automatically start a local proxy for every connection.

Alternatively, you can connect via Teleport Connect, which also uses a local proxy.

Otherwise, you need to start a tsh local proxy manually using tsh proxy db and connect via the local proxy.

If you have already started a long-running query in a psql session that you

cannot cancel with ctrl+c, you can start a new client session to cancel that

query manually:

First, find the query's process identifier (PID):

SELECT pid,usename,backend_start,query FROM pg_stat_activity WHERE state = 'active';

Next, gracefully cancel the query using its PID. This will send a SIGINT signal to the postgres backend process for that query:

SELECT pg_cancel_backend(<PID>);

You should always try to gracefully terminate a query first, but if graceful cancellation is taking too long, then you can forcefully terminate the query instead. This will send a SIGTERM signal to the postgres backend process for that query:

SELECT pg_terminate_backend(<PID>);

See the PostgreSQL documentation on admin functions for more information about the pg_cancel_backend and pg_terminate_backend functions.

Next steps

- Learn how to restrict access to certain users and databases.

- Learn more about dynamic database registration.

- View the High Availability (HA) guide.

- See the YAML configuration reference for updating dynamic resource matchers or static database definitions.

- Take a look at the full CLI reference.