Best Practices for Secure Infrastructure Access


This post is an excerpt of the recently published tech paper, Best Practices for Secure Infrastructure Access. For the full paper, download Best Practices for Secure Infrastructure Access.

Technologies build on other technologies to compound growth. It’s no coincidence that of the companies with the highest market capitalization within the US, the first non-tech company is the eighth one down: Berkshire Hathaway. Nor is it a coincidence that tech startups can take their valuation into the 10 digits in a flash on the backs of other tech companies. This pace of growth can only be afforded by the innovation of new technology.

Cloud Computing

Over 15 years after the launch of AWS, Gartner still projects 17% industry growth in 2020, to over a quarter trillion dollars in public cloud revenue. The new frontier is no longer the centralized server cluster but the elusive “Edge,” where Gartner predicts 75% of enterprise data will be produced in just five years (2025).

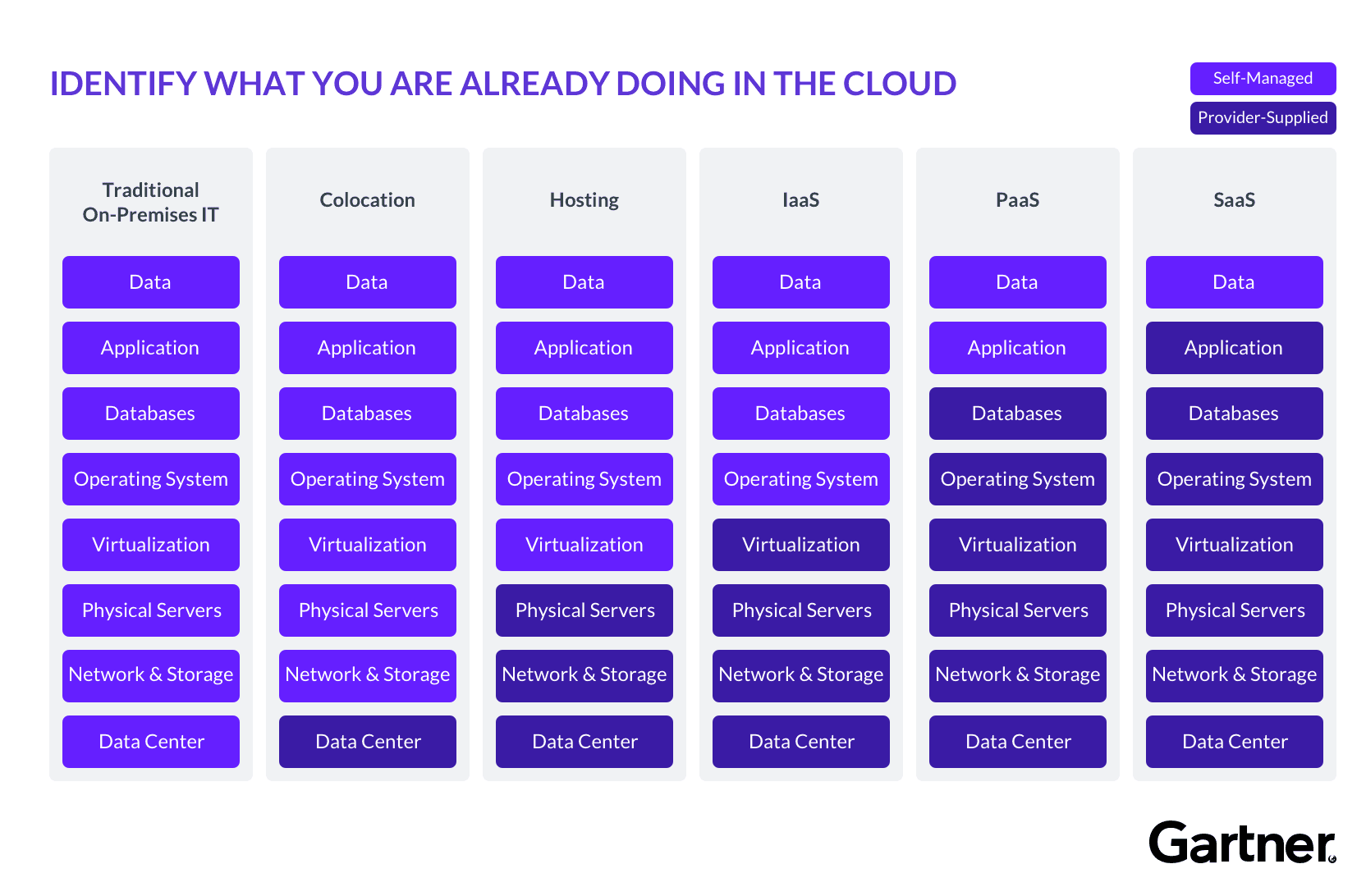

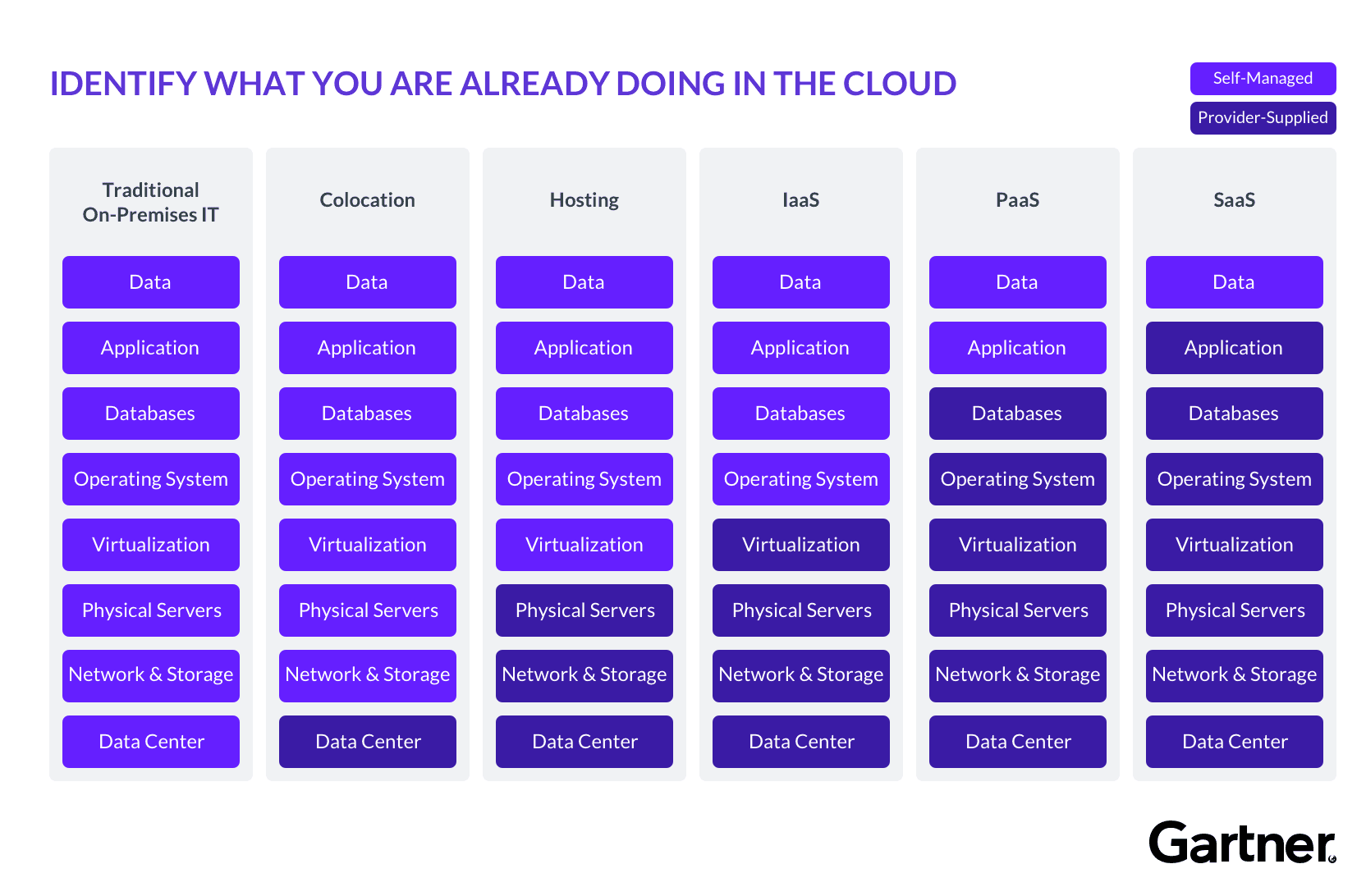

Everything-as-a-Service

The SaaS business model is its own industry, offering every slice of infrastructure as a managed service. Referred to as Everything-as-a-Service (XaaS), these service providers allow companies to outsource core technologies, maintenance, and monitoring at a fraction of the cost required to build in-house.

Internet of Things

IoT represents an archetype of compounding technology. The convergence of sensors, edge computing, 5G, and machine learning has produced a low-fidelity image of a future that looks unimaginably different. Already, the “Smart Cities” promised by Silicon Valley are starting to take initial shape in places like New York and San Francisco, in the prototypical form of transportation and environmental goals.

These examples may seem like buzzwordy puffery, far removed from the day-to-day challenges most organizations face, but they represent a consistent trend that few dispute: technology continues to grow exponentially in size and scale, producing immense complexity. Infrastructure is managed by service providers, data is distributed across the globe, applications are subscribed to and communicate via APIs, new devices connect daily to internal services, and working from home has become normalized. Adapting to modernity means decentralizing hardware, software, and people by abstracting the IT stack. Yet, as if actively resisting these trends, organizations protect resources with policies and procedures that evolved from centralized architecture. To protect critical infrastructure against the security threats presented by this rift, organizations can modernize access governance. These four best practices provide a framework to meet this challenge:

- Base decisions on identity

- Make it easy to use

- Don’t trust private networks

- Centralize auditing and monitoring

Where’s the Problem?

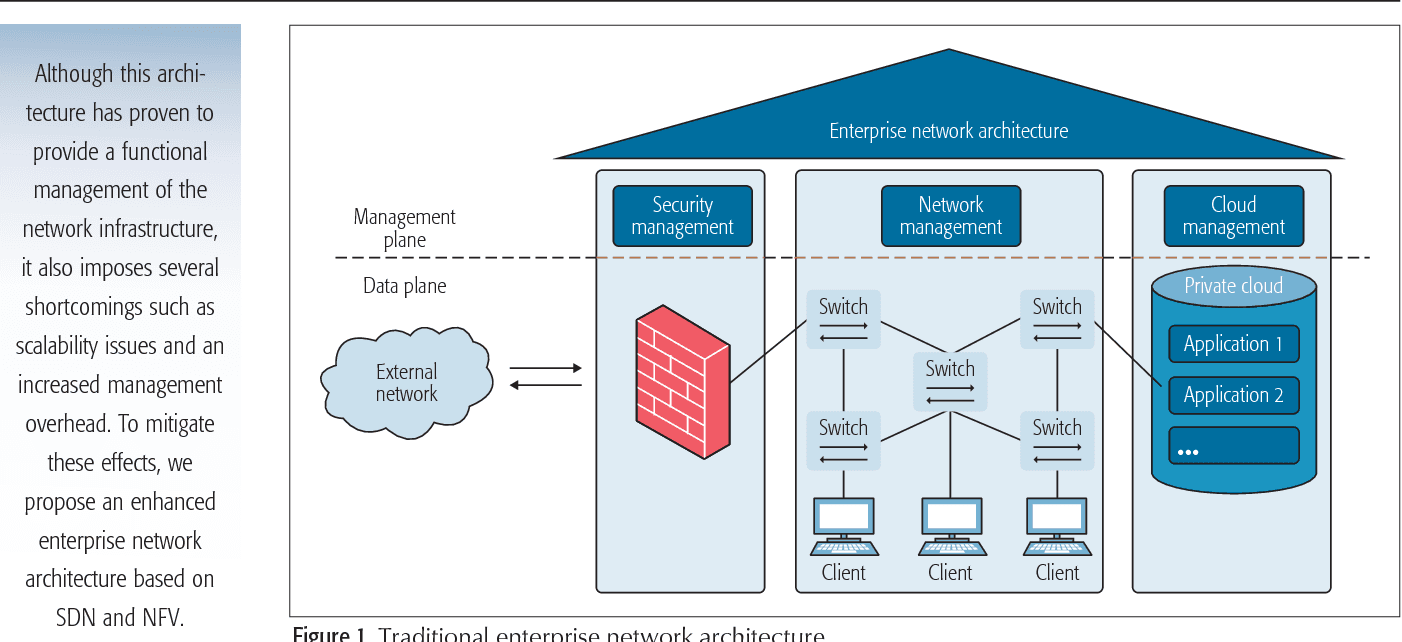

Historically, organizations have relied on protected networks to distinguish between trusted and untrusted agents. This mental model was intuitive because resources were located within a handful of dedicated environments. Security tools would often define access policies based on address origin: anything within a known registry was safe, and anything outside it was not until determined otherwise. As the web advanced, this fledgling topology outgrew itself and organizations adapted to fragmented infrastructure. Internal networks grew in complexity: VPNs connected remote subnetworks, security groups batched access behind firewalls, and bastion hosts jumped users from public to private subnets.
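As a rough illustration of the address-origin model described above, the following minimal sketch treats a request as trusted only if its source address falls inside a known registry of networks. The ranges and the `is_trusted` helper are hypothetical, chosen for illustration, and do not come from the paper.

```python
import ipaddress

# Hypothetical registry of trusted networks (illustrative ranges only).
TRUSTED_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.10.0/24"),
]


def is_trusted(source_ip: str) -> bool:
    """Return True if the source address falls inside any trusted network."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in TRUSTED_NETWORKS)


if __name__ == "__main__":
    print(is_trusted("10.4.2.17"))     # inside 10.0.0.0/8
    print(is_trusted("203.0.113.25"))  # outside every listed range
```

Note that the decision depends only on where the request comes from. Nothing in the check says who or what is making it.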


Enterprise Network Architecture

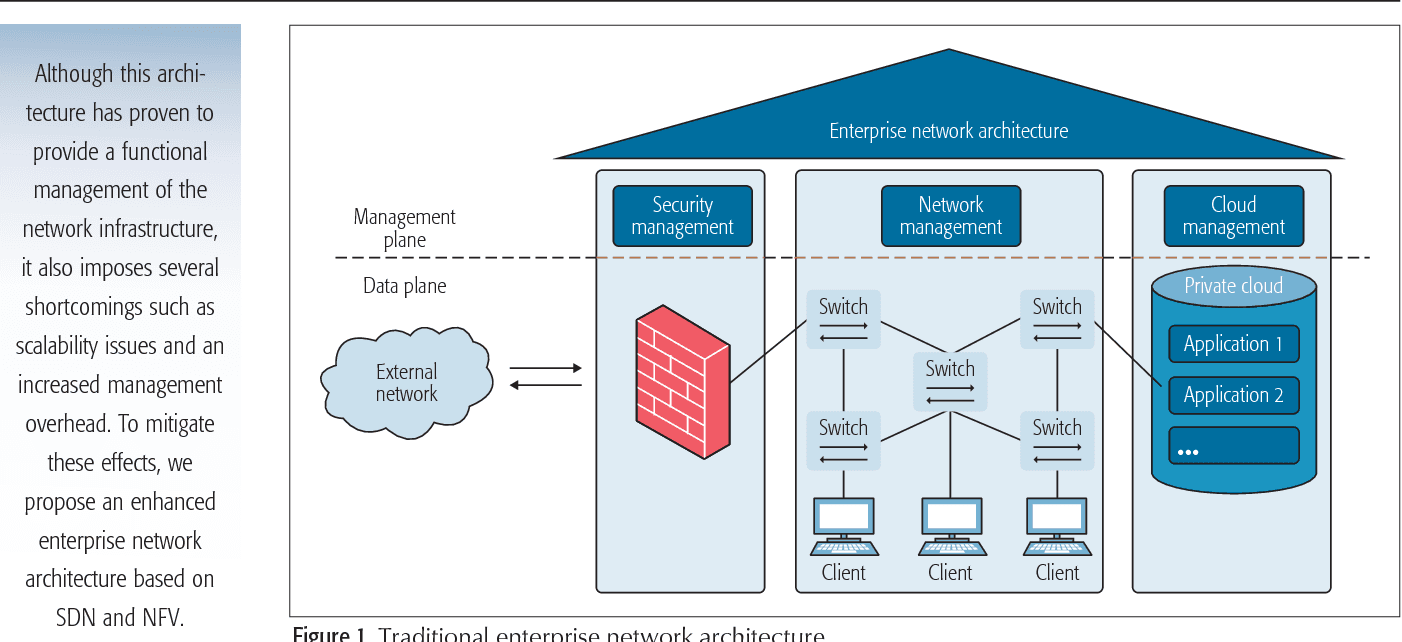

Underpinning these tactics is the same premise as when packet-filtering firewalls were introduced over three decades ago: environments can reliably evaluate whether access is allowed or not. Networks could be separated, gateways introduced, and user attributes defined. But once vetted, actors became trusted and were left alone. Pair this philosophy with the growing scale and complexity of company infrastructure, and pressure points present themselves.

- Dynamic Infrastructure: The abstraction of higher-level services from lower-level hardware has made environments fluid and ephemeral. From VMs to containers, tech stacks have become increasingly compartmentalized, allowing developers to make piecemeal changes without disrupting operations. Tack on automated scaling and redundancy for high availability, and infrastructure resembles an ever-changing landscape that network engineers must map. In a static world, IP addresses and ingress ports were reliable substitutes for identifying behavior. But in a dynamic world, these variables constantly change. Keeping pace requires firewalls, routing, ACLs, and API whitelists to be constantly updated, always one step behind. Even with sufficient automation, network engineers are burdened with tedious manual labor, driving up operational costs and slowing down development.

- Few Internal Protections: The complexity of mapping traffic across elastic infrastructure produces relaxed internal measures. In an ideal world, devices and services would be isolated into their smallest possible network segments, communicating through secure gateways with uniquely identifiable information. But when schedulers can spin up a container on any node within a single cluster, it becomes easier to bundle and route blocks instead of identifying individual components. Add on the reality that engineers build back doors when well-intentioned security protocols impede their work, and the task of protecting resources compels a trade-off between performance and protection.

- Overreliance on Secrets: Secrets like API keys, .pem files, and SSH keys unlock critical infrastructure and must be guarded with paranoid rigor. This task has turned into a near-existential threat as the number of secrets has ballooned, driven by:

  - Cloud services like storage, databases, compute, and logging

  - DevOps teams deploying to bespoke development, testing, staging, and production environments

  - Machine communications occurring through the exchange of tokens, keys, and certificates

  - Internet-connected devices requiring their own secure endpoint

Centralized secret management tools like HashiCorp Vault and AWS Secrets Manager only solve half the problem. As with VPNs, once a user is given access, their activity becomes indistinguishable from all other events, providing minimal visibility for proactive or reactive measures. Once again, IP addresses can be used as a stand-in, but they no longer inspire the same level of confidence as in the past.
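The visibility gap can be sketched in a few lines. In this hypothetical example, two people retrieve the same shared key from a secret manager, so the resource's audit trail records a single credential for both. When each actor instead presents a credential tied to their own identity, the same events become attributable. The `AuditEvent` type, the `actors` helper, and all names and addresses are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditEvent:
    credential: str  # the credential the resource saw presented
    action: str


def actors(events):
    """Return the distinct credentials visible in an audit trail."""
    return sorted({e.credential for e in events})


# Shared secret: both users fetched the same key, so the resource
# records the same credential for both actions.
shared = [
    AuditEvent("deploy-key", "read customers table"),
    AuditEvent("deploy-key", "drop customers table"),
]

# Per-identity credentials (hypothetical users): each event is attributable.
per_identity = [
    AuditEvent("alice@example.com", "read customers table"),
    AuditEvent("bob@example.com", "drop customers table"),
]

if __name__ == "__main__":
    print(actors(shared))        # ['deploy-key']
    print(actors(per_identity))  # ['alice@example.com', 'bob@example.com']
```

With the shared key, the log cannot show who dropped the table. With identity-bound credentials, it can.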

Networking is a necessary component of letting the right things in and keeping the wrong things out; ports will never stop being scanned. But the expansion and adoption of technology are outstripping the ability of networks to bear the burden of defense. Modernizing infrastructure access means identifying where and how networks struggle to cope, and comparing alternative means of protection. It means questioning whether effective security hurdles are being created, or merely aggravating hoops that developers must jump through.
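One place where networks struggle, the dynamic infrastructure pressure point above, can be made concrete with a small sketch. A rule keyed on an IP address stops matching as soon as a scheduler moves the workload, while a rule keyed on the workload's identity keeps matching. All names and addresses here are hypothetical, and the sketch assumes the `identity` field has already been verified by some other mechanism (for example, a certificate), which the code does not implement.

```python
import ipaddress

# Hypothetical rule written when the "billing" service ran at 10.0.1.15.
IP_ALLOWLIST = {ipaddress.ip_address("10.0.1.15")}

# Hypothetical identity-based rule: names the workload, not its address.
IDENTITY_ALLOWLIST = {"billing"}


def allowed_by_ip(workload: dict) -> bool:
    """Address-based check: depends on where the workload currently runs."""
    return ipaddress.ip_address(workload["ip"]) in IP_ALLOWLIST


def allowed_by_identity(workload: dict) -> bool:
    """Identity-based check: assumes the identity was verified upstream."""
    return workload["identity"] in IDENTITY_ALLOWLIST


before = {"identity": "billing", "ip": "10.0.1.15"}
# A scheduler restarts the same workload on another node with a new address.
after = {"identity": "billing", "ip": "10.0.7.42"}

if __name__ == "__main__":
    print(allowed_by_ip(before), allowed_by_ip(after))
    print(allowed_by_identity(before), allowed_by_identity(after))
```

The address-based rule needs an update every time the workload moves. The identity-based rule does not, which is the motivation behind basing decisions on identity.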

To learn more about how the four practices above can help guide this restoration of effective security controls, read the entire tech paper here.
