How to Record and Audit Amazon RDS Database Activity With Teleport

This post is the final part of a series about secure access to Amazon RDS. In Part 1, we covered how to use OSS Teleport as an identity-aware access proxy to reach Amazon RDS instances running in private subnets. Part 2 explained how to implement single sign-on (SSO) for Amazon RDS access using Okta and Teleport. Part 3 showed how to configure Teleport access requests to enable just-in-time access to Amazon RDS. In Part 4, we will show you how to use Teleport to record and audit database access events.

Part 4: Auditing Amazon RDS Access

Auditing and reviewing database activity is critical for achieving compliance. While managed services such as Amazon CloudWatch and AWS CloudTrail provide auditing capabilities, they are mostly confined to Amazon RDS control-plane events. Once a user or application connects to the database and runs queries, customers lose visibility into the session.

Teleport's live session view combined with the comprehensive audit log delivers deep insights into every aspect of the database access. Since the audit log acts as a single source of truth for all the security events generated by Teleport, it can be used to audit and review Data Definition Language (DDL) and Data Manipulation Language (DML) queries impacting the database.

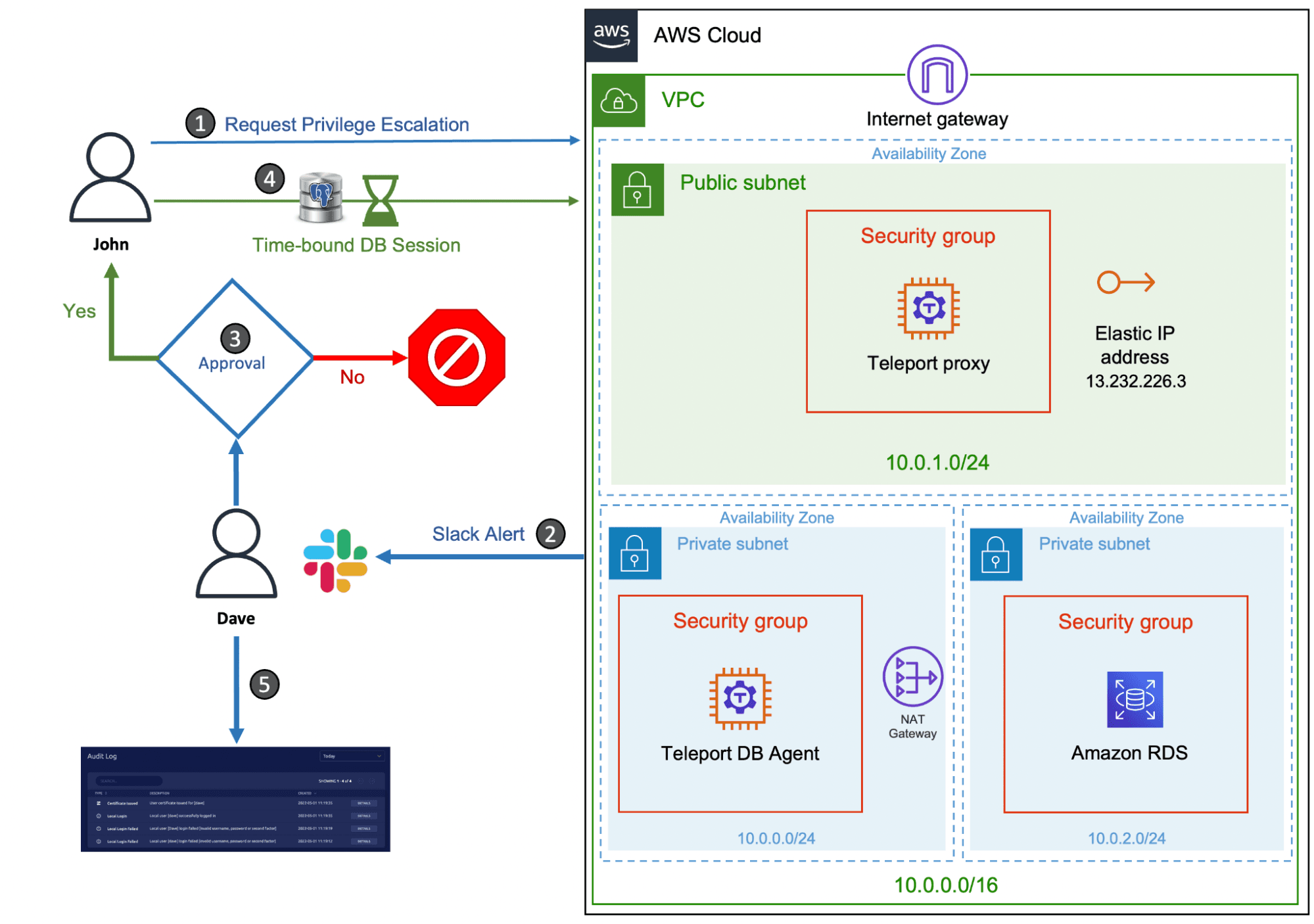

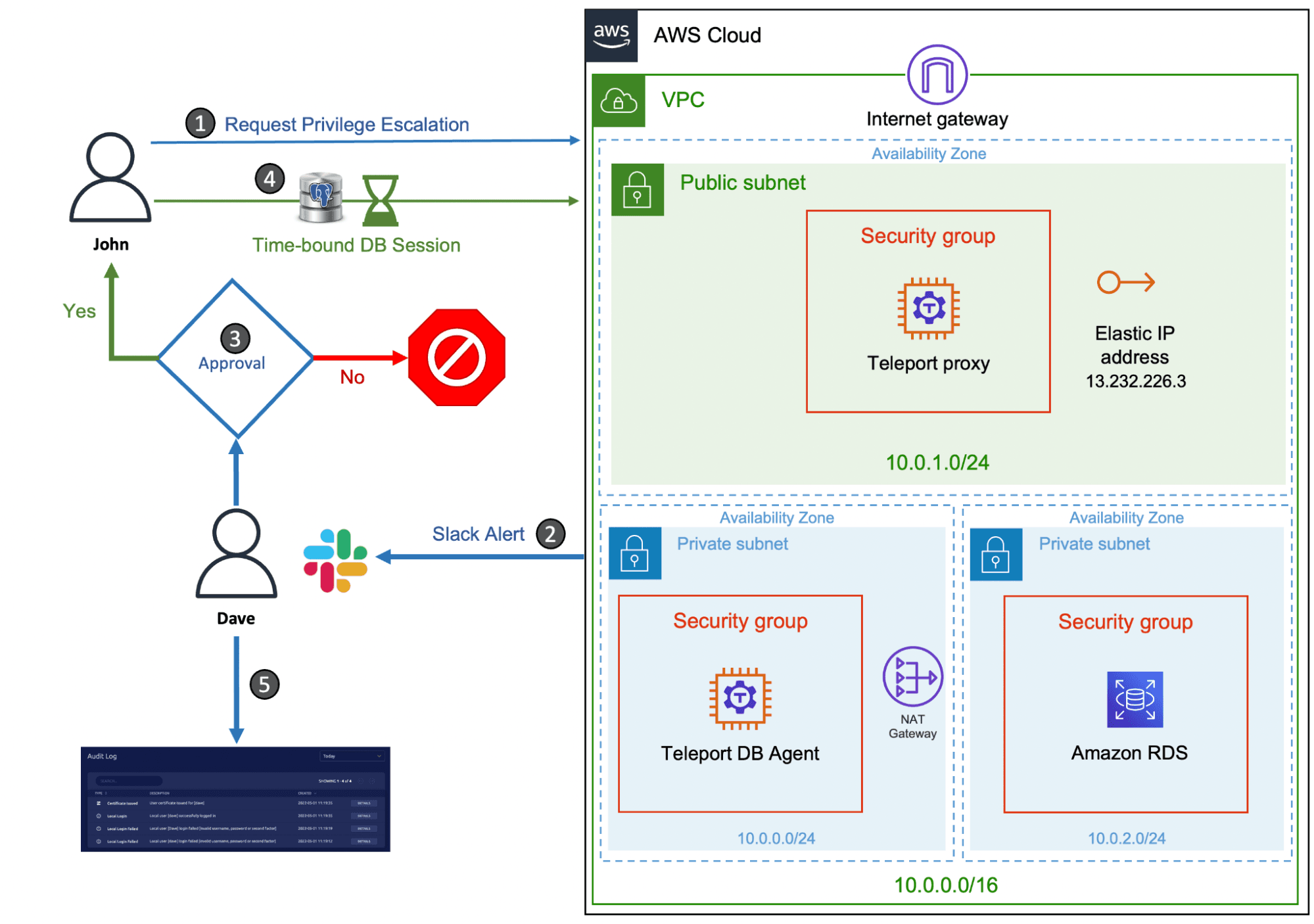

In the previous tutorial, we demonstrated how to perform privilege escalation with Teleport RBAC and Slack to provide time-bound access to sensitive databases. In that scenario, a contractor requested a time-bound DB session to access a production database, and a team leader approved the request.

We will extend the scenario to cover the audit and review of the DB sessions initiated by the contractor. We will also walk through the steps to use Amazon DynamoDB storage for persisting Teleport events within the AWS infrastructure.

Note: This scenario builds upon the previous articles in this series, including running an AWS bastion host and setting up just-in-time access. To follow this tutorial, you will need provisioned AWS infrastructure (Amazon EC2 instances, a VPC, security groups, an internet gateway, a NAT gateway and routing tables) and Teleport Enterprise installed. You should have a Teleport proxy configured in the public subnet with a Teleport database agent host running in the private subnet. The configuration should also have a user with the Teleport roles access, auditor and editor, and a user with a role that includes permissions to request controlled privilege escalation.

| Teleport User | Persona | Teleport Roles | PostgreSQL User |
|---|---|---|---|
| Dave | Team Leader | access,auditor,editor,team-lead | Any |
| John | Contractor | access,contractor | user1 |
| John | DBA* | access,dba | user2 |

Tip: The auditor role is a preset role that allows reading cluster events, audit logs, and playing back session recordings. The team leader persona below assumes the role of an auditor since he needs to access the session recordings and audit logs.

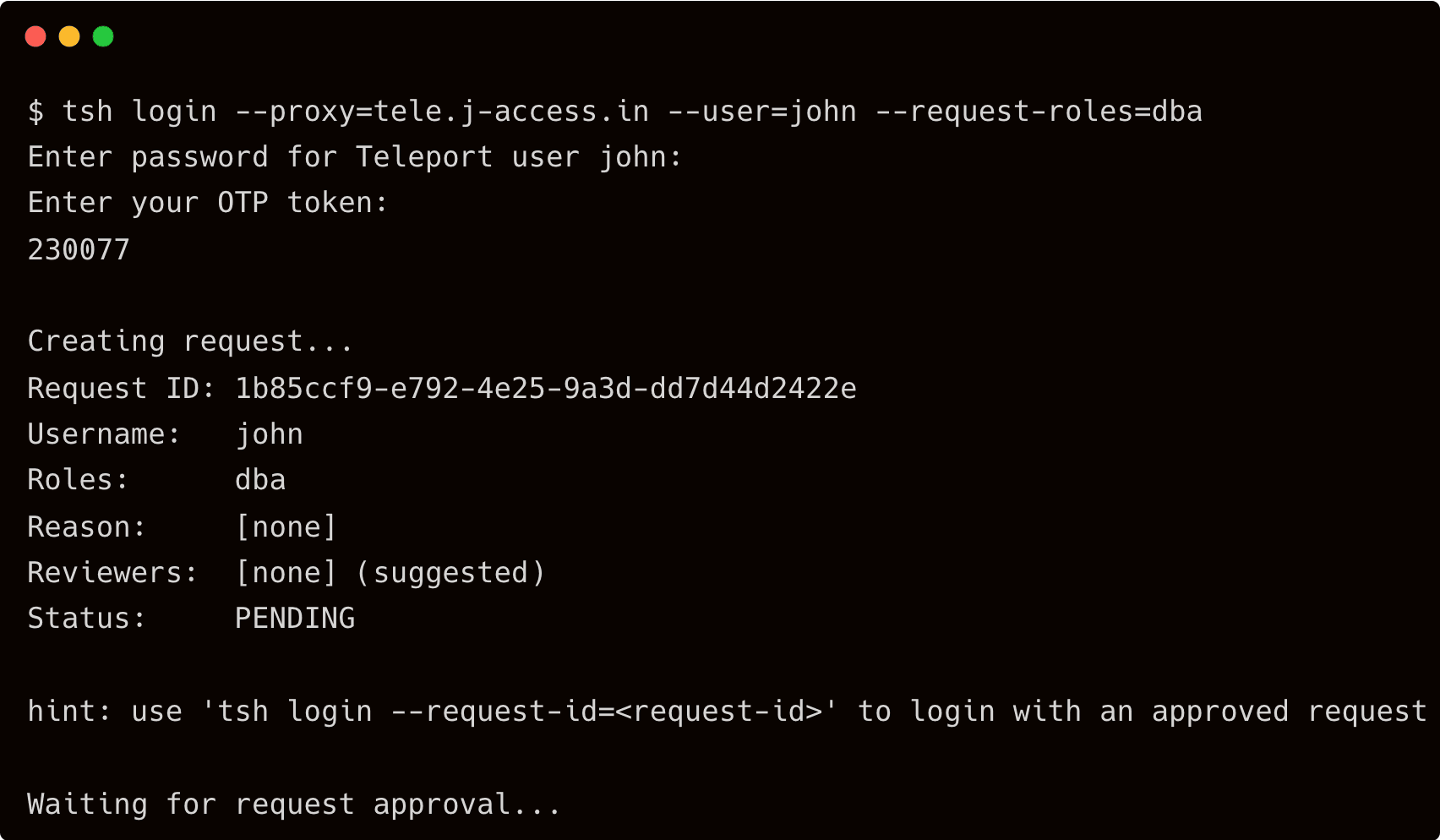

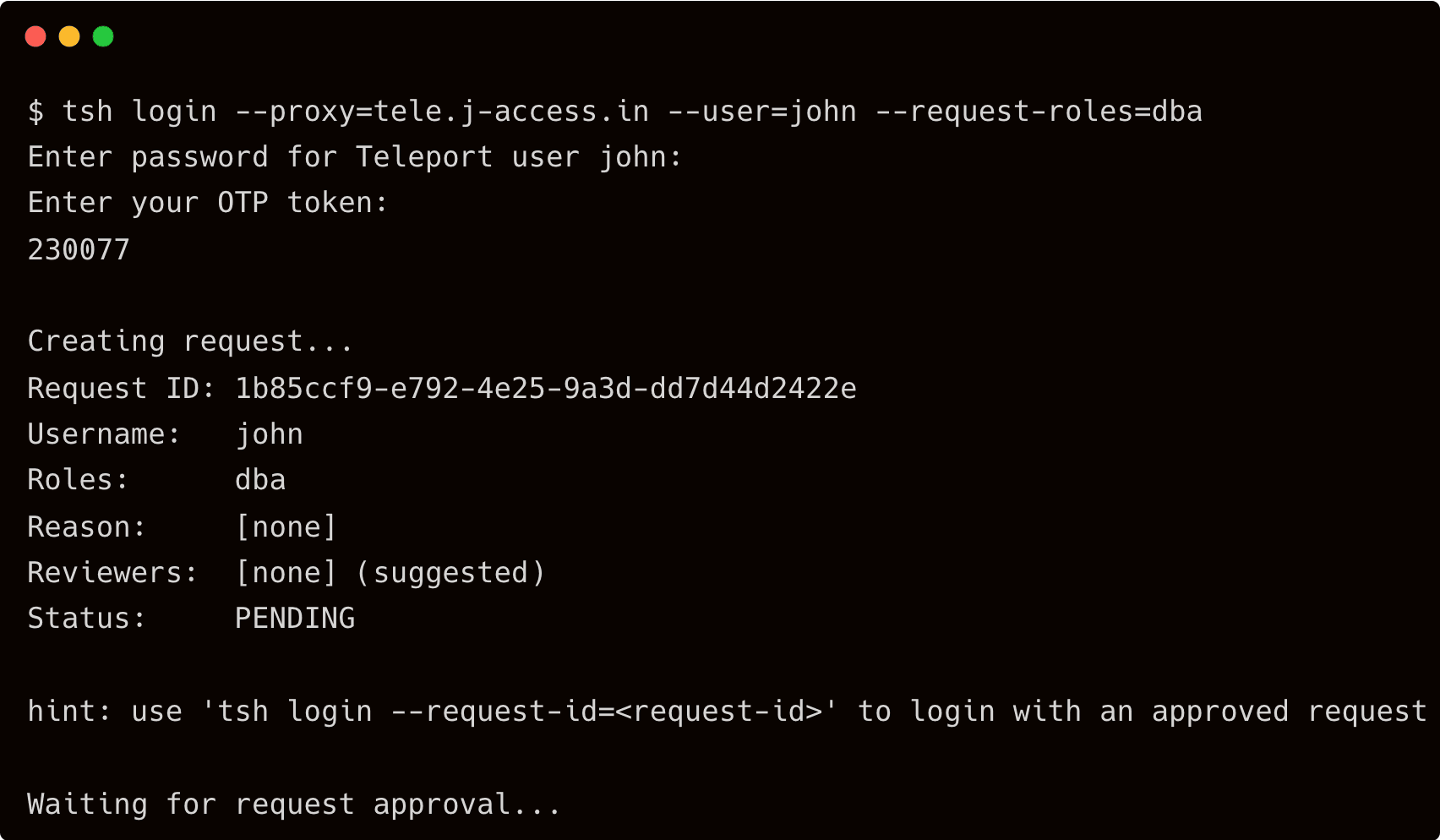

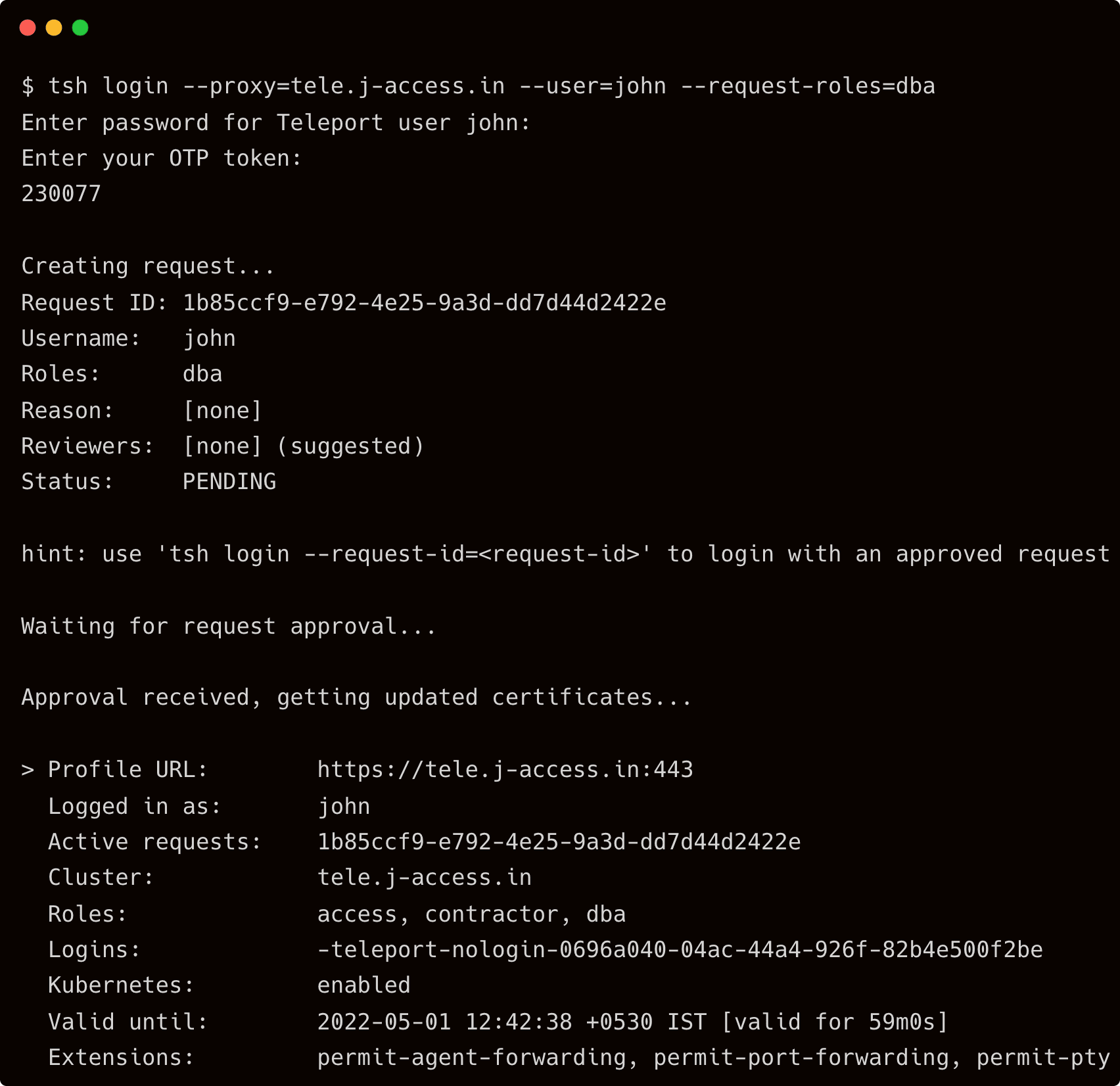

Step 1 - Requesting privilege escalation for database access

Start by requesting access to the suppliers database for John with elevated privileges and letting Dave approve it.

$ tsh login --proxy=tele.j-access.in --user=john --request-roles=dba

Once Dave approves the request, John assumes the role of a DBA for 60 minutes.

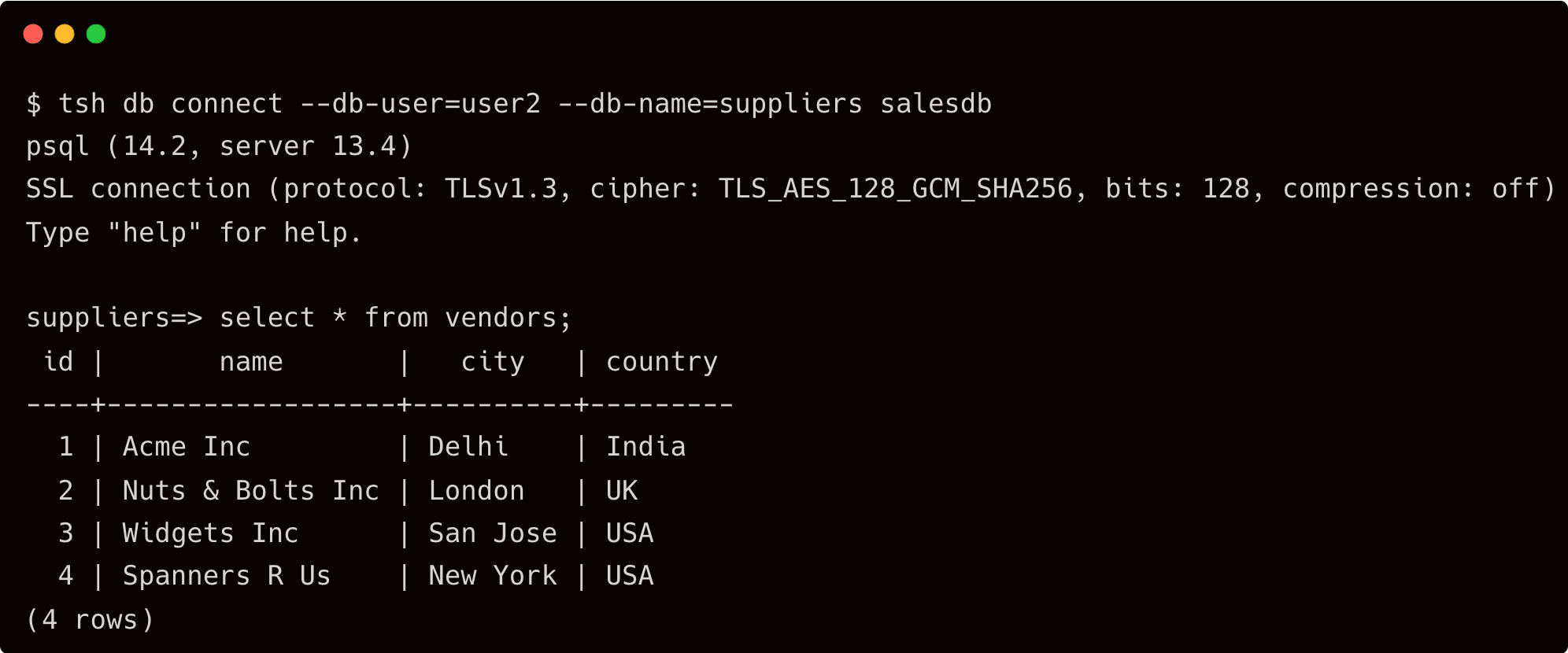

Step 2 - Performing database activities

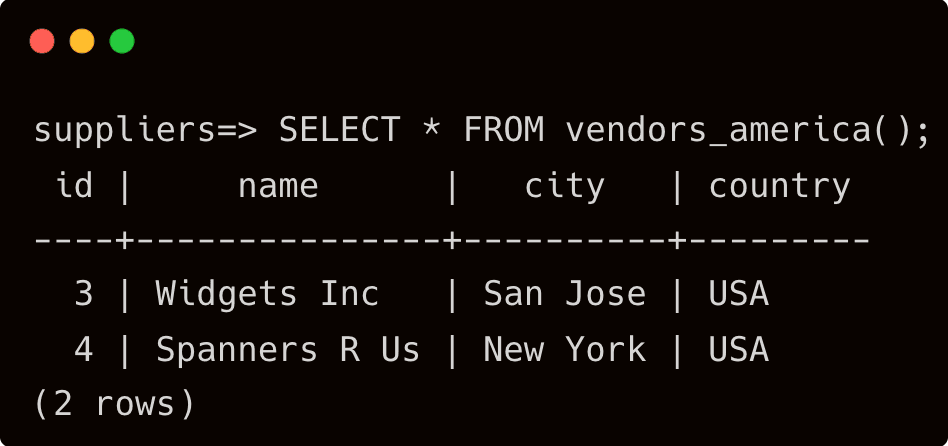

After John logs into the PostgreSQL suppliers database, he performs a couple of queries.

Instead of deleting a temporary table that was part of the maintenance task, John accidentally drops the vendors_america function.

Without realizing that he has deleted a critical user-defined function often accessed by front-end developers, John logs out of PostgreSQL, and eventually, his privileges expire.

Step 3 - Reviewing and auditing DB activity

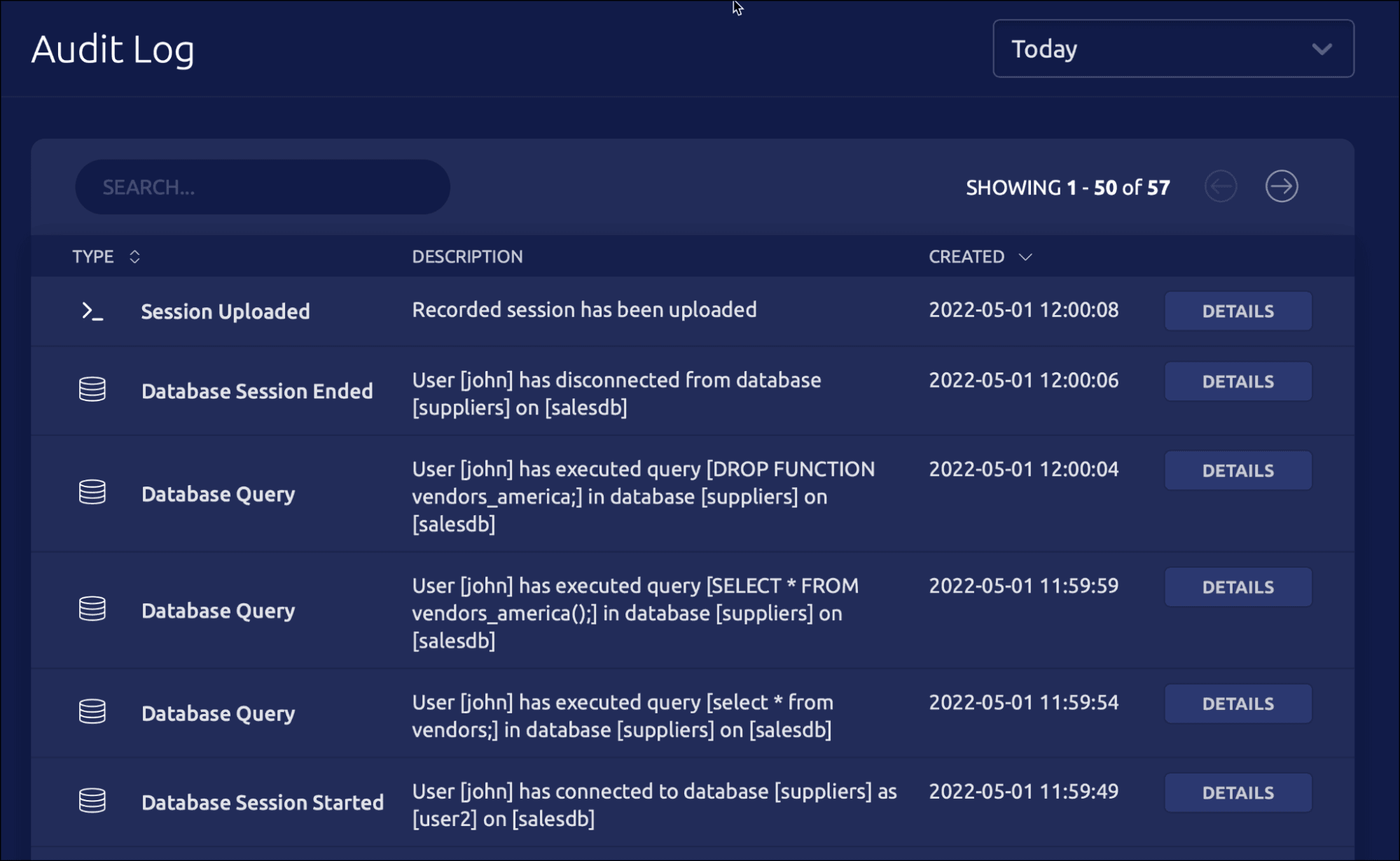

After receiving various complaints from the developers, Dave wants to review the DB access sessions to investigate the issue. He accesses the logs from the Teleport web interface.

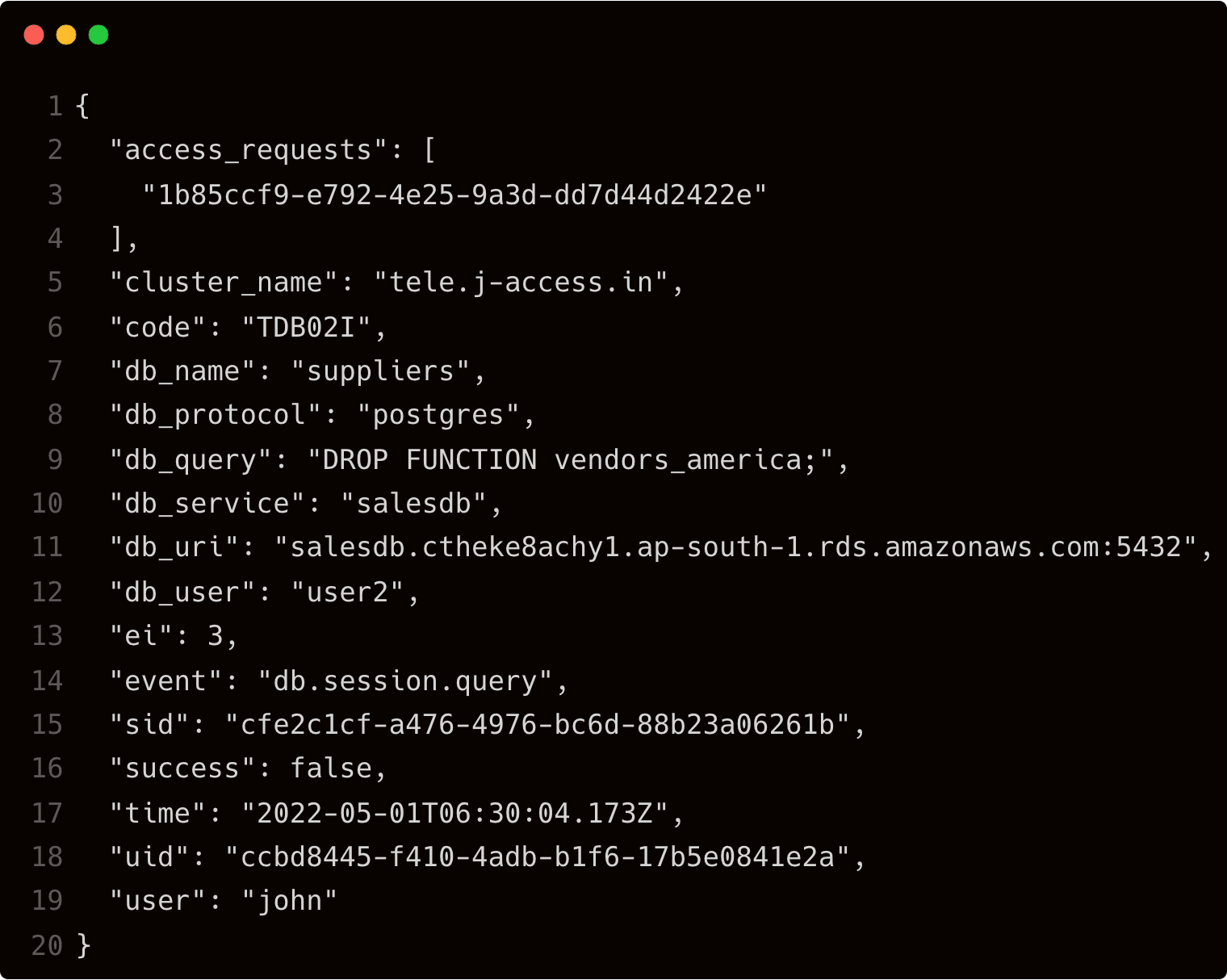

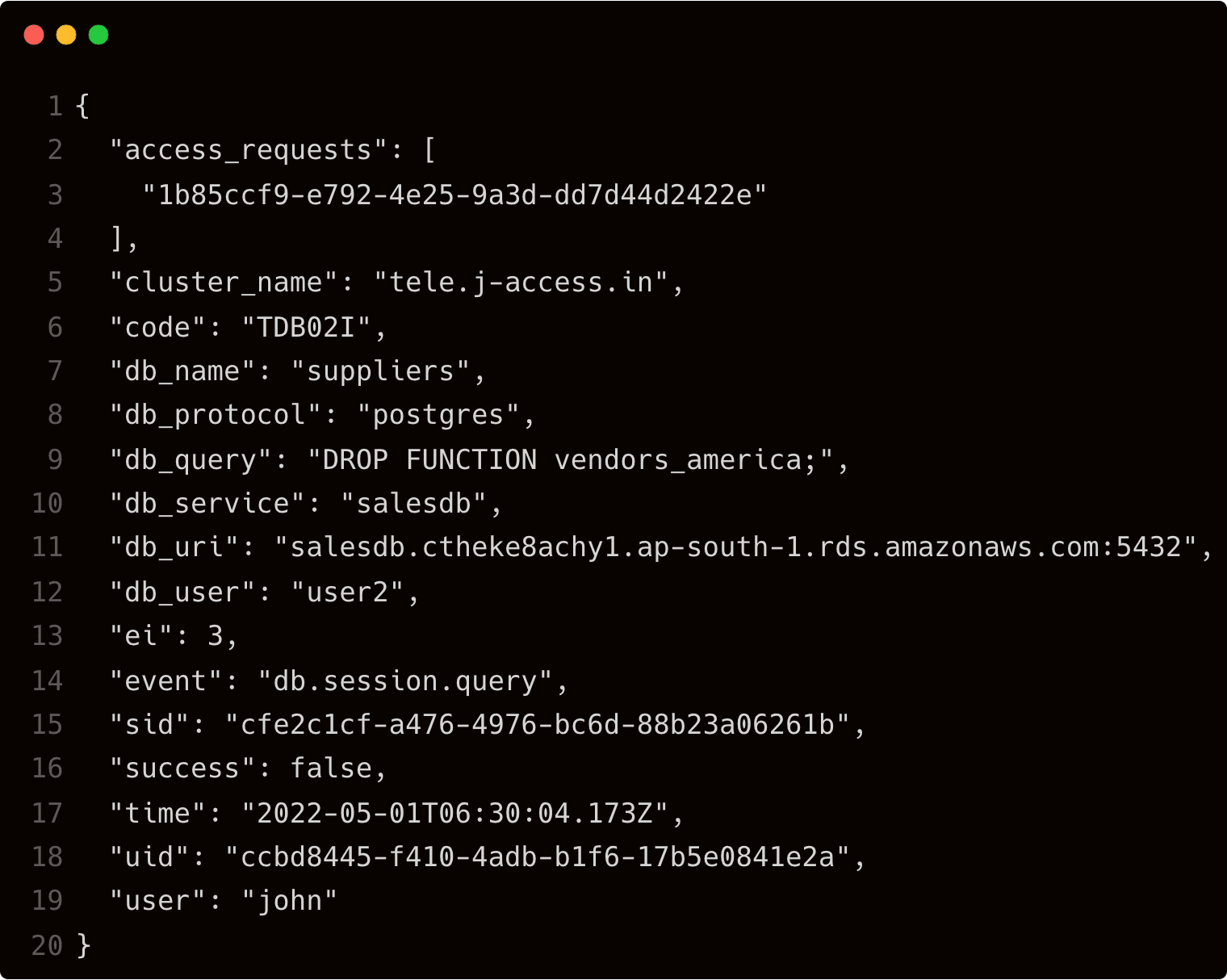

The third item in the list clearly indicates that John executed the drop function command during his last session. Dave can click the details button to see additional information, including the session ID and timestamp.

The db_query key confirms that John has indeed deleted the function.
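The same review can be scripted when the event list is long. The following is a minimal sketch, assuming the audit events have been exported as one JSON object per line. The sample events, the timestamps, and every field name other than db_query (event, user, db_user, db_name, sid, time) are illustrative assumptions and should be checked against the events in your own cluster.

```python
import json

# Hypothetical sample of audit events, one JSON object per line.
# Only the db_query key is taken from the article; the other field
# names and all values are assumptions made for illustration.
SAMPLE_LOG = """\
{"event": "db.session.start", "user": "john", "db_user": "user2", "db_name": "suppliers", "sid": "s-1", "time": "2022-01-10T10:00:00Z"}
{"event": "db.session.query", "user": "john", "db_user": "user2", "db_name": "suppliers", "db_query": "SELECT * FROM vendors;", "sid": "s-1", "time": "2022-01-10T10:01:00Z"}
{"event": "db.session.query", "user": "john", "db_user": "user2", "db_name": "suppliers", "db_query": "drop function vendors_america;", "sid": "s-1", "time": "2022-01-10T10:02:00Z"}
"""

# Statements that change the schema rather than the data.
DDL_KEYWORDS = ("CREATE", "ALTER", "DROP", "TRUNCATE")


def find_ddl_queries(log_text, user):
    """Return (time, session id, query) for each DDL query run by `user`."""
    hits = []
    for line in log_text.splitlines():
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        query = event.get("db_query", "")
        # Skip events from other users and events that carry no query.
        if event.get("user") != user or not query:
            continue
        if query.lstrip().upper().startswith(DDL_KEYWORDS):
            hits.append((event.get("time"), event.get("sid"), query))
    return hits


for when, sid, query in find_ddl_queries(SAMPLE_LOG, "john"):
    print(when, sid, query)
```

Matching on the leading keyword is a deliberately simple heuristic. It would miss DDL hidden behind a comment or a multi-statement query, so treat the result as a starting point for the review rather than a complete filter.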

By default, Teleport stores the event logs in the /var/lib/teleport/log directory of the auth server. For high availability and durability, they can be moved to AWS, where Amazon DynamoDB provides centralized and secure storage for the event logs.

Step 4 - Storing the audit logs in Amazon DynamoDB

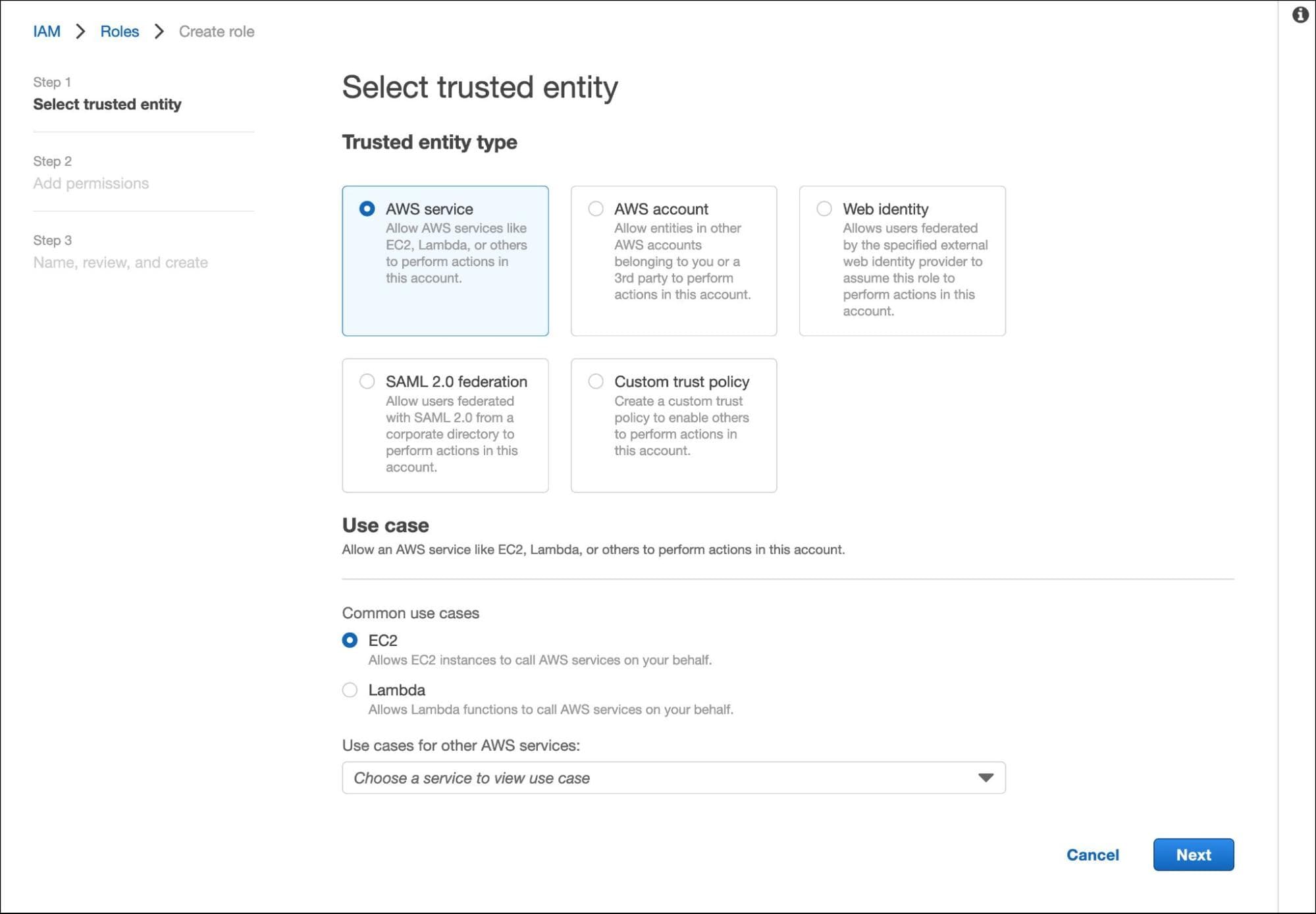

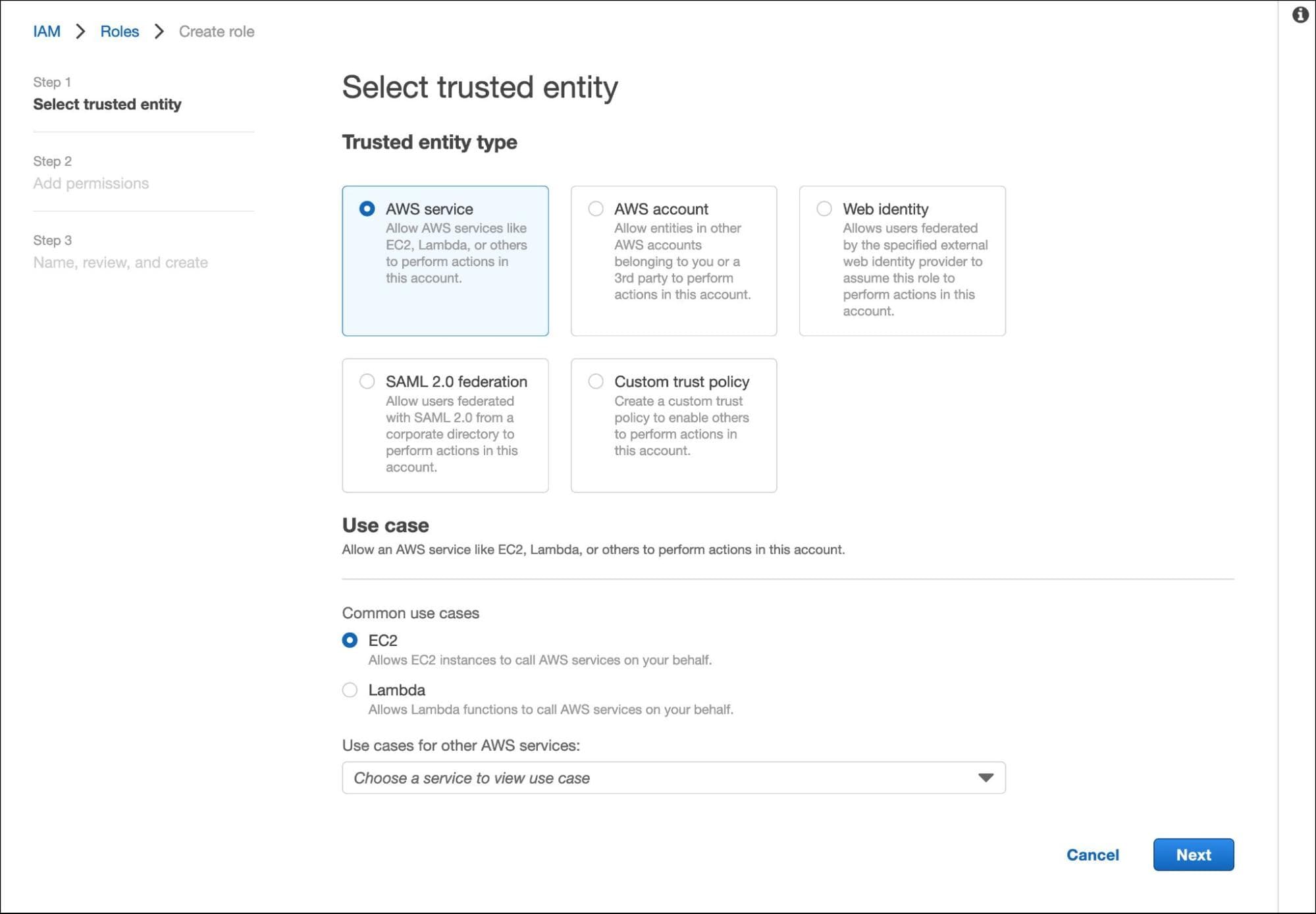

We will start by creating the IAM role assumed by the Amazon EC2 instance running the Teleport auth server.

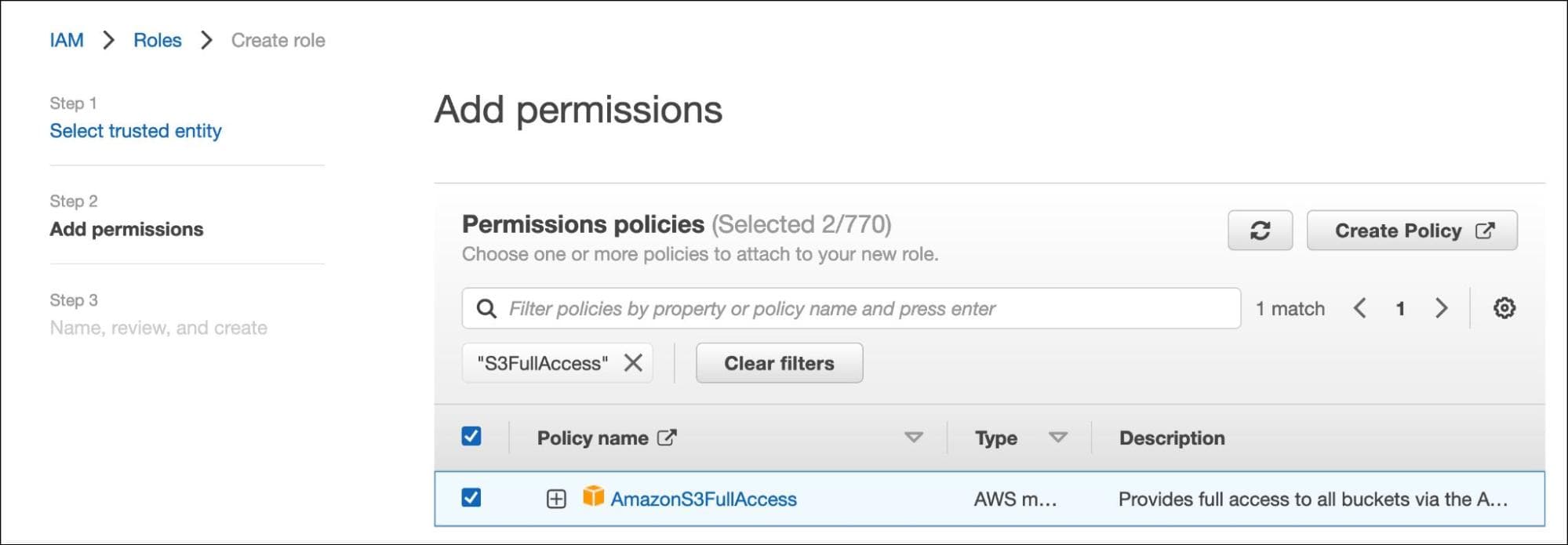

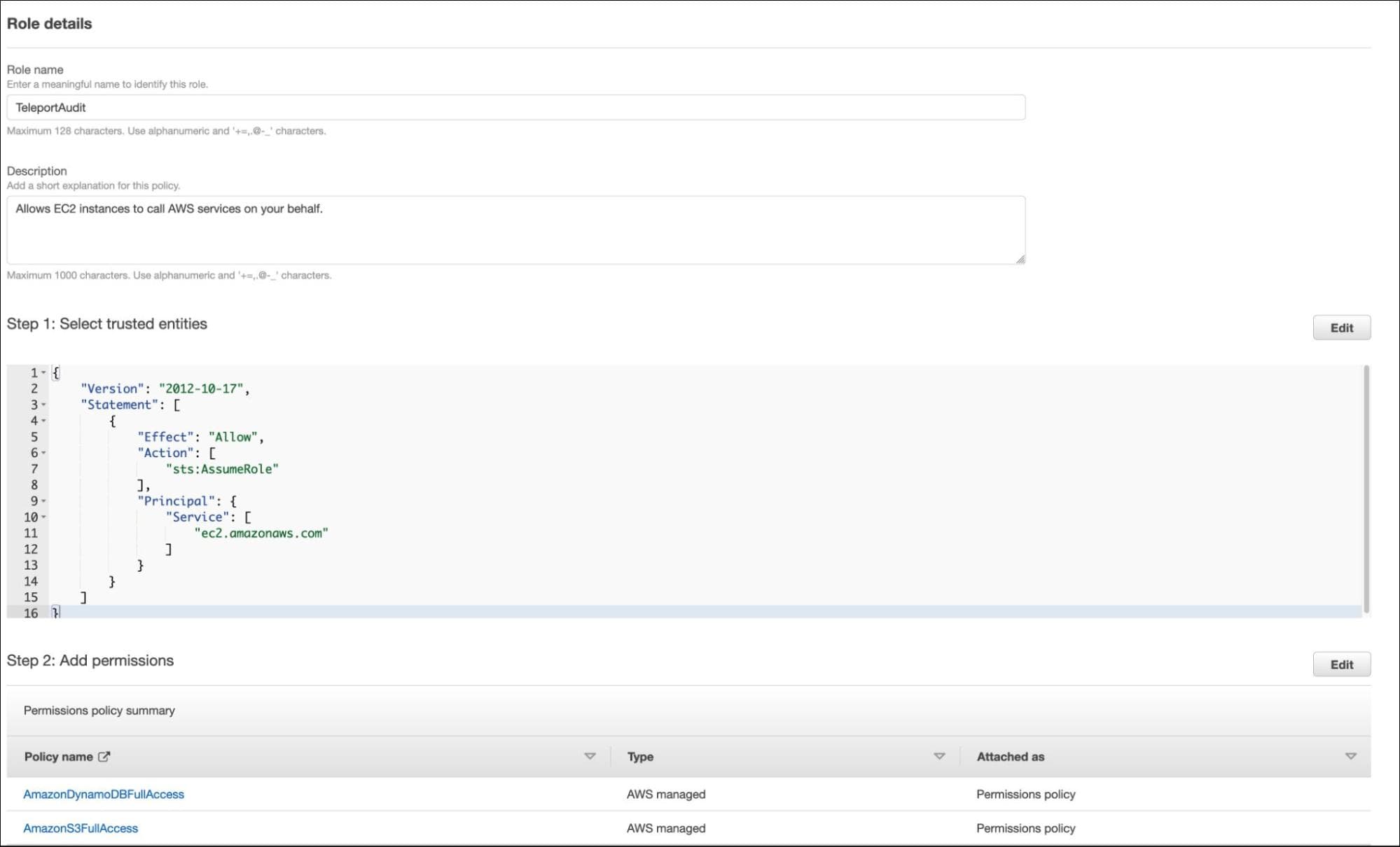

In AWS IAM Console, create a new role for the EC2 instance running the Teleport auth server.

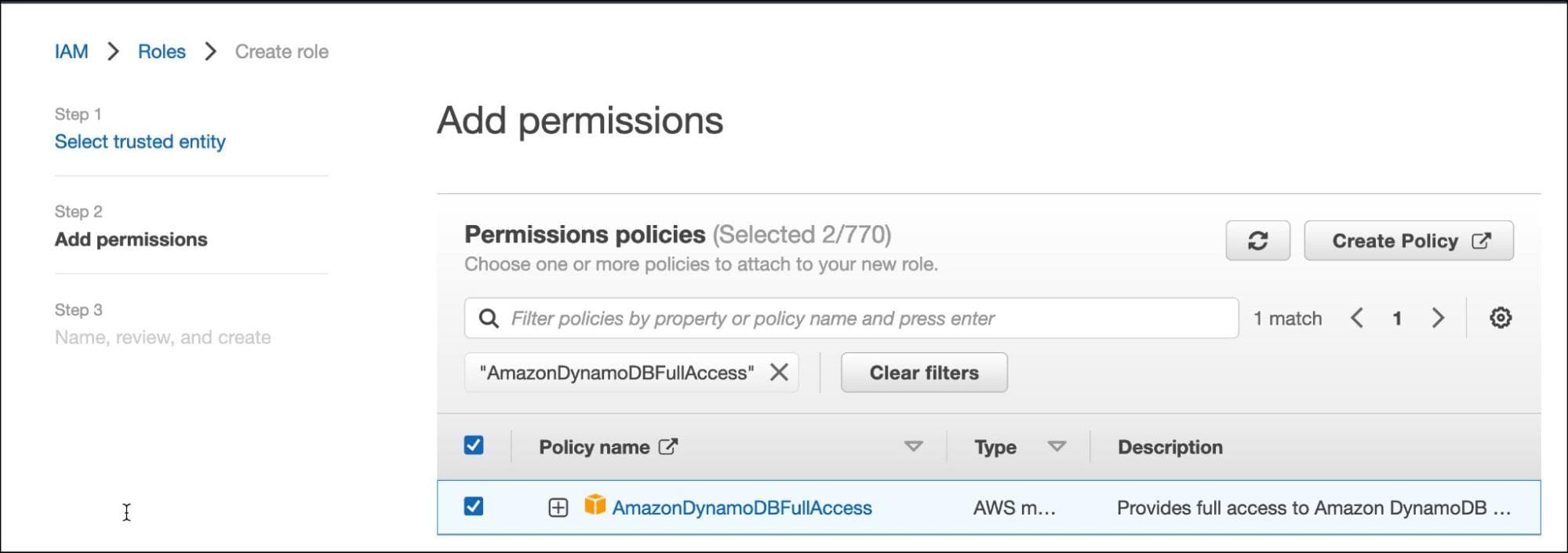

Search for the AmazonS3FullAccess and AmazonDynamoDBFullAccess policies and select both of them.

Name the role "TeleportAudit" and click the create button.
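The two managed policies grant access to every table and bucket in the account, which is convenient for a tutorial but broader than the auth server needs. As a hedged alternative, the sketch below builds a policy document restricted to the single DynamoDB table and S3 bucket that this tutorial names in its Teleport configuration. The account ID is a placeholder, the helper name is hypothetical, and whether this scope is sufficient for your Teleport version is an assumption to verify before relying on it.

```python
import json


def scoped_audit_policy(region, account_id, table, bucket):
    """Build an IAM policy limited to one DynamoDB table and one S3 bucket."""
    table_arn = f"arn:aws:dynamodb:{region}:{account_id}:table/{table}"
    bucket_arn = f"arn:aws:s3:::{bucket}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Same actions as the managed policy, but only on the
                # events table and its indexes.
                "Effect": "Allow",
                "Action": "dynamodb:*",
                "Resource": [table_arn, f"{table_arn}/index/*"],
            },
            {
                # Same actions as the managed policy, but only on the
                # recordings bucket and the objects inside it.
                "Effect": "Allow",
                "Action": "s3:*",
                "Resource": [bucket_arn, f"{bucket_arn}/*"],
            },
        ],
    }


# 123456789012 is a placeholder account ID, not a real one.
policy = scoped_audit_policy(
    "ap-south-1", "123456789012", "teleport-events", "j-access.in-teleport-logs"
)
print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the IAM console as a customer-managed policy and attached to the role in place of the two managed policies.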

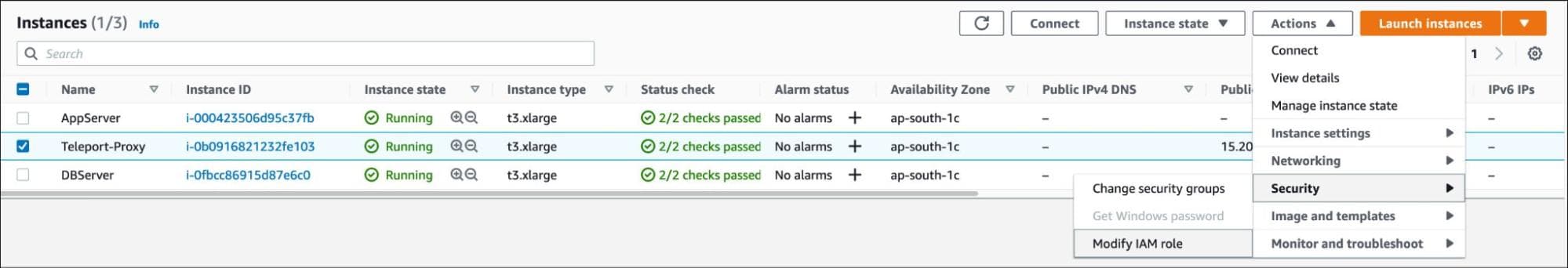

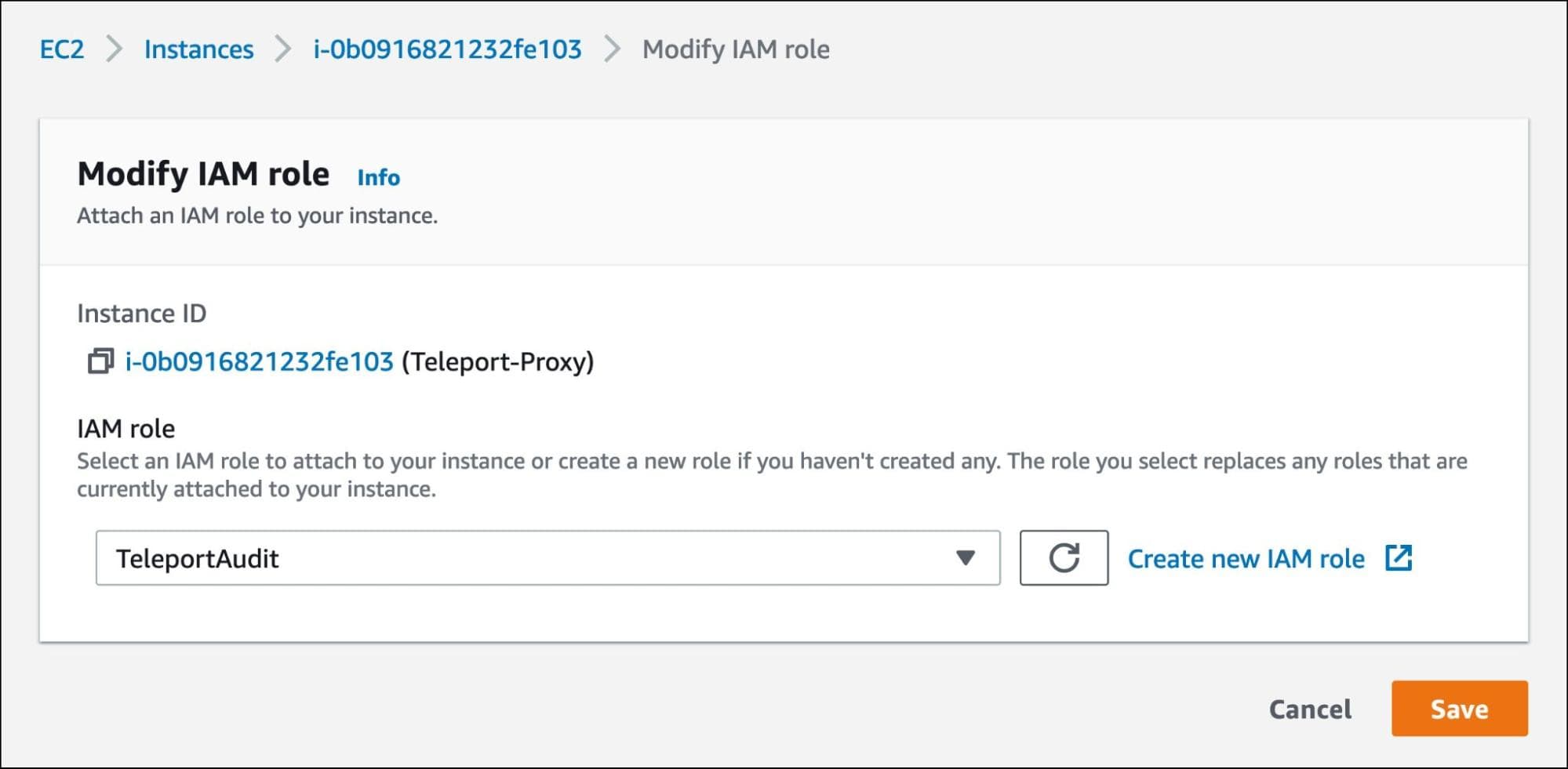

Let’s assign this role to the EC2 instance running the Teleport auth server.

Select the EC2 instance and choose to modify the IAM role under the security options.

Assign the TeleportAudit role and save the configuration.

Now, the Teleport auth server running within the EC2 instance can talk to Amazon DynamoDB and Amazon S3 to store the logs and session recordings.

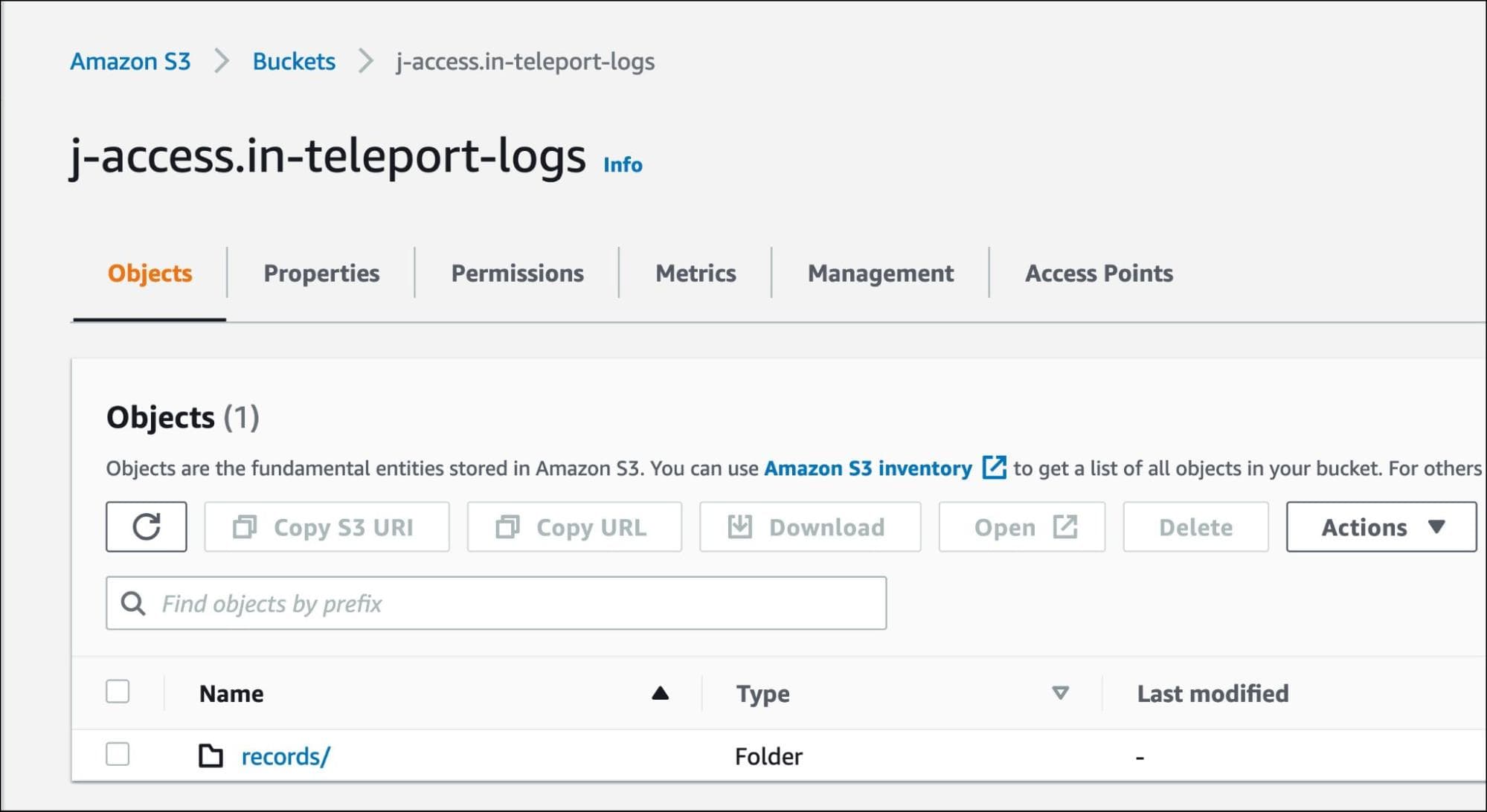

Create an S3 bucket in the same region where the Teleport service is running. For this tutorial, we call it j-access.in-teleport-logs.

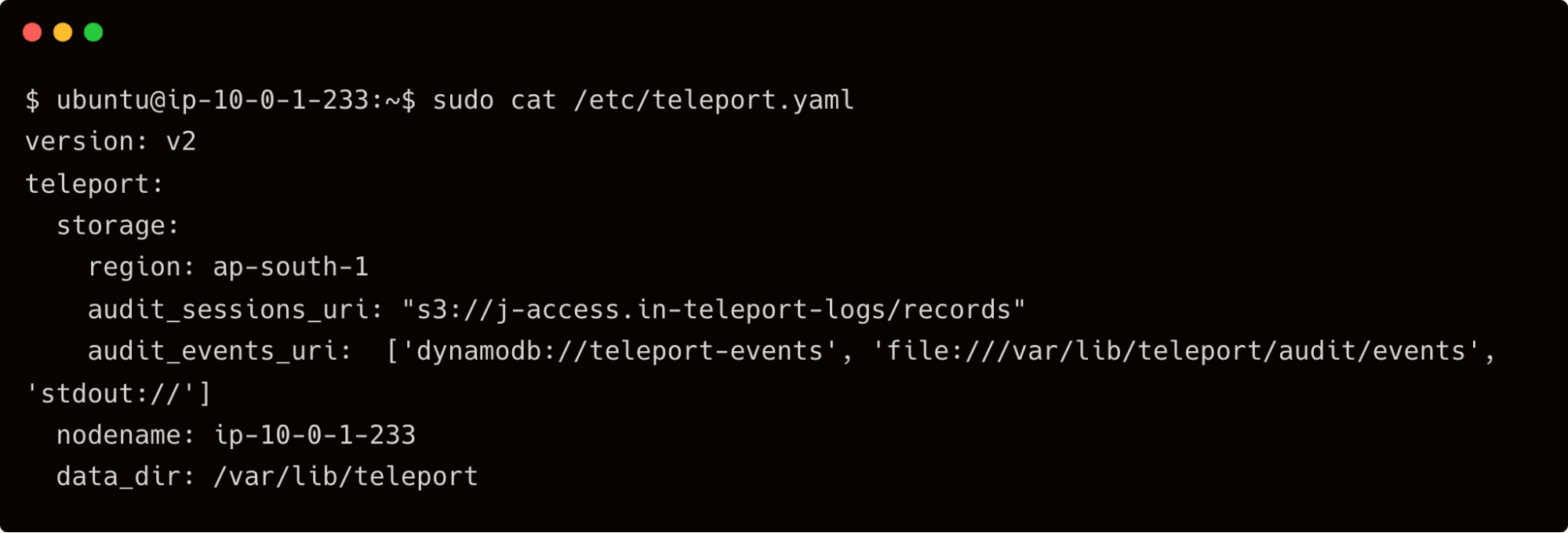

It’s time to point the Teleport auth service to DynamoDB and S3. We will do this by adding the settings below to the /etc/teleport.yaml file, where the storage block sits under the top-level teleport key:

    storage:
      region: ap-south-1
      audit_sessions_uri: "s3://j-access.in-teleport-logs/records"
      audit_events_uri: ['dynamodb://teleport-events', 'file:///var/lib/teleport/audit/events', 'stdout://']

The lines starting with audit_sessions_uri and audit_events_uri are responsible for redirecting the content to AWS.
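Each URI names a destination through its scheme. To make the mapping explicit, here is a small sketch that takes the values from the configuration above and describes where each one points. The describe helper is hypothetical and exists only for illustration; it is not part of Teleport.

```python
from urllib.parse import urlparse

# Values copied from the storage settings in this tutorial.
AUDIT_SESSIONS_URI = "s3://j-access.in-teleport-logs/records"
AUDIT_EVENTS_URI = [
    "dynamodb://teleport-events",
    "file:///var/lib/teleport/audit/events",
    "stdout://",
]


def describe(uri):
    """Return a human-readable description of an audit destination URI."""
    parts = urlparse(uri)
    if parts.scheme == "s3":
        # The host part is the bucket, the path is the key prefix.
        return f"S3 bucket {parts.netloc}, prefix {parts.path.lstrip('/')}"
    if parts.scheme == "dynamodb":
        # The host part is the table name.
        return f"DynamoDB table {parts.netloc}"
    if parts.scheme == "file":
        return f"local directory {parts.path}"
    if parts.scheme == "stdout":
        return "standard output of the Teleport process"
    raise ValueError(f"unrecognized audit URI: {uri}")


print("session recordings ->", describe(AUDIT_SESSIONS_URI))
for uri in AUDIT_EVENTS_URI:
    print("audit events       ->", describe(uri))
```

Because audit_events_uri is a list, the same events go to all three destinations, so the DynamoDB table, the local directory, and the process output each receive a copy.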

Restart the Teleport service on the auth server and wait for the events to flow.

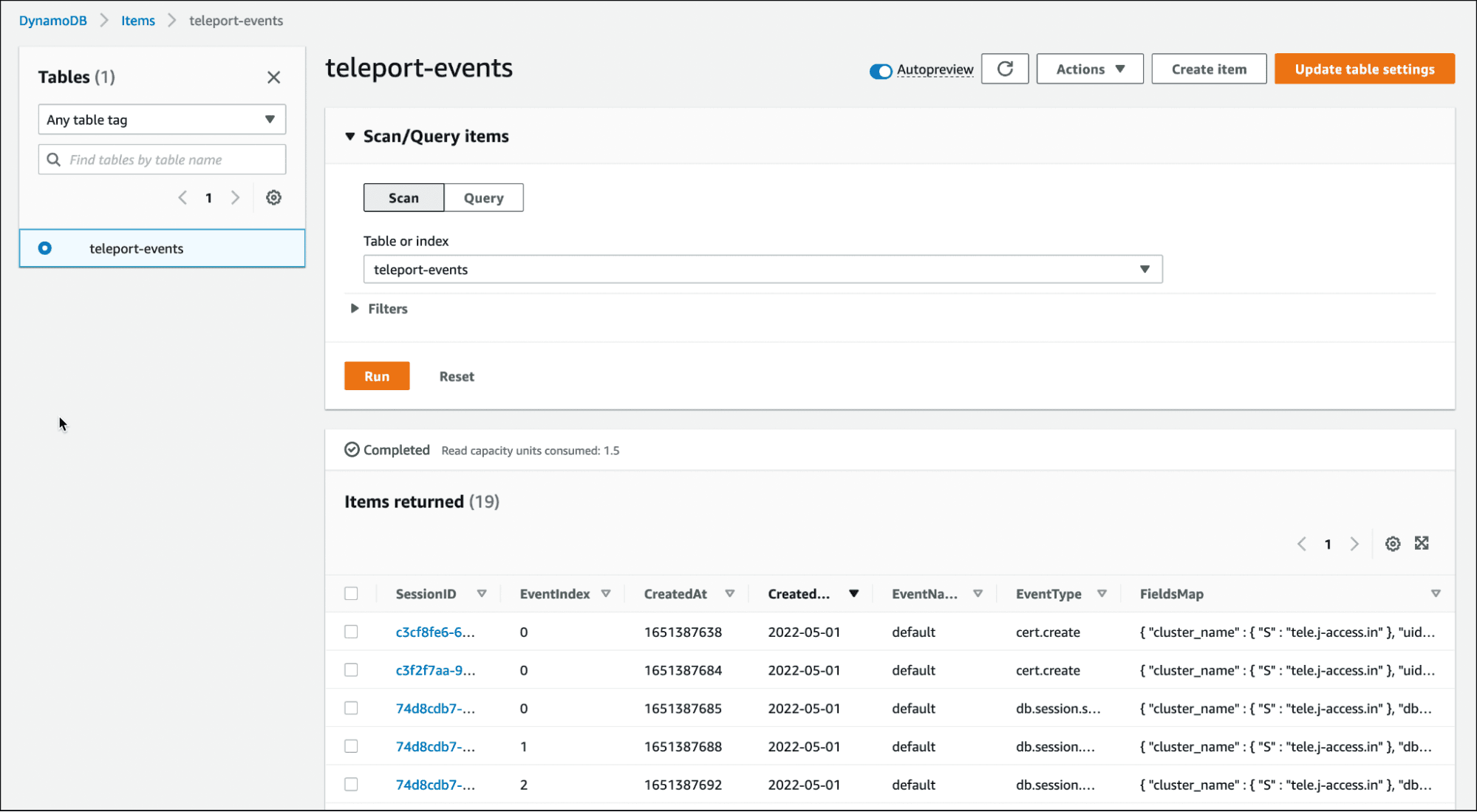

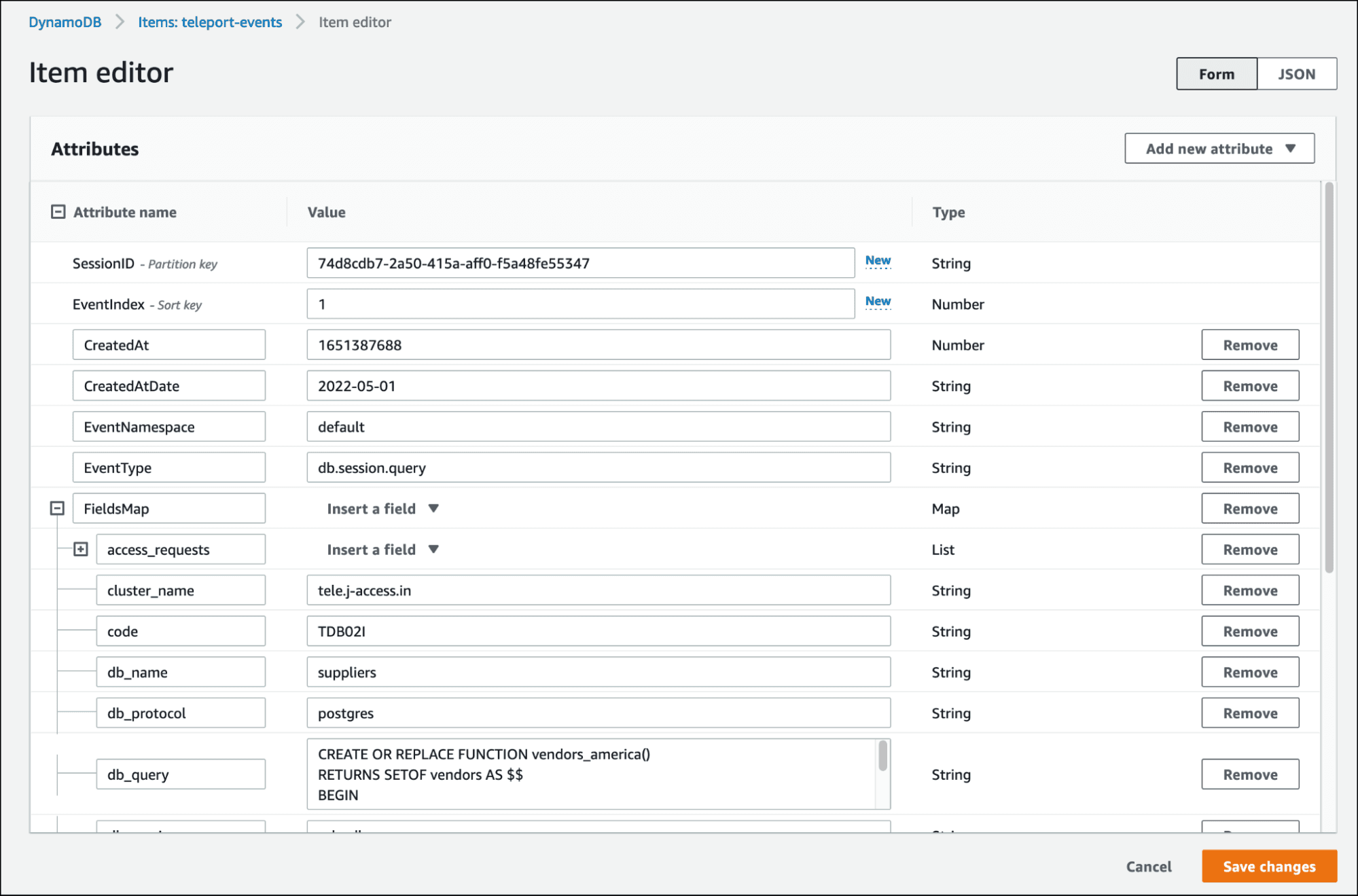

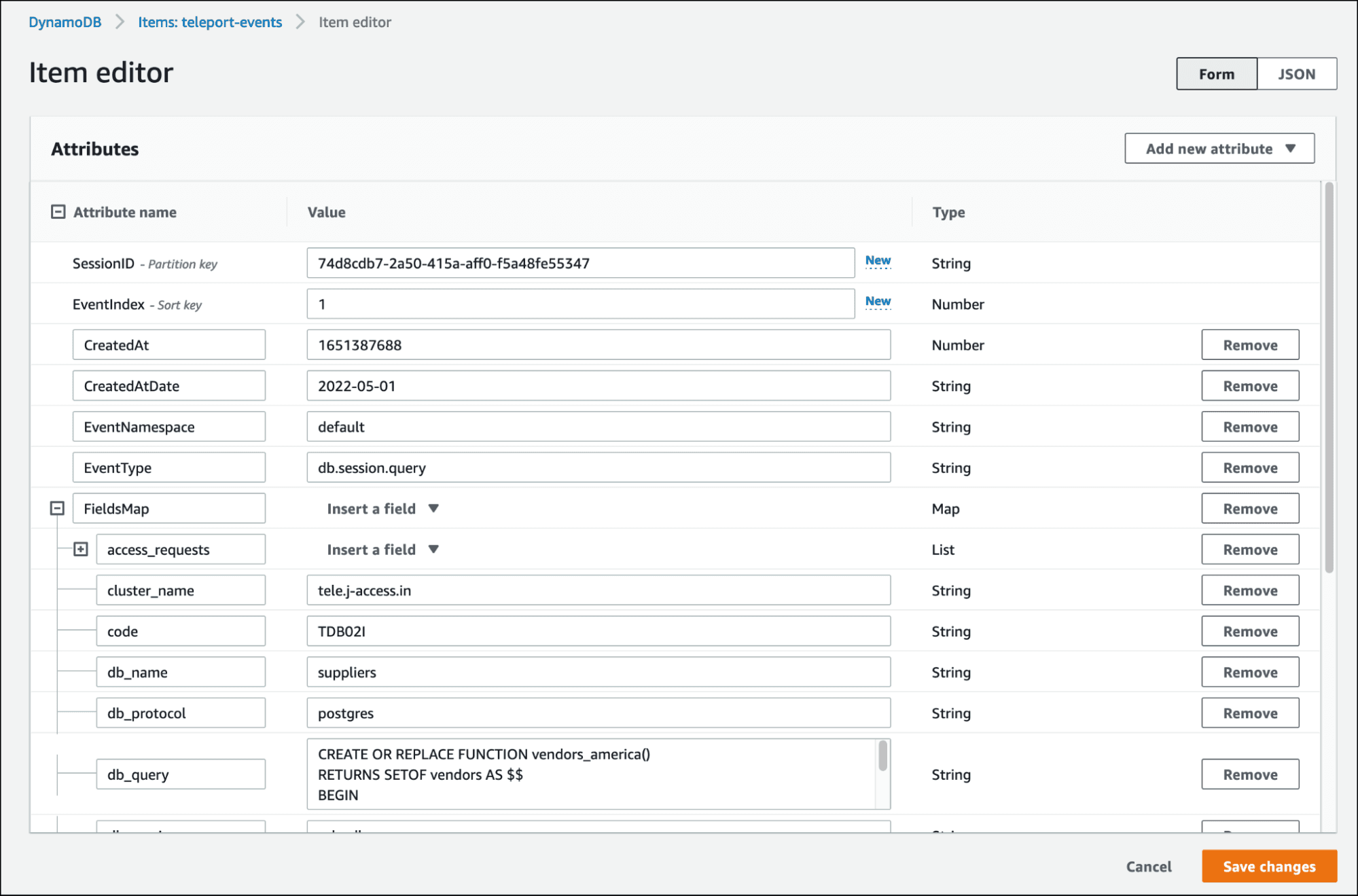

Explore the DynamoDB table, teleport-events, to see the generated event logs.

One of the entries in DynamoDB shows the DDL statement used by John to recreate the dropped function.
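When an item is viewed or exported as DynamoDB JSON, every attribute is wrapped in a type tag such as S (string), N (number), or M (map), which makes the event harder to read. The sketch below unwraps that format using only the standard library. The sample item and its attribute names (SessionID, EventIndex, EventType, FieldsMap) are assumptions for illustration and may not match the layout of your teleport-events table.

```python
def from_dynamodb_json(value):
    """Convert one DynamoDB-typed attribute value into a plain Python value."""
    # Each typed value is a single-key dict such as {"S": "text"}.
    (type_tag, inner), = value.items()
    if type_tag == "S":
        return inner
    if type_tag == "N":
        # DynamoDB transmits numbers as strings.
        try:
            return int(inner)
        except ValueError:
            return float(inner)
    if type_tag == "BOOL":
        return inner
    if type_tag == "NULL":
        return None
    if type_tag == "M":
        return {key: from_dynamodb_json(val) for key, val in inner.items()}
    if type_tag == "L":
        return [from_dynamodb_json(val) for val in inner]
    raise ValueError(f"unsupported DynamoDB type tag: {type_tag}")


# Hypothetical item; attribute names and values are illustrative only.
SAMPLE_ITEM = {
    "SessionID": {"S": "s-1"},
    "EventIndex": {"N": "3"},
    "EventType": {"S": "db.session.query"},
    "FieldsMap": {
        "M": {
            "user": {"S": "john"},
            "db_query": {"S": "drop function vendors_america;"},
        }
    },
}

event = {name: from_dynamodb_json(val) for name, val in SAMPLE_ITEM.items()}
print(event["EventType"], event["FieldsMap"]["db_query"])
```

Only the type tags needed for this example are handled; binary and set types would need additional branches.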

When you access the event logs and session recordings from the command line or the web UI, Teleport fetches them from DynamoDB and the S3 bucket, respectively.

Conclusion

In this tutorial, we explored Teleport audit concepts and the ability to move the DB agent event logs and session recordings to AWS. First, we used roles based on the preset and custom role definitions that provided access to the event logs and session recordings. Next, we extended the configuration to store the logs and recordings in Amazon DynamoDB and Amazon S3, which makes the data highly available and durable. Session recording with Teleport is more granular than with AWS Systems Manager.

Sign up for Teleport Cloud today!

Teleport Cloud offers a fully managed Teleport cluster so that you can quickly get started with Teleport and start protecting access to database servers instantly. We also recommend joining our Slack channel, where the Teleport community members hang out to discuss Teleport in production.
