Deploy Teleport on Kubernetes

Teleport can provide secure, unified access to your Kubernetes clusters. This guide will show you how to deploy Teleport on a Kubernetes cluster using Helm.

While completing this guide, you will deploy one Teleport pod each for the Auth Service and Proxy Service in your Kubernetes cluster, and a load balancer that forwards outside traffic to your Teleport cluster. Users can then access your Kubernetes cluster via the Teleport cluster running within it.

If you are already running the Teleport Auth Service and Proxy Service on another platform, you can use your existing Teleport deployment to access your Kubernetes cluster. Follow our guide to connect your Kubernetes cluster to Teleport.

Teleport Enterprise Cloud takes care of this setup for you so you can provide secure access to your infrastructure right away.

Get started with a free trial of Teleport Enterprise Cloud.

Prerequisites

- A registered domain name. This is required for Teleport to set up TLS via Let's Encrypt and for Teleport clients to verify the Proxy Service host.

- A Kubernetes cluster hosted by a cloud provider, which is required for the load balancer we deploy in this guide. We recommend following this guide on a non-production cluster to start.

- A persistent volume that the Auth Service can use for storing cluster state. Make sure your Kubernetes cluster has one available:

$ kubectl get pv

If there are no persistent volumes available, you will need to either provide one or enable dynamic volume provisioning for your cluster. For example, in Amazon Elastic Kubernetes Service, you can configure the Elastic Block Store Container Storage Interface driver add-on.

To tell whether you have dynamic volume provisioning enabled, check for the presence of a default StorageClass:

$ kubectl get storageclasses

Launching a fresh EKS cluster with eksctl?

If you are using eksctl to launch a fresh Amazon Elastic Kubernetes Service cluster in order to follow this guide, the following example configuration sets up the EBS CSI driver add-on.

Danger: The example configuration below assumes that you are familiar with how eksctl works, are not using your EKS cluster in production, and understand that you are proceeding at your own risk.

Update the cluster name, version, node group size, and region as required:

apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: my-cluster
  region: us-east-1
  version: "1.23"
iam:
  withOIDC: true
addons:
- name: aws-ebs-csi-driver
  version: v1.11.4-eksbuild.1
  attachPolicyARNs:
  - arn:aws:iam::aws:policy/service-role/AmazonEBSCSIDriverPolicy
managedNodeGroups:
  - name: managed-ng-2
    instanceType: t3.medium
    minSize: 2
    maxSize: 3

- The tsh client tool v14.3.33+ installed on your workstation. You can download this from our installation page.

- Kubernetes >= v1.17.0

- Helm >= 3.4.2

Verify that Helm and Kubernetes are installed and up to date.

$ helm version

# version.BuildInfo{Version:"v3.4.2"}

$ kubectl version

# Client Version: version.Info{Major:"1", Minor:"17+"}

# Server Version: version.Info{Major:"1", Minor:"17+"}
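The minimum-version check above can also be scripted. The following is a minimal sketch, not part of the official instructions: the helper name `version_ge` is hypothetical, and it assumes your workstation's `sort` supports the `-V` (version sort) option.

```shell
# version_ge ACTUAL MINIMUM: succeeds when ACTUAL is at least MINIMUM.
# Hypothetical helper; assumes `sort -V` (version sort) is available.
version_ge() {
  local actual="${1#v}" minimum="${2#v}"   # strip an optional leading "v"
  # The smaller of the two versions sorts first. If that is the minimum,
  # the actual version meets the requirement.
  [ "$(printf '%s\n%s\n' "$minimum" "$actual" | sort -V | head -n 1)" = "$minimum" ]
}

# Example with literal versions taken from the prerequisites list.
if version_ge "v3.4.2" "3.4.2"; then
  echo "Helm version is recent enough"
fi
```

To check a live installation, you could pass the version string reported by `helm version` as the first argument instead of the literal value.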

This guide sets up Kubernetes access with the broadest set of permissions. This is suitable for a personal demo cluster, but if you plan to set up Kubernetes RBAC for production usage, we recommend getting familiar with the Teleport Kubernetes RBAC guide before you begin.

Step 1/2. Install Teleport

To deploy a Teleport cluster on Kubernetes, you need to:

- Install the teleport-cluster Helm chart, which deploys the Teleport Auth Service and Proxy Service on your Kubernetes cluster.

- Once your cluster is running, create DNS records that clients can use to access your cluster.

Install the teleport-cluster Helm chart

To deploy the Teleport Auth Service and Proxy Service on your Kubernetes cluster, follow the instructions below to install the teleport-cluster Helm chart.

- Set up the Teleport Helm repository.

Allow Helm to install charts that are hosted in the Teleport Helm repository:

$ helm repo add teleport https://charts.releases.teleport.dev

Update the cache of charts from the remote repository so you can upgrade to all available releases:

$ helm repo update

- Create a namespace for Teleport and configure its Pod Security Admission, which enforces security standards on pods in the namespace:

$ kubectl create namespace teleport-cluster
namespace/teleport-cluster created

$ kubectl label namespace teleport-cluster 'pod-security.kubernetes.io/enforce=baseline'
namespace/teleport-cluster labeled

- Set the kubectl context to the namespace to save some typing:

$ kubectl config set-context --current --namespace=teleport-cluster

- Assign clusterName to a subdomain of your domain name, e.g., teleport.example.com.

- Assign email to an email address that you will use to receive notifications from Let's Encrypt, which provides TLS credentials for the Teleport Proxy Service's HTTPS endpoint.

-

Create a Helm values file:

- Open Source

- Enterprise

Write a values file (

teleport-cluster-values.yaml) which will configure a single node Teleport cluster and provision a cert using ACME.$ cat << EOF > teleport-cluster-values.yaml

clusterName: clusterName

proxyListenerMode: multiplex

acme: true

acmeEmail: email

EOFThe Teleport Auth Service reads a license file to authenticate your Teleport Enterprise account.

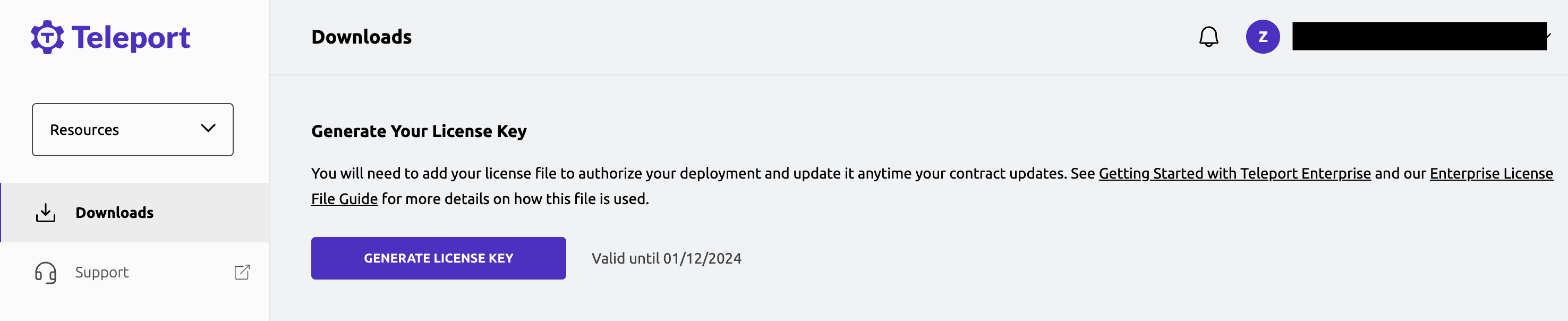

To obtain your license file, navigate to your Teleport account and enter your account name (e.g.,

my-license). After logging in, click the "DOWNLOAD LICENSE KEY" button to download your license file.

Ensure that your license is saved to your terminal's working directory at the path

license.pem.Using your license file, create a secret called "license" in the

teleport-clusternamespace:$ kubectl create secret generic license --from-file=license.pem

secret/license createdWrite a values file (

teleport-cluster-values.yaml) which will configure a single node Teleport cluster and provision a cert using ACME.$ cat << EOF > teleport-cluster-values.yaml

clusterName: clusterName

proxyListenerMode: multiplex

acme: true

acmeEmail: email

enterprise: true

EOF -

Install the

teleport-clusterHelm chart using the values file you wrote:$ helm install teleport-cluster teleport/teleport-cluster \

--create-namespace \

--version 14.3.33 \

--values teleport-cluster-values.yaml -

After installing the

teleport-clusterchart, wait a minute or so and ensure that both the Auth Service and Proxy Service pods are running:$ kubectl get pods

NAME READY STATUS RESTARTS AGE

teleport-cluster-auth-000000000-00000 1/1 Running 0 114s

teleport-cluster-proxy-0000000000-00000 1/1 Running 0 114s
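Instead of waiting a minute and re-running kubectl get pods by hand, the wait could be scripted. This is a minimal sketch under stated assumptions: the helper name `wait_for_teleport` is hypothetical, and the deployment names teleport-cluster-auth and teleport-cluster-proxy are inferred from the pod names shown above.

```shell
# Hypothetical helper: block until both Teleport deployments finish rolling
# out, or fail once the timeout expires.
# Assumption: the chart creates deployments named teleport-cluster-auth and
# teleport-cluster-proxy (inferred from the pod names in this guide).
wait_for_teleport() {
  local namespace="${1:-teleport-cluster}" timeout="${2:-120s}"
  local deployment
  for deployment in teleport-cluster-auth teleport-cluster-proxy; do
    kubectl rollout status "deployment/${deployment}" \
      --namespace "${namespace}" --timeout="${timeout}" || return 1
  done
}
```

Calling `wait_for_teleport` with no arguments uses the teleport-cluster namespace and a two-minute timeout per deployment. Pass a namespace and a duration such as `300s` to override them.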

Set up DNS records

In this section, you will enable users and services to connect to your cluster by creating DNS records that point to the address of your Proxy Service.

The teleport-cluster Helm chart exposes the Proxy Service to traffic from the internet using a Kubernetes service that sets up an external load balancer with your cloud provider.

Obtain the address of your load balancer by following the instructions below.

- Get information about the Proxy Service load balancer:

$ kubectl get services/teleport-cluster
NAME               TYPE           CLUSTER-IP   EXTERNAL-IP   PORT(S)         AGE
teleport-cluster   LoadBalancer   10.4.4.73    192.0.2.0     443:31204/TCP   89s

The teleport-cluster service directs traffic to the Teleport Proxy Service. Notice the EXTERNAL-IP field, which shows you the IP address or domain name of the cloud-hosted load balancer. For example, on AWS, you may see a domain name resembling the following:

00000000000000000000000000000000-0000000000.us-east-2.elb.amazonaws.com

- Set up two DNS records: teleport.example.com for all traffic and *.teleport.example.com for any web applications you will register with Teleport. We are assuming that your domain name is example.com and teleport is the subdomain you have assigned to your Teleport cluster.

Depending on whether the EXTERNAL-IP column above points to an IP address or a domain name, the records will have the following details:

IP Address:

Record Type   Domain Name              Value
A             teleport.example.com     The IP address of your load balancer
A             *.teleport.example.com   The IP address of your load balancer

Domain Name:

Record Type   Domain Name              Value
CNAME         teleport.example.com     The domain name of your load balancer
CNAME         *.teleport.example.com   The domain name of your load balancer

- Once you create the records, use the following command to confirm that your Teleport cluster is running:

$ curl https://clusterName/webapi/ping

{"auth":{"type":"local","second_factor":"on","preferred_local_mfa":"webauthn","allow_passwordless":true,"allow_headless":true,"local":{"name":""},"webauthn":{"rp_id":"teleport.example.com"},"private_key_policy":"none","device_trust":{},"has_motd":false},"proxy":{"kube":{"enabled":true,"listen_addr":"0.0.0.0:3026"},"ssh":{"listen_addr":"[::]:3023","tunnel_listen_addr":"0.0.0.0:3024","web_listen_addr":"0.0.0.0:3080","public_addr":"teleport.example.com:443"},"db":{"mysql_listen_addr":"0.0.0.0:3036"},"tls_routing_enabled":false},"server_version":"14.3.33","min_client_version":"12.0.0","cluster_name":"teleport.example.com","automatic_upgrades":false}
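The choice between A and CNAME records described above can be expressed as a small script. This is an illustrative sketch only: the function names `dns_record_type` and `print_dns_records` are hypothetical, the IPv4 check is a simple pattern match rather than full validation, and IPv6 addresses are not handled.

```shell
# Hypothetical helper: print the record type for a load balancer address.
# An IPv4-looking address gets an A record; anything else is treated as a
# domain name and gets a CNAME record.
dns_record_type() {
  if printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'; then
    echo "A"
  else
    echo "CNAME"
  fi
}

# Hypothetical helper: print the two records this guide asks you to create,
# as tab-separated "type, name, value" lines.
print_dns_records() {
  local domain="$1" address="$2" type
  type="$(dns_record_type "$address")"
  printf '%s\t%s\t%s\n' "$type" "$domain" "$address"
  printf '%s\t%s\t%s\n' "$type" "*.$domain" "$address"
}

# Example using the placeholder values from this guide.
print_dns_records teleport.example.com 192.0.2.0
```

The output is only a checklist of what to enter with your DNS provider; creating the records still happens in your provider's console or API.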

Step 2/2. Create a local user

While we encourage Teleport users to authenticate via their single sign-on provider, local users are a reliable fallback for cases when the SSO provider is down.

In this section, we will create a local user who has access to Kubernetes group system:masters via the Teleport role member. This user also has the built-in access and editor roles for administrative privileges.

- Save the following role specification to a file called member.yaml:

kind: role
version: v6
metadata:
  name: member
spec:
  allow:
    kubernetes_groups: ["system:masters"]
    kubernetes_labels:
      '*': '*'
    kubernetes_resources:
      - kind: 'pod'
        namespace: '*'
        name: '*'

- Create the role:

$ kubectl exec -i deployment/teleport-cluster-auth -- tctl create -f < member.yaml
role 'member' has been created

- Create the user and generate an invite link:

$ kubectl exec -ti deployment/teleport-cluster-auth -- tctl users add myuser --roles=member,access,editor
User "myuser" has been created but requires a password. Share this URL with the user to
complete user setup, link is valid for 1h:
https://tele.example.com:443/web/invite/abcd123-insecure-do-not-use-this

NOTE: Make sure tele.example.com:443 points at a Teleport proxy which users can access.

- Visit the invite link and follow the instructions in the Web UI to activate your user.

- Try tsh login with your local user:

$ tsh login --proxy=clusterName:443 --user=myuser

- Once you're connected to the Teleport cluster, list the available Kubernetes clusters for your user:

$ tsh kube ls
Kube Cluster Name Selected
----------------- --------
tele.example.com

- Log in to the Kubernetes cluster. The tsh client tool updates your local kubeconfig to point to your Teleport cluster, so we will assign KUBECONFIG to a temporary value during the installation process. This way, if something goes wrong, you can easily revert to your original kubeconfig:

$ KUBECONFIG=$HOME/teleport-kubeconfig.yaml tsh kube login clusterName

$ KUBECONFIG=$HOME/teleport-kubeconfig.yaml kubectl get -n teleport-cluster pods
NAME                                      READY   STATUS    RESTARTS   AGE
teleport-cluster-auth-000000000-00000     1/1     Running   0          26m
teleport-cluster-proxy-0000000000-00000   1/1     Running   0          26m
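The temporary KUBECONFIG works because a variable assignment placed in front of a command applies to that one command only. The sketch below illustrates this shell behavior, using `sh -c` as a stand-in for tsh and kubectl so that it runs without a cluster. The variable names `before`, `seen`, and `after` are for illustration only.

```shell
# Remember whatever KUBECONFIG is set to in the current shell (possibly empty).
before="${KUBECONFIG-}"

# The assignment prefix applies to this one command only. `sh -c` stands in
# for tsh or kubectl here and simply prints the value it was given.
seen="$(KUBECONFIG="$HOME/teleport-kubeconfig.yaml" sh -c 'printf "%s" "$KUBECONFIG"')"
echo "command saw: ${seen}"

# The calling shell's value is unchanged, so kubectl commands run later
# without the prefix still use your original kubeconfig.
after="${KUBECONFIG-}"
if [ "$before" = "$after" ]; then
  echo "shell KUBECONFIG unchanged"
fi
```

If you would rather keep using the Teleport kubeconfig for a whole terminal session, you could export KUBECONFIG in that session instead, at the cost of having to unset it to go back.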

Troubleshooting

If you are experiencing errors connecting to the Teleport cluster, check the status of the Auth Service and Proxy Service pods. A successful state should show both pods running as below:

$ kubectl get pods -n teleport-cluster

NAME READY STATUS RESTARTS AGE

teleport-cluster-auth-5f8587bfd4-p5zv6 1/1 Running 0 48s

teleport-cluster-proxy-767747dd94-vkxz6 1/1 Running 0 48s

If a pod's status is Pending, use the kubectl logs and kubectl describe commands for that pod to check the status. The Auth Service pod relies on being able to allocate a Persistent Volume Claim, and may enter a Pending state if no Persistent Volume is available.

The output of kubectl get events --sort-by='.metadata.creationTimestamp' -A can also be useful, showing the most recent events taking place inside the Kubernetes cluster.
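The checks above can be collected into one helper. This is a sketch rather than an official diagnostic tool: the function name `debug_pending_pods` is hypothetical, and it assumes the teleport-cluster namespace used throughout this guide.

```shell
# Hypothetical helper: gather the information that typically explains why a
# pod is stuck in Pending. Defaults to the namespace used in this guide.
debug_pending_pods() {
  local namespace="${1:-teleport-cluster}"
  # List only the pods whose phase is Pending.
  kubectl get pods --namespace "${namespace}" --field-selector=status.phase=Pending
  # A claim that is not Bound may explain a Pending Auth Service pod.
  kubectl get persistentvolumeclaims --namespace "${namespace}"
  # Events for this namespace, oldest first, so the latest appear last.
  kubectl get events --namespace "${namespace}" --sort-by='.metadata.creationTimestamp'
}
```

Run `debug_pending_pods` with no arguments for the teleport-cluster namespace, or pass another namespace name as the first argument.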

Next steps

- Set up Single Sign-On: In this guide, we showed you how to create a local user, which is appropriate for demo environments. For a production deployment, you should set up Single Sign-On with your provider of choice. See our Single Sign-On guides for how to do this.

- Configure your Teleport deployment: To see all of the options you can set in the values file for the teleport-cluster Helm chart, consult our reference guide.

- Fine-tune your Kubernetes RBAC: While the user you created in this guide can access the system:masters role, you can set up Teleport's RBAC to enable fine-grained controls for accessing Kubernetes resources. See our Kubernetes Access Controls Guide for more information.