Export Teleport Audit Events to Panther

Panther is a cloud-native security analytics platform. In this guide, we'll explain how to forward Teleport audit events to Panther using Fluentd.

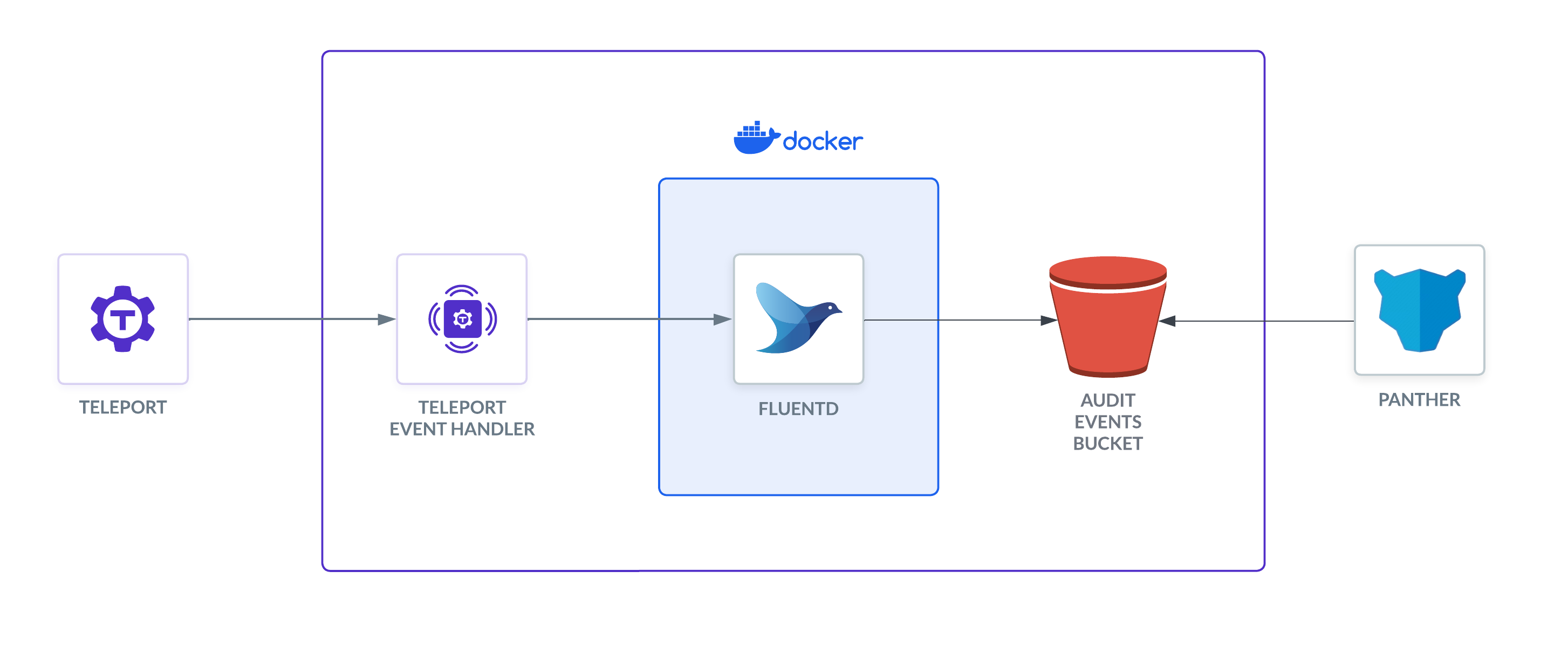

How it works

The Teleport Event Handler is designed to communicate with Fluentd using mTLS to establish a secure channel. In this setup, the Event Handler sends events to Fluentd, which forwards them to S3 to be ingested by Panther.

Prerequisites

- A running Teleport cluster version 16.4.7 or above. If you want to get started with Teleport, sign up for a free trial or set up a demo environment.

- The tctl admin tool and tsh client tool. Visit Installation for instructions on downloading tctl and tsh.

- Recommended: Configure Machine ID to provide short-lived Teleport credentials to the plugin. Before following this guide, follow a Machine ID deployment guide to run the tbot binary on your infrastructure.

- A Panther account.

- A server, virtual machine, Kubernetes cluster, or Docker environment to run the Event Handler. The instructions below assume a local Docker container for testing.

- Fluentd version v1.12.4 or greater. The Teleport Event Handler will create a new fluent.conf file you can integrate into an existing Fluentd system, or use with a fresh setup.

- An S3 bucket to store the logs. Panther will ingest the logs from this bucket.

The instructions below demonstrate a local test of the Event Handler plugin on a VM. You will need to adjust paths, ports, and domains for other environments.

- To check that you can connect to your Teleport cluster, sign in with tsh login, then verify that you can run tctl commands using your current credentials. For example:

$ tsh login --proxy=teleport.example.com --user=email@example.com

$ tctl status

# Cluster teleport.example.com

# Version 16.4.7

# CA pin sha256:abdc1245efgh5678abdc1245efgh5678abdc1245efgh5678abdc1245efgh5678

If you can connect to the cluster and run the tctl status command, you can use your current credentials to run subsequent tctl commands from your workstation. If you host your own Teleport cluster, you can also run tctl commands on the computer that hosts the Teleport Auth Service for full permissions.

Step 1/7. Install the Event Handler plugin

The Teleport Event Handler runs alongside the Fluentd forwarder, receives events from Teleport's events API, and forwards them to Fluentd. Install it using one of the following methods: Linux, macOS, Docker, Helm, or building from source with Go.

On Linux, the Event Handler plugin is provided as amd64 and arm64 binaries. Replace ARCH with your CPU architecture (amd64 or arm64):

$ curl -L -O https://cdn.teleport.dev/teleport-event-handler-v16.4.7-linux-ARCH-bin.tar.gz

$ tar -zxvf teleport-event-handler-v16.4.7-linux-ARCH-bin.tar.gz

$ sudo ./teleport-event-handler/install

On macOS, the Event Handler plugin is also provided as amd64 and arm64 binaries. Replace ARCH with your CPU architecture (amd64 or arm64):

$ curl -L -O https://cdn.teleport.dev/teleport-event-handler-v16.4.7-darwin-ARCH-bin.tar.gz

$ tar -zxvf teleport-event-handler-v16.4.7-darwin-ARCH-bin.tar.gz

$ sudo ./teleport-event-handler/install

To run the Event Handler with Docker, ensure that you have Docker installed and running, then pull the plugin image:

$ docker pull public.ecr.aws/gravitational/teleport-plugin-event-handler:16.4.7

To install the Event Handler with Helm, first allow Helm to install charts hosted in the Teleport Helm repository by running helm repo add:

$ helm repo add teleport https://charts.releases.teleport.dev

To update the cache of charts from the remote repository, run helm repo update:

$ helm repo update

To build the Event Handler from source, you will need Go 1.22 or later installed. Run the following commands on the host where you will run the Event Handler:

$ git clone https://github.com/gravitational/teleport.git --depth 1 -b branch/v16

$ cd teleport/integrations/event-handler

$ make build

The resulting executable will have the name event-handler. To follow the rest of this guide, rename this file to teleport-event-handler and move it to /usr/local/bin.

Step 2/7. Generate a plugin configuration

Choose the instructions that match your deployment: Cloud-Hosted, Self-Hosted, Helm Chart, or Local Docker test.

If you use Teleport Enterprise Cloud, run the configure command to generate a sample configuration. Replace mytenant.teleport.sh with the DNS name of your Teleport Enterprise Cloud tenant:

$ teleport-event-handler configure . mytenant.teleport.sh:443

If you self-host Teleport, run the configure command to generate a sample configuration. Replace teleport.example.com:443 with the DNS name and HTTPS port of Teleport's Proxy Service:

$ teleport-event-handler configure . teleport.example.com:443

If you plan to deploy the Event Handler with the Helm chart, run the configure command in a Docker container to generate a sample configuration. Assign TELEPORT_CLUSTER_ADDRESS to the DNS name and port of your Teleport Auth Service or Proxy Service:

$ TELEPORT_CLUSTER_ADDRESS=mytenant.teleport.sh:443

$ docker run -v `pwd`:/opt/teleport-plugin -w /opt/teleport-plugin public.ecr.aws/gravitational/teleport-plugin-event-handler:16.4.7 configure . ${TELEPORT_CLUSTER_ADDRESS?}

To export audit events, the Helm chart needs the root certificate and the client credentials available as a Kubernetes secret. Use the following command to create that secret:

$ kubectl create secret generic teleport-event-handler-client-tls --from-file=ca.crt=ca.crt,client.crt=client.crt,client.key=client.key

This packs the contents of ca.crt, client.crt, and client.key into the secret so the Helm chart can mount them at their appropriate paths.

For a local Docker test, run the configure command to generate a sample configuration:

$ docker run -v `pwd`:/opt/teleport-plugin -w /opt/teleport-plugin public.ecr.aws/gravitational/teleport-plugin-event-handler:16.4.7 configure .

You'll see the following output:

Teleport event handler 16.4.7

[1] mTLS Fluentd certificates generated and saved to ca.crt, ca.key, server.crt, server.key, client.crt, client.key

[2] Generated sample teleport-event-handler role and user file teleport-event-handler-role.yaml

[3] Generated sample fluentd configuration file fluent.conf

[4] Generated plugin configuration file teleport-event-handler.toml

The plugin generates several setup files:

$ ls -l

# -rw------- 1 bob bob 1038 Jul 1 11:14 ca.crt

# -rw------- 1 bob bob 1679 Jul 1 11:14 ca.key

# -rw------- 1 bob bob 1042 Jul 1 11:14 client.crt

# -rw------- 1 bob bob 1679 Jul 1 11:14 client.key

# -rw------- 1 bob bob 541 Jul 1 11:14 fluent.conf

# -rw------- 1 bob bob 1078 Jul 1 11:14 server.crt

# -rw------- 1 bob bob 1766 Jul 1 11:14 server.key

# -rw------- 1 bob bob 260 Jul 1 11:14 teleport-event-handler-role.yaml

# -rw------- 1 bob bob 343 Jul 1 11:14 teleport-event-handler.toml

| File(s) | Purpose |
|---|---|
| ca.crt and ca.key | Self-signed CA certificate and private key for Fluentd |
| server.crt and server.key | Fluentd server certificate and key, signed by the generated CA |
| client.crt and client.key | Fluentd client certificate and key, signed by the generated CA |
| teleport-event-handler-role.yaml | User and role resource definitions for Teleport's Event Handler |
| fluent.conf | Fluentd plugin configuration |
| teleport-event-handler.toml | Event Handler plugin configuration |

Running the Event Handler separately from the log forwarder

This guide assumes that you are running the Event Handler on the same host or Kubernetes pod as your log forwarder. If you are not, you will need to instruct the Event Handler to generate mTLS certificates for subjects besides localhost. To do this, use the --cn and --dns-names flags of the teleport-event-handler configure command.

For example, if your log forwarder is addressable at forwarder.example.com and the Event Handler at handler.example.com, you would run the following configure command:

$ teleport-event-handler configure --cn=handler.example.com --dns-names=forwarder.example.com

The command generates client and server certificates with the subjects set to the value of --cn.

The --dns-names flag accepts a comma-separated list of DNS names. For each DNS name in the list, it appends a subject alternative name (SAN) to the server certificate (the one you will provide to your log forwarder). The Event Handler looks up each DNS name before appending it as a SAN and exits with an error if the lookup fails.
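The lookup rule described above can be sketched in Python to make the fail-fast behavior concrete. This is an illustration only, not the Event Handler's code (which is written in Go). The helper names parse_dns_names and validate_dns_names are hypothetical, and the resolver is a parameter so the sketch can be checked without network access.

```python
import socket
from typing import Callable, List


def parse_dns_names(flag_value: str) -> List[str]:
    """Split a comma-separated --dns-names value into names, ignoring blanks."""
    return [name.strip() for name in flag_value.split(",") if name.strip()]


def validate_dns_names(
    names: List[str],
    resolve: Callable[[str], object] = socket.gethostbyname,
) -> List[str]:
    """Resolve every name before it would become a SAN; stop at the first failure.

    Hypothetical helper: it mimics the documented behavior by raising
    SystemExit as soon as one lookup fails.
    """
    for name in names:
        try:
            resolve(name)
        except OSError as err:  # socket.gaierror is a subclass of OSError
            raise SystemExit(f"DNS lookup failed for {name!r}: {err}") from err
    return names
```

In practice, this means every name you pass to --dns-names must already resolve from the machine where you run the configure command.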

Step 3/7. Create a user and role for reading audit events

The teleport-event-handler configure command generated a file called teleport-event-handler-role.yaml. This file defines a teleport-event-handler role and a user with read-only access to the event API:

kind: role
metadata:
  name: teleport-event-handler
spec:
  allow:
    rules:
      - resources: ['event', 'session']
        verbs: ['list','read']
version: v5
---
kind: user
metadata:
  name: teleport-event-handler
spec:
  roles: ['teleport-event-handler']
version: v2

Move this file to your workstation (or recreate it by pasting the snippet above) and use tctl on your workstation to create the role and the user:

$ tctl create -f teleport-event-handler-role.yaml

# user "teleport-event-handler" has been created

# role 'teleport-event-handler' has been created

Step 4/7. Create teleport-event-handler credentials

Enable issuing of credentials for the Event Handler role

Follow the instructions for the credential method you use: Machine ID or long-lived identity files.

If you use Machine ID, now that the role exists, allow the Machine ID bot to produce credentials for this role. You can do this with tctl, replacing my-bot with the name of your bot:

$ tctl bots update my-bot --add-roles teleport-event-handler

If you use long-lived identity files, note that the Event Handler plugin needs signed credentials from the cluster's certificate authority in order to forward events from your Teleport cluster. The teleport-event-handler user cannot request these credentials itself, so another user must impersonate this account to request them.

Create a role that enables your user to impersonate the teleport-event-handler user. First, paste the following YAML document into a file called teleport-event-handler-impersonator.yaml:

kind: role
version: v5
metadata:
  name: teleport-event-handler-impersonator
spec:
  options:
    # max_session_ttl defines the TTL (time to live) of SSH certificates
    # issued to the users with this role.
    max_session_ttl: 10h

  # This section declares a list of resource/verb combinations that are
  # allowed for the users of this role. By default nothing is allowed.
  allow:
    impersonate:
      users: ["teleport-event-handler"]
      roles: ["teleport-event-handler"]

Next, create the role:

$ tctl create teleport-event-handler-impersonator.yaml

Assign the teleport-event-handler-impersonator role to the Teleport user that will generate signed credentials for the Event Handler. Run the commands for your authentication provider: Local User, GitHub, SAML, or OIDC.

If you authenticate as a local user:

- Retrieve your local user's roles as a comma-separated list:

$ ROLES=$(tsh status -f json | jq -r '.active.roles | join(",")')

- Edit your local user to add the new role:

$ tctl users update $(tsh status -f json | jq -r '.active.username') \
  --set-roles "${ROLES?},teleport-event-handler-impersonator"

- Sign out of the Teleport cluster and sign in again to assume the new role.
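The jq pipeline above reads the active roles from tsh status -f json and appends the new role. The sketch below shows the same transformation in Python. It assumes the JSON shape implied by the jq filters (an active object with username and roles fields). The roles_with helper is hypothetical and, unlike the shell version, does not add the role a second time if the user already has it.

```python
import json


def roles_with(status_json: str, new_role: str) -> str:
    """Build the --set-roles value: the user's current roles plus new_role."""
    roles = list(json.loads(status_json)["active"]["roles"])
    if new_role not in roles:  # avoid listing the same role twice
        roles.append(new_role)
    return ",".join(roles)
```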

If you authenticate with GitHub:

- Open your github authentication connector in a text editor:

$ tctl edit github/github

- Edit the github connector, adding teleport-event-handler-impersonator to the teams_to_roles section. The team you should map to this role depends on how you have designed your organization's role-based access controls (RBAC). However, the team must include your user account and should be the smallest team possible within your organization.

Here is an example:

  teams_to_roles:
    - organization: octocats
      team: admins
      roles:
        - access
+       - teleport-event-handler-impersonator

- Apply your changes by saving and closing the file in your editor.

- Sign out of the Teleport cluster and sign in again to assume the new role.

If you authenticate with SAML:

- Retrieve your saml configuration resource:

$ tctl get --with-secrets saml/mysaml > saml.yaml

Note that the --with-secrets flag adds the value of spec.signing_key_pair.private_key to the saml.yaml file. Because this key contains a sensitive value, you should remove the saml.yaml file immediately after updating the resource.

- Edit saml.yaml, adding teleport-event-handler-impersonator to the attributes_to_roles section. The attribute you should map to this role depends on how you have designed your organization's role-based access controls (RBAC). However, the group must include your user account and should be the smallest group possible within your organization.

Here is an example:

  attributes_to_roles:
    - name: "groups"
      value: "my-group"
      roles:
        - access
+       - teleport-event-handler-impersonator

- Apply your changes:

$ tctl create -f saml.yaml

- Sign out of the Teleport cluster and sign in again to assume the new role.

If you authenticate with OIDC:

- Retrieve your oidc configuration resource:

$ tctl get oidc/myoidc --with-secrets > oidc.yaml

Note that the --with-secrets flag adds the value of spec.signing_key_pair.private_key to the oidc.yaml file. Because this key contains a sensitive value, you should remove the oidc.yaml file immediately after updating the resource.

- Edit oidc.yaml, adding teleport-event-handler-impersonator to the claims_to_roles section. The claim you should map to this role depends on how you have designed your organization's role-based access controls (RBAC). However, the group must include your user account and should be the smallest group possible within your organization.

Here is an example:

  claims_to_roles:
    - name: "groups"
      value: "my-group"
      roles:
        - access
+       - teleport-event-handler-impersonator

- Apply your changes:

$ tctl create -f oidc.yaml

- Sign out of the Teleport cluster and sign in again to assume the new role.

Export an identity file for the Event Handler plugin user

Give the plugin access to a Teleport identity file. We recommend using Machine ID for this in order to produce short-lived identity files that are less dangerous if exfiltrated. In demo deployments, you can generate longer-lived identity files with tctl instead. Follow the instructions for Machine ID or for long-lived identity files.

If you use Machine ID, configure tbot with an output that will produce the credentials needed by the plugin. As the plugin will be accessing the Teleport API, the correct output type to use is identity.

For this guide, the directory destination will be used. This will write these credentials to a specified directory on disk. Ensure that this directory can be written to by the Linux user that tbot runs as, and that it can be read by the Linux user that the plugin will run as.

Modify your tbot configuration to add an identity output.

If running tbot on a Linux server, use the directory output to write identity files to the /opt/machine-id directory:

outputs:
  - type: identity
    destination:
      type: directory
      # For this guide, /opt/machine-id is used as the destination directory.
      # You may wish to customize this. Multiple outputs cannot share the same
      # destination.
      path: /opt/machine-id

If running tbot on Kubernetes, write the identity file to a Kubernetes secret instead:

outputs:
  - type: identity
    destination:
      type: kubernetes_secret
      name: teleport-event-handler-identity

If operating tbot as a background service, restart it. If running tbot in one-shot mode, execute it now.

You should now see an identity file under /opt/machine-id or a Kubernetes secret named teleport-event-handler-identity. This contains the private key and signed certificates needed by the plugin to authenticate with the Teleport Auth Service.

If you use long-lived identity files, request credentials with tctl. Like all Teleport users, teleport-event-handler needs signed credentials in order to connect to your Teleport cluster. You will use the tctl auth sign command to request these credentials.

The following tctl auth sign command impersonates the teleport-event-handler user, generates signed credentials, and writes an identity file to the local directory:

$ tctl auth sign --user=teleport-event-handler --out=identity

The plugin connects to the Teleport Auth Service's gRPC endpoint over TLS. The identity file, identity, includes both TLS and SSH credentials. The plugin uses the SSH credentials to connect to the Proxy Service, which establishes a reverse tunnel connection to the Auth Service. The plugin uses this reverse tunnel, along with your TLS credentials, to connect to the Auth Service's gRPC endpoint.

Certificate Lifetime

By default, tctl auth sign produces certificates with a relatively short lifetime. For production deployments, we suggest using Machine ID to programmatically issue and renew certificates for your plugin. See our Machine ID getting started guide to learn more.

Note that you cannot issue certificates that are valid longer than your existing credentials. For example, to issue certificates with a 1000-hour TTL, you must be logged in with a session that is valid for at least 1000 hours. This means your user must have a role allowing a max_session_ttl of at least 1000 hours (60000 minutes), and you must specify a --ttl when logging in:

$ tsh login --proxy=teleport.example.com --ttl=60060
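Here is the arithmetic behind these numbers. tsh login --ttl is expressed in minutes, so 1000 hours is 60000 minutes, and the example adds one hour of headroom to reach 60060. The small Python sketch below makes the conversion and the "cannot outlive your own session" rule explicit. The function names are hypothetical.

```python
from datetime import timedelta


def login_ttl_minutes(cert_ttl: timedelta, headroom: timedelta = timedelta(hours=1)) -> int:
    """Minutes to pass to tsh login --ttl so the session outlives the certificate you plan to issue."""
    return int((cert_ttl + headroom) / timedelta(minutes=1))


def can_issue(cert_ttl: timedelta, session_ttl: timedelta) -> bool:
    """A certificate cannot be valid longer than the credentials used to issue it."""
    return cert_ttl <= session_ttl
```

For example, login_ttl_minutes(timedelta(hours=1000)) evaluates to 60060, which matches the --ttl value in the command above.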

If you are running the plugin on a Linux server, create a data directory to hold certificate files for the plugin, then move the identity file into it:

$ sudo mkdir -p /var/lib/teleport/api-credentials

$ sudo mv identity /var/lib/teleport/api-credentials

If you are running the plugin on Kubernetes, create a Kubernetes secret that contains the Teleport identity file:

$ kubectl -n teleport create secret generic --from-file=identity teleport-event-handler-identity

Once the Teleport credentials expire, you will need to renew them by running the tctl auth sign command again.

Step 5/7. Create a Dockerfile with Fluentd and the S3 plugin

To send logs to Panther, you need to use the Fluentd output plugin for S3. Create a Dockerfile with the following content:

FROM fluent/fluentd:edge

USER root

RUN fluent-gem install fluent-plugin-s3

USER fluent

Build the Docker image:

$ docker build -t fluentd-s3 .

If you're running Fluentd in a local Docker container for testing, you can adjust the entrypoint to an interactive shell as the root user, so you can test the setup.

$ docker run -u $(id -u root):$(id -g root) -p 8888:8888 -v $(pwd):/keys \
  -v $(pwd)/fluent.conf:/fluentd/etc/fluent.conf --entrypoint=/bin/sh -i --tty fluentd-s3

Configure Fluentd for Panther

The teleport-event-handler configure command generated a fluent.conf file in Step 2. Update this file to send logs to Panther by adding <filter> and <match> sections. These sections filter and format the logs before sending them to S3. The record_transformer filter matters here because it converts each event's date and time into the format Panther expects.
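The record_transformer expression in the filter below formats each event's time in UTC, at second precision, with a literal Z suffix. The following Python sketch reproduces that format string so you can see the shape of the value Panther receives. panther_time is a hypothetical helper. It assumes timezone-aware input, because Python's astimezone treats naive datetimes as local time.

```python
from datetime import datetime, timezone


def panther_time(ts: datetime) -> str:
    """Format an event time like ${time.utc.strftime("%Y-%m-%dT%H:%M:%SZ")}."""
    # Convert to UTC first; the format string then drops sub-second precision.
    return ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
```

For example, an event stamped 2022-09-21 18:51:04.849 UTC becomes 2022-09-21T18:51:04Z.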

<!--

# Below code is commented out as it's autogenerated in Step 2 by teleport-event-handler

fluent.conf

This is a sample configuration file for Fluentd to send logs to S3.

Created by the Teleport Event Handler plugin.

Add the <filter> and <match> sections to the file.

<source>

@type http

port 8888

<transport tls>

client_cert_auth true

ca_path "/keys/ca.crt"

cert_path "/keys/server.crt"

private_key_path "/keys/server.key"

private_key_passphrase "AUTOGENERATED"

</transport>

<parse>

@type json

json_parser oj

# This time format is used by Teleport Event Handler.

time_type string

time_format %Y-%m-%dT%H:%M:%S

</parse>

# If the number of events is high, fluentd will start failing the ingestion

# with the following error message: buffer space has too many data errors.

# The following configuration prevents data loss in case of a restart and

# overcomes the limitations of the default fluentd buffer configuration.

# This configuration is optional.

# See https://docs.fluentd.org/configuration/buffer-section for more details.

<buffer>

@type file

flush_thread_count 8

flush_interval 1s

chunk_limit_size 10M

queue_limit_length 16

retry_max_interval 30

retry_forever true

</buffer>

</source>

-->

<filter test.log>
  @type record_transformer
  enable_ruby true
  <record>
    time ${time.utc.strftime("%Y-%m-%dT%H:%M:%SZ")}
  </record>
</filter>

<match test.log>
  @type s3
  aws_key_id REPLACE_aws_access_key
  aws_sec_key REPLACE_aws_secret_access_key
  s3_bucket REPLACE_s3_bucket
  s3_region us-west-2
  path teleport/logs
  <buffer>
    @type file
    path /var/log/fluent/buffer/s3-events
    timekey 60
    timekey_wait 0
    timekey_use_utc true
    chunk_limit_size 256m
  </buffer>
  time_slice_format %Y%m%d%H%M%S
  <format>
    @type json
  </format>
</match>

<match session.*>
  @type stdout
</match>
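To see where objects land in the bucket, the sketch below combines the timekey 60 buffer setting (one chunk window per UTC minute) with time_slice_format and the path parameter. It assumes fluent-plugin-s3's documented default object key format, %{path}%{time_slice}_%{index}.%{file_extension}, and the default gzip compression (.gz). Check the plugin documentation for your version. Under that assumption, path teleport/logs without a trailing slash produces keys such as teleport/logs20220921185100_0.gz rather than objects under a teleport/logs/ prefix. This matters when you point Panther at a bucket prefix. The function names are hypothetical.

```python
from datetime import datetime, timezone


def timekey_start(ts: datetime, timekey_seconds: int = 60) -> datetime:
    """Start of the UTC buffer window (timekey) that an event falls into."""
    epoch = int(ts.timestamp())
    return datetime.fromtimestamp(epoch - epoch % timekey_seconds, tz=timezone.utc)


def s3_object_key(path: str, ts: datetime, index: int = 0, extension: str = "gz") -> str:
    """Assumed default key layout: %{path}%{time_slice}_%{index}.%{file_extension}."""
    time_slice = timekey_start(ts).strftime("%Y%m%d%H%M%S")  # time_slice_format
    return f"{path}{time_slice}_{index}.{extension}"
```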

Start the Fluentd container:

$ docker run -p 8888:8888 -v $(pwd):/keys -v $(pwd)/fluent.conf:/fluentd/etc/fluent.conf fluentd-s3

This will start the Fluentd container and expose port 8888 for the Teleport Event Handler to send logs to.
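Before starting the Event Handler, you can check the mTLS setup by posting one test event yourself. The Python sketch below mirrors what the Event Handler does: it trusts only the generated ca.crt, presents client.crt and client.key, and POSTs JSON to a Fluentd HTTP URL. Fluentd's HTTP input uses the URL path as the event tag, so a request to /test.log is tagged test.log and passes through the <filter test.log> and <match test.log> sections. The helper names are hypothetical. The sketch also assumes two things: that client.key is not passphrase-protected, and that the server certificate is valid for the host you connect to (localhost in the default setup).

```python
import http.client
import json
import ssl
from urllib.parse import urlsplit


def fluentd_tag(url: str) -> str:
    """Fluentd's HTTP input derives the event tag from the URL path."""
    return urlsplit(url).path.lstrip("/")


def make_mtls_context(ca: str, cert: str, key: str) -> ssl.SSLContext:
    """Verify Fluentd against the generated CA and present the client certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca)
    ctx.load_cert_chain(certfile=cert, keyfile=key)
    return ctx


def send_event(url: str, event: dict, ctx: ssl.SSLContext) -> int:
    """POST one JSON event over mTLS and return the HTTP status code."""
    parts = urlsplit(url)
    conn = http.client.HTTPSConnection(parts.hostname, parts.port or 443, timeout=10, context=ctx)
    try:
        conn.request(
            "POST",
            parts.path,
            body=json.dumps(event).encode("utf-8"),
            headers={"Content-Type": "application/json"},
        )
        return conn.getresponse().status
    finally:
        conn.close()
```

For example, run this from the directory that holds the generated files: send_event("https://localhost:8888/test.log", {"message": "test"}, make_mtls_context("ca.crt", "client.crt", "client.key")). A 2xx status (typically 200) means Fluentd accepted the event. Note that if you use Helm values whose url ends in /events.log, the tag becomes events.log. In that case, the <filter> and <match> patterns in fluent.conf must match that tag instead of test.log.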

Step 6/7. Run the Teleport Event Handler plugin

Configure the Event Handler

In this section, you will configure the Teleport Event Handler for your environment.

Follow the instructions for your deployment method: Linux server or Helm Chart.

On a Linux server, use the teleport-event-handler.toml file generated earlier to configure the Fluentd event handler. This file includes settings similar to the following:

storage = "./storage"

timeout = "10s"

batch = 20

namespace = "default"

# The window size configures the duration of the time window for the event handler

# to request events from Teleport. By default, this is set to 24 hours.

# Reduce the window size if the events backend cannot manage the event volume

# for the default window size.

# The window size should be specified as a duration string, parsed by Go's time.ParseDuration.

window-size = "24h"

[forward.fluentd]

ca = "/home/bob/event-handler/ca.crt"

cert = "/home/bob/event-handler/client.crt"

key = "/home/bob/event-handler/client.key"

url = "https://fluentd.example.com:8888/test.log"

session-url = "https://fluentd.example.com:8888/session"

[teleport]

addr = "example.teleport.com:443"

identity = "identity"

Modify the configuration to replace fluentd.example.com with the domain name of your Fluentd deployment.
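The window-size value uses Go duration syntax, such as 24h, 90m, or 1h30m. If you generate this file from a script, a rough Python approximation of time.ParseDuration can catch typos before the Event Handler does. This sketch is a simplified stand-in, not Go's parser. It returns seconds as a float and accepts only Go's ASCII unit names (ns, us, ms, s, m, h). Like Go, it rejects numbers without a unit, except for a bare 0.

```python
import re

# Seconds per unit for Go's ASCII duration units.
_UNIT_SECONDS = {"ns": 1e-9, "us": 1e-6, "ms": 1e-3, "s": 1.0, "m": 60.0, "h": 3600.0}
# Alternation is ordered, so "ms" is tried before "m" and "10ms" is not read as "10m" + "s".
_COMPONENT = re.compile(r"(\d+(?:\.\d*)?|\.\d+)(ns|us|ms|s|m|h)")


def parse_duration(value: str) -> float:
    """Approximate Go's time.ParseDuration and return the duration in seconds."""
    text, sign = value, 1.0
    if text and text[0] in "+-":
        sign = -1.0 if text[0] == "-" else 1.0
        text = text[1:]
    if text == "0":  # Go accepts a bare zero without a unit
        return 0.0
    if not text:
        raise ValueError(f"invalid duration {value!r}")
    total, pos = 0.0, 0
    while pos < len(text):
        match = _COMPONENT.match(text, pos)
        if match is None:
            raise ValueError(f"invalid duration {value!r}")
        total += float(match.group(1)) * _UNIT_SECONDS[match.group(2)]
        pos = match.end()
    return sign * total
```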

If you use the Helm chart, use the following template to create teleport-plugin-event-handler-values.yaml:

eventHandler:
  storagePath: "./storage"
  timeout: "10s"
  batch: 20
  namespace: "default"
  # The window size configures the duration of the time window for the event handler
  # to request events from Teleport. By default, this is set to 24 hours.
  # Reduce the window size if the events backend cannot manage the event volume
  # for the default window size.
  # The window size should be specified as a duration string, parsed by Go's time.ParseDuration.
  windowSize: "24h"

teleport:
  address: "example.teleport.com:443"
  identitySecretName: teleport-event-handler-identity
  identitySecretPath: identity

fluentd:
  url: "https://fluentd.fluentd.svc.cluster.local/events.log"
  sessionUrl: "https://fluentd.fluentd.svc.cluster.local/session.log"
  certificate:
    secretName: "teleport-event-handler-client-tls"
    caPath: "ca.crt"
    certPath: "client.crt"
    keyPath: "client.key"

persistentVolumeClaim:
  enabled: true

Next, modify the configuration file as follows.

If you run the Event Handler as an executable or Docker container, edit teleport-event-handler.toml:

- addr: Include the hostname and HTTPS port of your Teleport Proxy Service or Teleport Enterprise Cloud account (e.g., teleport.example.com:443 or mytenant.teleport.sh:443).

- identity: Fill this in with the path to the identity file you exported earlier.

- client_key, client_crt, root_cas: Comment these out, since we are not using them in this configuration.

If you use the Helm chart, edit teleport-plugin-event-handler-values.yaml:

- address: Include the hostname and HTTPS port of your Teleport Proxy Service or Teleport Enterprise Cloud tenant (e.g., teleport.example.com:443 or mytenant.teleport.sh:443).

- identitySecretName: Fill in the identitySecretName field with the name of the Kubernetes secret you created earlier.

- identitySecretPath: Fill in the identitySecretPath field with the path of the identity file within the Kubernetes secret. If you have followed the instructions above, this will be identity.

If you are providing credentials to the Event Handler using a tbot binary that runs on a Linux server, make sure the value of identity in the Event Handler configuration is the same as the path of the identity file you configured tbot to generate, /opt/machine-id/identity.

Start the Teleport Event Handler

Start the Teleport Event Handler by following the instructions for your environment: Linux server, Helm chart, or Local Docker container.

On a Linux server, copy the teleport-event-handler.toml file to /etc. Update the settings within the TOML file to match your environment. Make sure to use absolute paths for settings such as identity and storage. Files and directories in use, such as /var/lib/teleport-event-handler, should only be accessible to the system user that runs the teleport-event-handler service.
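One way to audit that requirement is to flag any file that grants access to group or other users. The Python sketch below does this with os.stat. too_permissive is a hypothetical helper, and the check assumes a POSIX system. In the ls -l output earlier, the generated files have mode 0600 (-rw-------), which passes the check.

```python
import os
import stat


def too_permissive(path: str) -> bool:
    """True if group or other users have any read, write, or execute bit on path."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return bool(mode & (stat.S_IRWXG | stat.S_IRWXO))
```

For example, you could loop over ca.crt, client.crt, client.key, server.key, teleport-event-handler.toml, and the identity file, and tighten any path for which too_permissive returns True.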

Next, create a systemd service definition at the path /usr/lib/systemd/system/teleport-event-handler.service with the following content:

[Unit]

Description=Teleport Event Handler

After=network.target

[Service]

Type=simple

Restart=always

ExecStart=/usr/local/bin/teleport-event-handler start --config=/etc/teleport-event-handler.toml --teleport-refresh-enabled=true

ExecReload=/bin/kill -HUP $MAINPID

PIDFile=/run/teleport-event-handler.pid

[Install]

WantedBy=multi-user.target

If you are not using Machine ID to provide short-lived credentials to the Event Handler, you can remove the --teleport-refresh-enabled=true flag.

Enable and start the plugin:

$ sudo systemctl enable teleport-event-handler

$ sudo systemctl start teleport-event-handler

Choose when to start exporting events

You can configure when you would like the Teleport Event Handler to begin exporting events when you run the start command. This example will start exporting from May 5, 2021:

$ teleport-event-handler start --config /etc/teleport-event-handler.toml --start-time "2021-05-05T00:00:00Z"

You can only determine the start time once, when first running the Teleport Event Handler. If you want to change the time frame later, remove the plugin state directory that you specified in the storage field of the handler's configuration file.
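The --start-time value in the example above is an RFC 3339 timestamp in UTC. If you compute it in a script, a small Python check such as the hypothetical parse_start_time below can reject values without a time zone before you pass them to the Event Handler. Because the start time is only honored on the first run, validating it up front can save you from having to clear the storage directory later.

```python
from datetime import datetime, timezone


def parse_start_time(value: str) -> datetime:
    """Parse an RFC 3339 timestamp such as 2021-05-05T00:00:00Z and return it in UTC."""
    # datetime.fromisoformat() only accepts a trailing "Z" from Python 3.11 on,
    # so normalize it to an explicit +00:00 offset first.
    if value.endswith("Z"):
        value = value[:-1] + "+00:00"
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:
        raise ValueError("start time must include a time zone, for example a trailing Z")
    return parsed.astimezone(timezone.utc)


def format_start_time(ts: datetime) -> str:
    """Render a timezone-aware datetime in the same form as the example flag value."""
    return ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
```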

Once the Teleport Event Handler starts, you will see notifications about scanned and forwarded events:

$ sudo journalctl -u teleport-event-handler

DEBU Event sent id:f19cf375-4da6-4338-bfdc-e38334c60fd1 index:0 ts:2022-09-21 18:51:04.849 +0000 UTC type:cert.create event-handler/app.go:140

...

If you use the Helm chart, run the following command on your workstation:

$ helm install teleport-plugin-event-handler teleport/teleport-plugin-event-handler \
  --values teleport-plugin-event-handler-values.yaml \
  --version 16.4.7

For a local Docker container, navigate to the directory where you ran the configure command earlier and execute the following command:

$ docker run --network host -v `pwd`:/opt/teleport-plugin -w /opt/teleport-plugin public.ecr.aws/gravitational/teleport-plugin-event-handler:16.4.7 start --config=teleport-event-handler.toml

This command joins the Event Handler container to the preset host network. The host network uses Docker's host networking mode and removes network isolation, so the Event Handler can communicate with the Fluentd container on localhost.

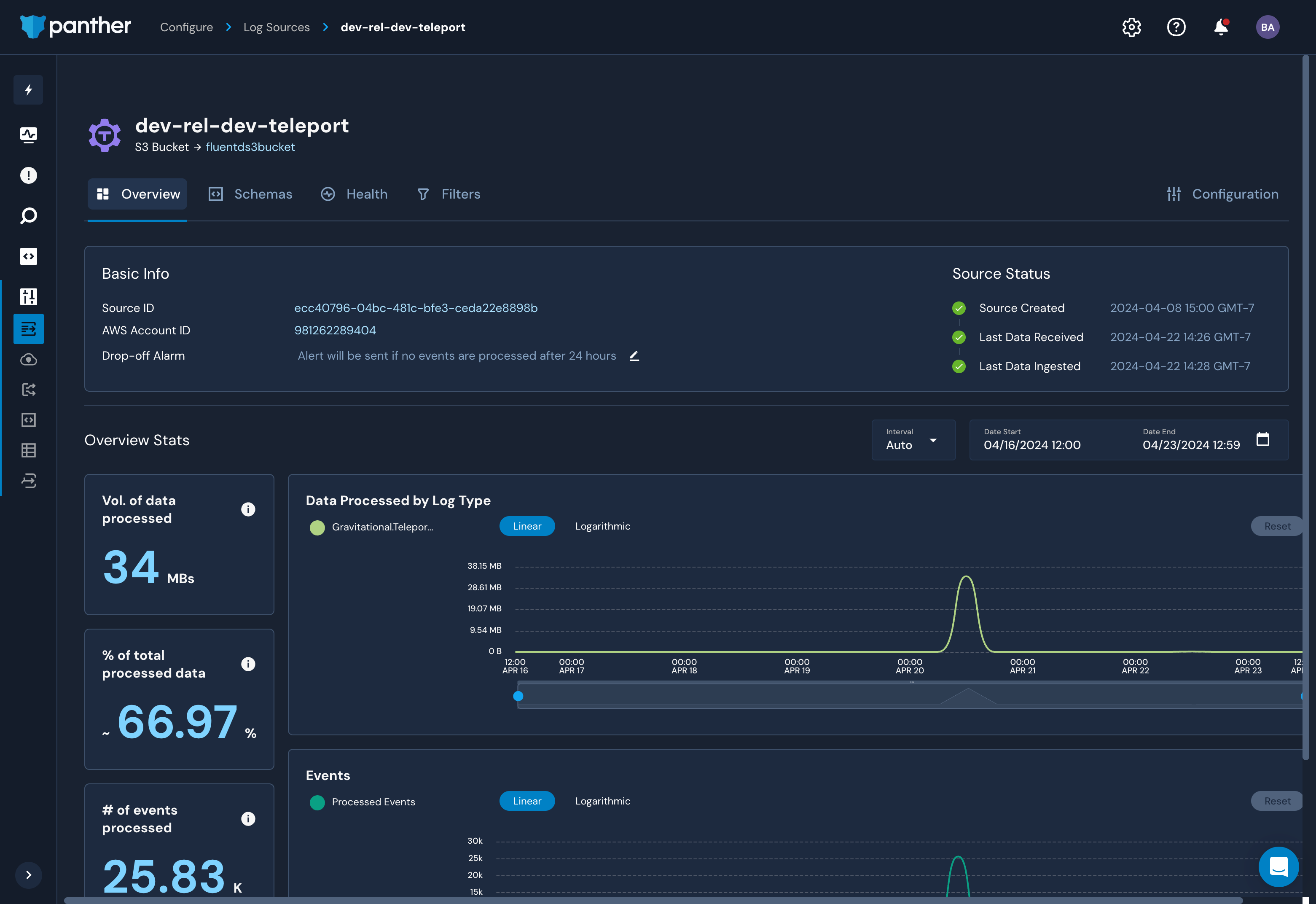

Once Panther is ingesting logs from your S3 bucket (see Step 7), the Logs view in Panther should report your Teleport cluster events.

Step 7/7. Configure Panther to ingest logs from S3

Once logs are being sent to S3, you can configure Panther to ingest them. Follow the Panther documentation to set up the S3 bucket as a data source.

Troubleshooting connection issues

If the Teleport Event Handler is displaying error logs while connecting to your Teleport cluster, ensure that:

- The certificate the Teleport Event Handler is using to connect to your Teleport cluster is not past its expiration date. This is the value of the --ttl flag in the tctl auth sign command, which is 12 hours by default.

- Your Teleport Event Handler configuration file (teleport-event-handler.toml) contains the correct host and port for the Teleport Proxy Service.

- You start the Fluentd container before starting the Teleport Event Handler. The Event Handler will attempt to connect to Fluentd immediately upon startup.
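Because the Event Handler connects to Fluentd as soon as it starts, a startup script can wait until Fluentd accepts TCP connections on port 8888 before launching the handler. The sketch below is a hypothetical helper, not part of the Event Handler. It only checks that the port is open, not that the mTLS handshake succeeds.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll until a TCP connection to host:port succeeds or timeout seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # Open and immediately close a plain TCP connection; no TLS handshake.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

For example, a wrapper script might call wait_for_port("localhost", 8888) and start teleport-event-handler only if it returns True.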

Next steps

- Read more about impersonation in the Teleport documentation.

- Learn more about Panther detections, alerts, and notifications.

- To see all of the options you can set in the values file for the teleport-plugin-event-handler Helm chart, consult our reference guide.