Home - Teleport Blog - How to Prevent Prompt Injection in AI Agents

How to Prevent Prompt Injection in AI Agents

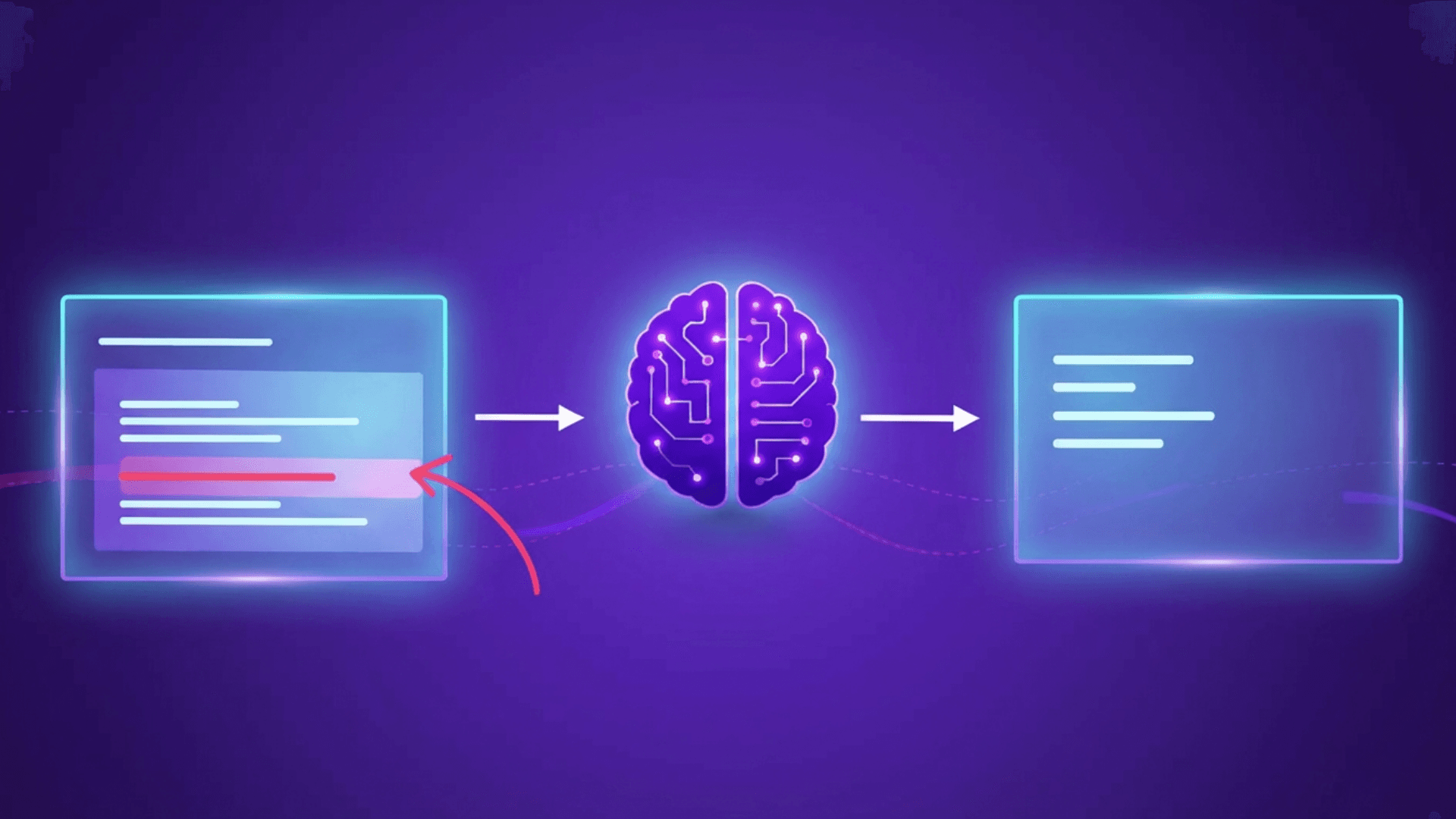

In agentic architectures, model behavior is guided by a combination of system prompts, retrieved context, and tool-related inputs rather than a single instruction source.

When signals conflict or include untrusted instructions, models must infer which inputs to follow. This ambiguity exposes an opening for prompt injection attacks.

Prompt injection can show up as an agent taking an unexpected action, invoking the wrong tool, or following instructions that were never intended to guide its behavior — ultimately influencing agent decisions that are then executed against connected systems.

In this blog post, we’ll examine how prompt injection affects agentic AI systems — including where it creates infrastructure risk and which controls help limit its impact.

What is prompt injection?

Prompt injection is a class of attacks against large language models in which an attacker crafts inputs to manipulate how the model interprets instructions. By embedding malicious instructions within otherwise trusted inputs, attackers may influence model behavior to attempt attacks or exfiltrate data without exploiting a traditional software vulnerability.

Prompt injection exploits how language models interpret and prioritize instructions across multiple sources, a limitation that OWASP classifies as a core architectural risk in LLM applications.

At a technical level, prompt injection exploits how language models process inputs. System prompts, retrieved data, user input, and tool context are merged into a single context. When signals conflict, the model must infer which inputs it should follow. In agentic systems, this ambiguity directly affects how automation interacts with downstream infrastructure.

Model output is used to make operational decisions, including:

- which tools are invoked

- which APIs are called

- which environments are targeted

- which roles or service identities are used

Consider an LLM-powered assistant supporting CI/CD workflows. The agent may review configs, trigger pipelines, or initiate rollbacks using delegated credentials. If injected instructions enter through untrusted context, such as commit messages or pull-request descriptions, the agent may deploy to the wrong environment or execute unintended actions.

In this hypothetical scenario, underlying infrastructure and identity controls are enforcing permissions correctly. The decision to act inappropriately is originating from the manipulated model input.

This is what makes prompt injection operationally significant: the attack does not bypass infrastructure controls. Instead, it steers autonomous systems operating with legitimate access towards committing the attack themselves.

You can read more about prompt injection and other agentic threats in our analysis of OWASP’s Top 10 for Agentic Applications.

Prompt injection examples

In 2025, researchers disclosed a zero-click AI command-injection vulnerability in Microsoft 365 Copilot, known as EchoLeak (CVE‑2025‑32711).

Malicious instructions embedded in an external email were processed as part of Copilot’s context, leading to unauthorized data exfiltration without user interaction. The exploit crossed trust boundaries between external input and internal enterprise data, even though Copilot operated with valid permissions throughout.

A similar pattern appeared in Google Gemini, where attackers embedded hidden instructions in calendar invites and other trusted enterprise inputs. When Gemini processed this data, the injected instructions influenced model behavior and caused unintended exposure of private information. The attack relied on indirect prompt injection and context mixing rather than a traditional software flaw, turning routine enterprise data into an attack vector.

Both incidents demonstrate a similar attack chain

- An attacker embeds instructions into data the system already trusts, such as emails or calendar events.

- That data is processed by an AI system connected to tools or internal services.

- The embedded instructions are used to influence what the system attempts to access or do next.

If the AI is allowed to act autonomously, downstream systems could receive valid requests made under legitimate identities, even though the intent originated from untrusted input.

How agentic AI amplifies prompt injection risk

Agentic AI systems can amplify the impact of prompt injection when model output is executed directly against real infrastructure using delegated identities. For example, agents may select tools, invoke APIs, or perform operations without human review.

This risk of prompt injections in autonomous workflows has been demonstrated in research. A 2023 evaluation of prompt-injection attacks against deployed LLM-integrated applications found that a black-box injection technique successfully compromised 31 of 36 systems, bypassing intended usage constraints and recovering embedded prompts. The failures stemmed from how models interpreted mixed instruction signals, not from flaws in application logic.

In infrastructure workflows, such failures could surface as misdirected actions executed under valid identities.

Injected instructions can influence which identities are used, what access is exercised, and which environments are touched. Even when PAM and role-based access controls (RBAC) function correctly, unintended actions may occur because the agent is operating with approved roles and credentials. As automation increases to improve engineering velocity, the potential blast radius of prompt-injection attacks could grow unless agent access is actively controlled.

Operational steps to mitigate prompt injection threats

Preventing prompt injection in agentic systems starts with controlling how agents execute actions against internal systems. Once agents are allowed to act, risk is defined by what they can access and which operations they are permitted to perform. The following steps focus on reducing that impact.

How to take action against prompt injection in AI workflows:

1. Avoid passing full configs and environment variables into agents

Restrict agent access to commands and helpers like printenv, kubectl get all, terraform show, or internal get_config endpoints that return entire files or account state.

2. Replace broad “dump” endpoints with narrow queries

Update internal tools so agents can request specific values instead of returning full objects, manifests, or JSON blobs.

3. Remove static API keys from agent execution paths where possible

Audit agent workflows for embedded cloud keys, CI tokens, or service credentials. Where short-lived access already exists, switch agents to those mechanisms.

4. Downgrade agent permissions from broad roles to specific actions

Replace broad roles like admin or all-clusters with narrowly defined capabilities tied to specific operations and environments.

5. Add human gates to irreversible actions

Require manual approval for deploys, rollbacks, or infrastructure changes initiated by agents, using existing CI/CD or ticketing workflows.

These steps reduce the risk of prompt-injection compromise, as well highlighting where manual controls and fragmented tooling begin to expose gaps as agentic automation scales.

How to reduce the blast radius of prompt-injection attacks

In many deployments today, agents run using shared service accounts, long-lived tokens, or broadly scoped credentials that infrastructure already trusts.

Giving an agent its own identity changes that model. Instead of inheriting blanket access, the agent authenticates as a specific entity with its own explicitly defined controls.

This makes it possible to limit which systems the agent can touch, which environments it can operate in, and which actions it is allowed to perform. If injected instructions influence the agent’s behavior, the resulting actions would still be checked against those access boundaries before anything reaches production systems.

Once identity is the primary control surface, governing how AI agents authenticate and interact with infrastructure becomes a key part of building resilience against prompt-injection attacks.

Teleport supports these controls in ways that can reduce the impact of prompt injection attacks.

Integrate security into agentic systems — from design to production

Teleport’s Agentic Identity Framework provides a library of primitives, reference architectures, and integration patterns to define strong agent identity, policy-governed access to tools and data, LLM usage controls, and end-to-end auditability for agentic systems. This includes:

- Strong identity for agents to ensure each agent authenticates as a distinct identity rather than using shared service accounts or static tokens. In a prompt-injection scenario, this helps prevent injected instructions from blending into generic automation or inheriting broad, implicit trust. Every action can be traced back to a specific agent identity.

- Ephemeral, least-privileged access to ensure AI access is short-lived and scoped to specific environments and actions. If prompt injection influences an agent’s behavior, the injected instructions cannot extend access, persist beyond credential lifetime, or reach systems outside what policy explicitly allows.

- Runtime authorization and policy enforcement so authorization is enforced at runtime when an agent attempts to act, not when prompts are constructed or interpreted. This ensures that a request generated from manipulated model output cannot bypass access controls, even if the model decides to invoke an unintended tool or API.

- End-to-end auditability so every agent action is logged with identity, role, and target system. If prompt injection leads to unexpected behavior, teams can see exactly what was attempted, under which identity, and against which system, enabling rapid investigation and response.

Related resources

→ Watch: Why Agentic AI Breaks Legacy Identity — and What Infrastructure Leaders Must Do Next

→ Blog: AI Infrastructure Needs an Agentic Identity Framework — We’re Building It

→ Blog: Secure AI Agent Infrastructure with Zero-Code MCP

→ Blog: Securing Identity in the Age of AI

FAQ

What is prompt injection?

Prompt injection is a class of attacks in which untrusted input influences how a language model interprets instructions. In agentic systems, model output may be used to trigger CI/CD pipelines, deployments, or rollbacks using delegated credentials. When injected instructions affect those decisions, prompt injection can result in unintended infrastructure actions executed with valid permissions.

How does agentic AI increase prompt injection risk?

Agentic systems can amplify prompt injection risk when they connect language models to real systems with delegated access. Agents often operate using shared service accounts, long-lived tokens, or broadly scoped permissions. When untrusted input influences agent behavior, infrastructure may receive valid requests that appear authorized, even though the intent originated from manipulated context rather than legitimate instructions.

How can teams reduce prompt injection risk in practice?

Teams can reduce prompt injection risk by governing how agents authenticate and act on infrastructure rather than relying on prompt-level defenses. This includes giving agents distinct identities, issuing short-lived and scoped access, enforcing authorization at execution time, and auditing agent actions.

Table Of Contents

Teleport Newsletter

Stay up-to-date with the newest Teleport releases by subscribing to our monthly updates.

Tags

Subscribe to our newsletter